Two ways to use this template

1. Click "Copy prompt" below.

2. Paste it into Cursor, Claude Code, Codex, or any other coding agent.

3. Your agent builds the app, asking questions along the way so the result matches what you want.

Alternatively, follow the steps below to set things up manually, at your own pace.

Inventory Intelligence

Retail inventory management with AI-powered demand forecasting, replenishment recommendations, and optional Genie analytics. Built on a live medallion pipeline synced to Lakebase.

Includes a working starter app

Real, runnable code lives on GitHub. When you copy the prompt above, your coding agent clones it as the starting point and adapts it to your data and use case.

Repository path: examples/inventory-intelligence/template

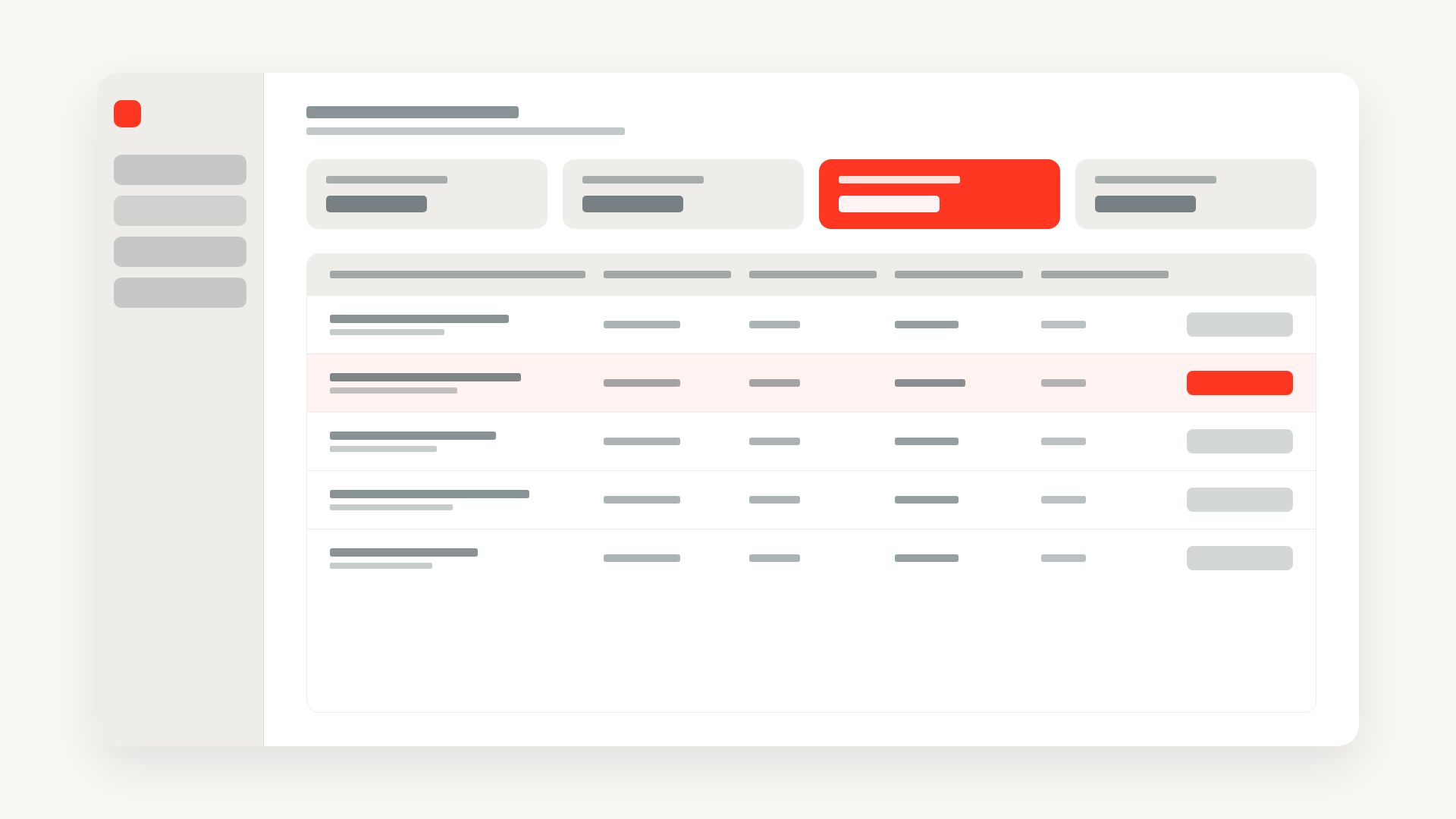

This template builds a full retail inventory management system on the Databricks stack: a React app where store managers monitor stock health, review AI-generated replenishment recommendations, and approve purchase orders, all powered by a live medallion pipeline and a pluggable demand forecast job.

Setup — interview the user

Before doing anything else, ask the user these questions one at a time. Wait for each answer before asking the next. Use the answers to configure databricks.yml, the seed scripts, and the deploy commands.

- Databricks workspace URL: ask "What is your Databricks workspace URL? (e.g. https://dbc-xxxx.cloud.databricks.com; run `databricks auth env` to find it)"

- CLI profile: ask "Which Databricks CLI profile should I use? (run `databricks auth profiles` to list them; press Enter to use `DEFAULT`)"

- Unity Catalog catalog name: ask "What is your Unity Catalog catalog name? The pipeline will write silver and gold Delta tables there (e.g. `my_catalog`)"

- SQL Warehouse ID: ask "What is your SQL Warehouse ID? (run `databricks warehouses list --output json` or find it in the warehouse settings URL; if you don't have one, I can create a serverless warehouse for you)"

- Lakebase: ask "Do you already have a Lakebase project and database set up? If yes, share the branch resource name (e.g. `projects/my-project/branches/production`) and the database resource name. If no, I'll walk you through creating one."

- Data mode: ask "Do you want demo data (5 stores, controlled stock scenarios, great for demos) or realistic randomized data seeded from scratch?"

- Genie analytics tab: ask "Do you want the optional AI/BI Genie chat tab in the app? (If yes, the Genie space is created automatically. This requires running the sample data pipeline first: data generator → DLT analytics → forecast job, ~10–15 min. This happens as part of the deploy.)"

- Demand forecast model: ask "Which demand forecast model would you like? Options: `weighted_moving_average` (default, no extra infra), `exponential_smoothing`, `prophet`, or `model_serving` (requires a Model Serving endpoint)"

Once all answers are collected:

- Update `databricks.yml`: set `workspace.host`, `sql_warehouse_id`, `postgres_branch`, `postgres_database`, `catalog`, and `forecast_model` in the appropriate target(s).

- Run the deploy:

  - Randomized data (with or without Genie): `./deploy.sh --profile <profile> --target full --sample-data`

  - Demo data without Genie: `./deploy.sh --profile <profile> --target demo`

  - Demo data with Genie: first run `./deploy.sh --profile <profile> --target full --sample-data` (this creates the DLT pipeline and the UC gold tables Genie needs), then run `./deploy.sh --profile <profile> --target demo` to load the controlled demo data and wire up the Genie space.

`deploy.sh` handles Genie automatically: it checks whether the UC gold tables exist, runs the sample data pipeline if they don't, creates the Genie space, patches `databricks.yml` with the new space ID (in the correct target section), and redeploys with the Genie resource bound.
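The order of those steps, and the "correct target section" detail, can be sketched in Python. This is a minimal sketch, assuming the bundle config has already been parsed into a dict (for example with a YAML library) and that per-target overrides live under `targets.<name>.variables`; `set_target_variable`, `ensure_genie_space`, and the injected callables are hypothetical stand-ins, not the actual contents of `deploy.sh`.

```python
from copy import deepcopy
from typing import Any, Callable


def set_target_variable(bundle: dict[str, Any], target: str, name: str, value: str) -> dict[str, Any]:
    """Return a copy of the parsed bundle with `name` set under targets.<target>.variables."""
    patched = deepcopy(bundle)
    targets = patched.get("targets") or {}
    if target not in targets:
        raise KeyError(f"target {target!r} not found in bundle config")
    target_cfg = targets[target]
    if target_cfg is None:  # a target declared with no body (e.g. `demo:`) parses as None
        target_cfg = targets[target] = {}
    if target_cfg.get("variables") is None:
        target_cfg["variables"] = {}
    # Only this target is modified; other targets keep their own values
    target_cfg["variables"][name] = value
    return patched


def ensure_genie_space(
    bundle: dict[str, Any],
    target: str,
    gold_tables_exist: Callable[[], bool],
    run_sample_pipeline: Callable[[], None],
    create_genie_space: Callable[[], str],
    redeploy: Callable[[dict[str, Any]], None],
) -> dict[str, Any]:
    """Hypothetical orchestration: all four callables stand in for real CLI/API calls."""
    if not gold_tables_exist():
        run_sample_pipeline()  # data generator -> DLT analytics -> forecast job
    space_id = create_genie_space()
    patched = set_target_variable(bundle, target, "genie_space_id", space_id)
    redeploy(patched)  # redeploy with the Genie resource bound
    return patched
```

Injecting the side-effecting steps as callables keeps the patching logic testable without a workspace; a real script would replace them with CLI or SDK calls.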

If the user needs a new SQL Warehouse, create a serverless one:

databricks warehouses create --profile <profile> --name "inventory-intelligence" \
  --cluster-size Small --auto-stop-mins 30 --max-num-clusters 1 \
  --enable-serverless-compute

Use the `id` field from the response as the warehouse ID.
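If you script this step, a small helper can pull the ID out of the CLI's JSON output. This is a minimal sketch, assuming the output is printed as JSON (for example via the CLI's `--output json` flag) and that each warehouse object carries `id` and `name` fields; `warehouse_id_from_cli_output` is a hypothetical helper name.

```python
import json
from typing import Optional


def warehouse_id_from_cli_output(raw: str, name: Optional[str] = None) -> str:
    """Extract a SQL warehouse ID from Databricks CLI JSON output (assumed shape)."""
    data = json.loads(raw)
    if isinstance(data, dict):
        # Assumed: `warehouses create` prints a single warehouse object
        return data["id"]
    # Assumed: `warehouses list --output json` prints a list of warehouse objects
    matches = [w for w in data if name is None or w.get("name") == name]
    if len(matches) != 1:
        raise ValueError(f"expected exactly one matching warehouse, found {len(matches)}")
    return matches[0]["id"]
```

For example, pass it the `stdout` of `subprocess.run([...], capture_output=True, text=True, check=True)`, and filter by `name="inventory-intelligence"` when reading a list.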

Data Flow

Sales and stock data flow from Lakebase Postgres through the lakehouse, get enriched by a demand forecast model, and are served back to the app through reverse sync:

- OLTP writes land in Lakebase Postgres (stores, products, stock levels, sales transactions, replenishment orders).

- Lakehouse Sync replicates every change into Unity Catalog as CDC history tables (bronze layer).

- A Lakeflow Declarative Pipeline transforms CDC history into current-state silver tables and gold materialized views (inventory overview, low stock alerts, sales velocity).

- A Lakeflow Job runs on a schedule, loads the silver sales history, and runs a pluggable demand forecast model to produce 30-day unit forecasts and replenishment recommendations in a Delta gold table.

- Sync Tables (reverse sync) replicate the gold tables back into Lakebase for low-latency reads.

- The Inventory Intelligence App (Databricks App) reads from both OLTP and synced gold tables to show dashboards, store drill-downs, a replenishment queue, and optional Genie analytics.
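The core idea of the silver step, turning an append-only CDC history into current state, can be illustrated in plain Python. This is a minimal sketch, assuming each history row carries a primary key, a monotonically increasing sequence number, and an operation type; the `ChangeEvent` shape and column names are hypothetical. In a real Lakeflow Declarative Pipeline this is usually declared with the pipeline's CDC APIs (for example `dlt.apply_changes`) rather than hand-written.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class ChangeEvent:
    key: tuple           # primary key of the source row, e.g. (store_id, product_id)
    sequence: int        # commit order (assumed monotonically increasing)
    op: str              # "INSERT", "UPDATE" or "DELETE" (assumed encoding)
    row: dict[str, Any]  # column values after the change


def current_state(history: list[ChangeEvent]) -> dict[tuple, dict[str, Any]]:
    """Keep the latest change per key by sequence; drop keys whose latest change is a delete."""
    latest: dict[tuple, ChangeEvent] = {}
    for event in history:  # events may arrive out of order
        seen = latest.get(event.key)
        if seen is None or event.sequence > seen.sequence:
            latest[event.key] = event
    return {key: e.row for key, e in latest.items() if e.op != "DELETE"}
```

Ordering by sequence rather than arrival order is what keeps the result correct when CDC rows are replayed or land late.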

Design

The app should have a beautiful, polished design — clean typography, consistent spacing, and a professional retail aesthetic. Use shadcn/ui components as the foundation, Tailwind for all styling, and brand colors throughout. Dashboards should feel data-rich but uncluttered; the replenishment queue should make approval workflows feel effortless.

What to Adapt

Provisioning (Unity Catalog schemas, Lakebase REPLICA IDENTITY), seeding, pipeline deploys, reverse sync, and app deploy are documented in the repository's template/README.md alongside the code.

To make this template your own:

- Catalog: Set the `catalog` variable in each pipeline's `databricks.yml` to your Unity Catalog catalog name.

- Lakebase: Point the app's `databricks.yml` at your own Lakebase project, branch, and database.

- Tables: The seed script creates the OLTP schema with 5 stores, 25 products, and 90 days of sales history. After seeding, configure Lakehouse Sync to replicate the `inventory` schema tables.

- Sync Tables: Manually create the three reverse sync configurations (see the README for the exact table mappings).

- Forecast Model: Set the `forecast_model` variable in the demand forecast pipeline to `weighted_moving_average` (default), `exponential_smoothing`, `prophet`, or `model_serving`.

- Genie Space: Create a Genie space over your gold tables and set `genie_space_id` in the app bundle to activate the Analytics tab.
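To illustrate what the two `forecast_model` options that need no extra infrastructure compute, here is a minimal Python sketch. It assumes a per-store, per-product series of daily unit sales; the 7-day window, linear weights, `alpha = 0.3`, and the order-up-to replenishment rule (30-day forecast plus a 7-day safety buffer, minus stock on hand) are illustrative assumptions, not the template's actual parameters. `prophet` and `model_serving` are left out because they need extra dependencies or a serving endpoint.

```python
import math
from typing import Callable, Sequence

HORIZON_DAYS = 30  # the template produces 30-day unit forecasts


def weighted_moving_average(daily_units: Sequence[float], window: int = 7) -> float:
    """Daily demand rate over the last `window` days, weights 1..n (most recent day heaviest)."""
    recent = list(daily_units)[-window:]
    if not recent:
        return 0.0
    weights = range(1, len(recent) + 1)
    return sum(w * x for w, x in zip(weights, recent)) / sum(weights)


def exponential_smoothing(daily_units: Sequence[float], alpha: float = 0.3) -> float:
    """Simple exponential smoothing; the final level is used as a flat daily forecast."""
    if len(daily_units) == 0:
        return 0.0
    level = float(daily_units[0])
    for x in daily_units[1:]:
        level = alpha * x + (1 - alpha) * level
    return level


# Keyed by the values the `forecast_model` variable accepts
FORECAST_MODELS: dict[str, Callable[[Sequence[float]], float]] = {
    "weighted_moving_average": weighted_moving_average,
    "exponential_smoothing": exponential_smoothing,
}


def recommend_order(
    daily_units: Sequence[float],
    on_hand: int,
    forecast_model: str = "weighted_moving_average",
    safety_days: int = 7,
) -> tuple[float, int]:
    """Return (30-day unit forecast, suggested order quantity) under an assumed order-up-to rule."""
    daily_rate = FORECAST_MODELS[forecast_model](daily_units)  # KeyError for unknown models
    forecast_30d = daily_rate * HORIZON_DAYS
    target_stock = forecast_30d + daily_rate * safety_days  # assumed safety buffer
    return forecast_30d, max(0, math.ceil(target_stock - on_hand))
```

A registry keyed by the variable's values is one simple way to keep the model pluggable: adding a model means adding one function and one dictionary entry.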

Built on these templates

This example's codebase and the agent prompt above both build on top of the templates below. Open one to dive into a specific technique on its own or apply it to a different project.

- End-to-end setup for analyzing operational database data in the lakehouse: Unity Catalog with external storage, Lakebase provisioning, Lakehouse Sync CDC replication, and a medallion architecture pipeline with silver and gold layers.

- Wire up a Databricks App with Lakebase for persistent data storage. Includes schema setup and full CRUD API routes.

- Embed a Databricks AI/BI Genie chat interface so users can explore data through natural language. Configure a Genie space, wire up server and client plugins, declare app resources, and deploy.