Databricks Repos Is Now Generally Available, with New Files Feature in Public Preview

Git-based development just got easier. The new Files feature simplifies development, code reuse, and environment management.

Posted by Ka-Hing Cheung and Vaibhav Sethi

Since it became available in public preview, thousands of Databricks users have adopted Databricks Repos to standardize their development and production workflows. Today, Databricks Repos is generally available.

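Databricks Repos was built to solve a problem that data teams face constantly. Many of the tools that data engineers and data scientists use integrate poorly with Git version control, or not at all. Even reviewing and committing code meant navigating numerous files, steps, and UIs. That is not only time-consuming but also error-prone.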

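Repos integrates Databricks directly with popular Git providers at the repository level. This makes it easy for data practitioners to create new Git repositories or clone existing ones, perform Git operations, and follow development best practices.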

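With Databricks Repos, you get access to familiar Git functionality, such as managing branches, pulling remote changes, and reviewing changes before committing, so Git-based development workflows are easy to adopt. Repos also supports a wide range of Git providers, including GitHub, Bitbucket, GitLab, and Microsoft Azure DevOps, and provides a set of APIs for integrating with CI/CD systems.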
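As an illustration of the CI/CD integration mentioned above, here is a minimal sketch of how a pipeline step might ask a workspace to check out a branch in a Repo. It assumes the Repos REST API update endpoint (`PATCH /api/2.0/repos/{repo_id}`) and personal access token authentication. The host, token, and repo ID are placeholders, and `build_repo_update_request` is a hypothetical helper name, not part of any Databricks library. The request is only constructed here, not sent.

```python
import json
import urllib.request


def build_repo_update_request(
    host: str, token: str, repo_id: int, branch: str
) -> urllib.request.Request:
    """Build (but do not send) a request that checks out `branch` in a Repo."""
    url = f"{host.rstrip('/')}/api/2.0/repos/{repo_id}"
    body = json.dumps({"branch": branch}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="PATCH",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


# Placeholder values for illustration only.
request = build_repo_update_request(
    host="https://example.cloud.databricks.com",
    token="<personal-access-token>",
    repo_id=123,
    branch="main",
)
print(request.get_method(), request.full_url)
# A CI job would then send it, for example with urllib.request.urlopen(request).
```

Keeping request construction separate from sending makes the step easy to test in a pipeline without touching a real workspace.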

New: Files in Repos

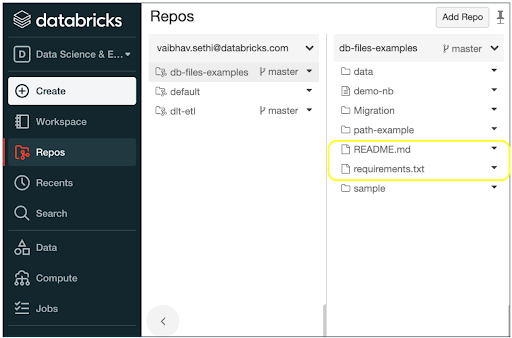

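We are also announcing a new Repos capability that lets you work with non-notebook files on Databricks, such as Python source code, library files, configuration files, environment specification files, and small data files. This feature, called "Files in Repos", makes code reuse, environment management, and deployment automation easier. Users can import (clone), read, and edit files in Databricks Repos just as they would on a local filesystem. The feature is currently available in public preview (as of October 2021).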

Files in Repos provides a simple, standards-compliant development environment. Let's look at how it helps in common development workflows.

Benefits of Files in Repos:

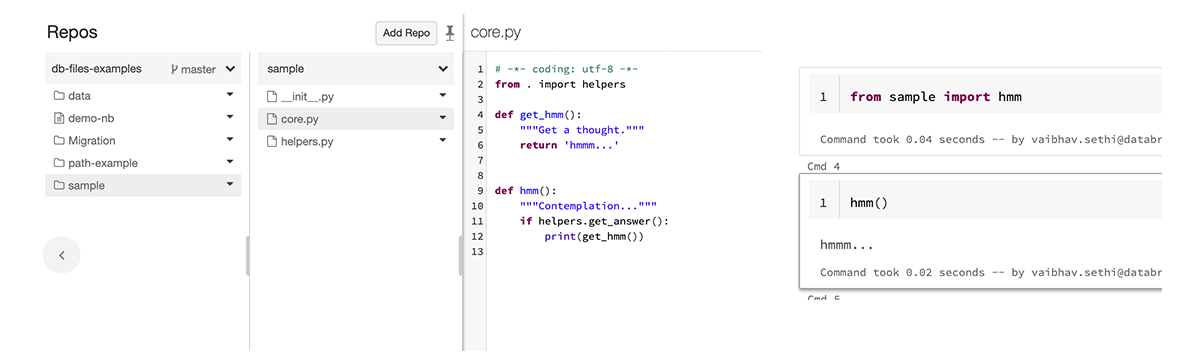

Easier code reuse

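Python and R modules can be placed in a Repo, and notebooks in that Repo can reference their functions with an import statement. You no longer need to create a new notebook for every Python function you want to reference, or to package your module (for example, as a whl in Python) and install it as a cluster library. Files in Repos replaces all of these steps (and more) with a single line of code.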
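To make the "single line of code" concrete, the following sketch simulates a Repo with a temporary directory and imports a function from a plain Python file in it. The module name `repo_text_utils` and the function `normalize` are hypothetical, and the explicit `sys.path` change is an assumption made so the sketch also runs outside Databricks.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Stand-in for a Repo root; in a real Repo the file would already be checked out.
repo_root = Path(tempfile.mkdtemp())
(repo_root / "repo_text_utils.py").write_text(
    "def normalize(name):\n"
    "    return name.strip().lower()\n"
)

# Make the stand-in Repo root importable (assumption: done explicitly for portability).
sys.path.insert(0, str(repo_root))
importlib.invalidate_caches()

# The single line that replaces packaging and installing a library.
from repo_text_utils import normalize

cleaned = normalize("  Databricks Repos ")
print(cleaned)
```

Because the module is an ordinary file under version control, a change to `normalize` is reviewed and shipped through the same Git workflow as the notebooks that use it.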

Automated environment management and production deployment

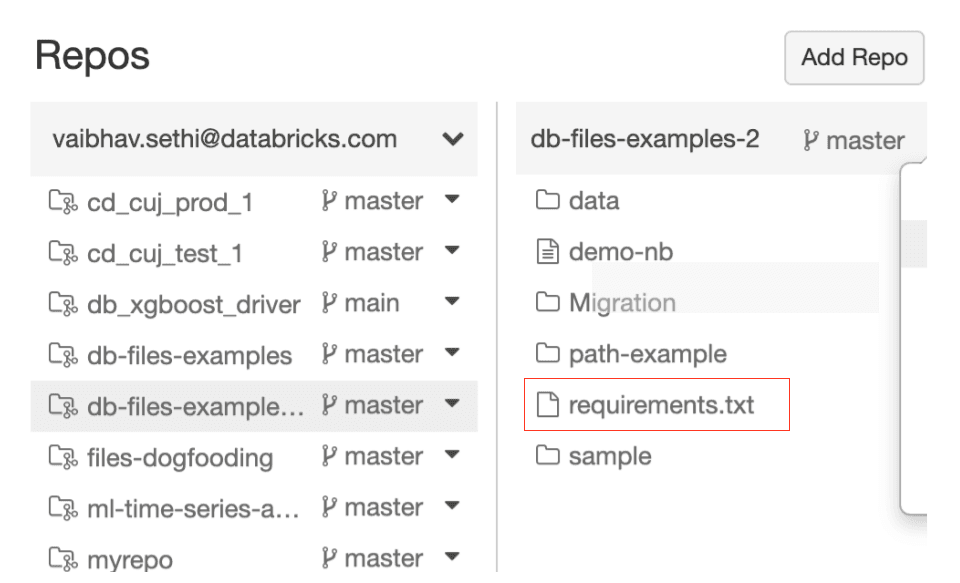

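- Store environment configuration alongside your code: You can keep environment specification files such as requirements.txt in a Repo and install the required library dependencies with %pip install -r requirements.txt. This lightens the burden of managing environments by hand and helps eliminate errors and inconsistencies.

- Automate deployments: You can store Databricks resource configurations, such as jobs and clusters, in a Repo and automate the deployment of those resources, giving you tight control over your production environment.

- Version-control your configuration files: In addition to environment and resource settings, your configuration files may contain algorithm parameters, data inputs for business logic, and more. With Repos, you can make sure the right version of each file is always used by working from the main branch or a specific tag, which helps avoid errors.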
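The points above can be sketched in a few lines: once configuration files live next to the code, a notebook or script can read them with ordinary file I/O. The example below again uses a temporary directory as a stand-in for a Repo. The file name `config.json`, the parameter names, the pinned package versions, and the helper `read_pinned_requirements` are all hypothetical and only serve as illustration.

```python
import json
import tempfile
from pathlib import Path

# Stand-in for a Repo root containing versioned configuration files.
repo_root = Path(tempfile.mkdtemp())
(repo_root / "requirements.txt").write_text(
    "pandas==1.3.3\n# pinned for reproducibility\nscikit-learn==1.0\n"
)
(repo_root / "config.json").write_text(
    json.dumps({"learning_rate": 0.1, "max_depth": 6})
)


def read_pinned_requirements(path: Path) -> dict:
    """Return {package: version} for simple `name==version` lines."""
    pins = {}
    for line in path.read_text().splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        name, sep, version = line.partition("==")
        if sep:
            pins[name.strip()] = version.strip()
    return pins


pins = read_pinned_requirements(repo_root / "requirements.txt")
params = json.loads((repo_root / "config.json").read_text())
print(pins)
print(params["learning_rate"])
```

Since both files are checked out from the same commit as the code, the dependency pins and the algorithm parameters always match the branch or tag being run.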

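In summary, with Databricks, data teams no longer need to build ad hoc processes for versioning code and promoting it to production. Databricks Repos streamlines Git operations for data teams and integrates tightly with established enterprise CI/CD pipelines. The new Files in Repos feature lets you import libraries for better code portability, version-control environment specification files, and edit small data files.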

Get started

Repos is now generally available (GA). You can use it from the Repos button in the sidebar or through the Repos API.

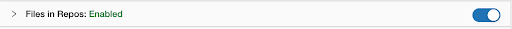

Files in Repos is currently available in public preview. To enable it, go to Admin Panel → Advanced in your Databricks workspace and click "Enable" next to "Files in Repos". See the documentation for details.

In an on-demand webinar, Databricks architect Rafi Kuralisnik explains how Databricks automates each step of the ML lifecycle and simplifies development for data teams. We encourage you to watch it.
