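Item matching is a core function of online marketplaces. To deliver an optimized customer experience, retailers compare new and updated product information against existing listings to ensure consistency and avoid duplication. Online retailers also compare their listings with those of competitors to identify differences in price and inventory. Suppliers that offer products across multiple sites can examine how their goods are presented to ensure consistency with their own standards.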

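The need for effective item matching is not limited to online commerce. For decades, demand signal repositories (DSRs) have combined replenishment order data with point-of-sale (POS) and syndicated market data to give consumer goods manufacturers a complete view of demand. But the value of a DSR is limited if the manufacturer cannot bridge the differences between its own product definitions and the product descriptions used by dozens of retail partners.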

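The challenge in bringing these types of data together has been that reconciling different datasets has always been done manually. In some domains there is a common solution that allows datasets to be linked directly, but in most scenarios there is not. Expert knowledge is needed to decide which items are likely to be a pair and which are distinct. As a result, matching items across datasets is often the most time-consuming step in a complex data project, and the work has to be repeated every time new products are added.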

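The many ongoing attempts to standardize product codes since the 1970s show that this challenge is both universal and difficult. Rule-based and probabilistic (fuzzy) matching techniques have shown that software can perform reasonably effective product matching on imperfect data. In many cases, however, these tools are limited in the data they support and in their capacity for customization and scaling. The arrival of machine learning, big data platforms and the cloud has increased the potential to evolve these techniques and overcome those limitations.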

Calculating product similarity

To illustrate how this calculation works, first consider how product information can be used to match two items. Here we have two listings, one from abt.com and one from buy.com, which the Abt-Buy dataset referenced here identifies as the same product.

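As consumers, we can tell from the names that the two sites are presenting similar products. There is a slight difference between the codes, FDB130WH and FBD130RGS, but by reviewing the product descriptions and technical specifications on the websites we can determine that these are the same appliance. How, though, could a computer perform the same task?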

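First, we split each name into individual words, normalize case, remove punctuation and drop words that can safely be ignored, then treat the remaining elements as an unordered collection of words (a bag). Here, the words for the matched products have been sorted to make visual comparison easier.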
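The preparation steps described above can be sketched in a few lines of Python. This is a minimal illustration, not the pipeline used in the article: the stop-word list is a hypothetical stand-in, and the two product names are made-up examples built around the codes mentioned in the text rather than verbatim listings from the Abt-Buy dataset.

```python
import re

# Hypothetical stop-word list for illustration; a real pipeline would use a fuller one.
STOP_WORDS = {"a", "an", "and", "the", "with", "in", "for"}

def to_bag(name):
    """Lowercase the name, strip punctuation, drop stop words, and sort the words."""
    words = re.findall(r"[a-z0-9]+", name.lower())
    return sorted(w for w in words if w not in STOP_WORDS)

# Illustrative names only (assumed, not quoted from either site).
name_a = 'Frigidaire 24" Built-In Dishwasher - White (FDB130WH)'
name_b = "Frigidaire FBD130RGS 24 in. Built-In Dishwasher, White"

bag_a = to_bag(name_a)
bag_b = to_bag(name_b)

print(bag_a)
print(bag_b)
print(set(bag_a) ^ set(bag_b))  # words that appear in only one of the two bags
```

Sorting is only there to make the two bags easy to compare by eye; the comparison itself does not depend on word order.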

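We can see that most of the words are the same. The only variation is in the product code, and even there it occurs in the last two or three characters. Breaking these words down into character sequences (that is, character-based n-grams) makes it easier to compare the details.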
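As a rough sketch of what character-based n-grams look like, the snippet below splits the two product codes from the text into overlapping three-character sequences. The function name `char_ngrams` and the choice of n = 3 are assumptions made for illustration; the article does not state which n it uses.

```python
def char_ngrams(word, n=3):
    """Return the overlapping character n-grams of a word, in order."""
    return [word[i:i + n] for i in range(len(word) - n + 1)]

grams_a = char_ngrams("fdb130wh")
grams_b = char_ngrams("fbd130rgs")

print(grams_a)  # ['fdb', 'db1', 'b13', '130', '30w', '0wh']
print(grams_b)  # ['fbd', 'bd1', 'd13', '130', '30r', '0rg', 'rgs']
print(sorted(set(grams_a) & set(grams_b)))  # ['130']
```

A smaller n yields more shared sequences between near-identical codes, while a larger n is stricter; which works best depends on the data.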

Each sequence is scored by its frequency within the name and its overall frequency across all product names. Sequences that do not appear in a name are scored as zero.

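This natural language processing (NLP) technique, known as TF-IDF scoring, turns a string comparison problem into a mathematical one. The similarity of two strings can now be measured as the sum of the squared differences between their aligned values, where a smaller value means the strings are closer. For these two strings it is approximately 0.359. Compared with the other potential matches for this product, this value is the lowest, which indicates a likely true match.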
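The scoring and distance calculation can be sketched in plain Python as follows. This is an illustration under stated assumptions, not the article's implementation: it uses character trigrams, one common smoothed form of the IDF weight, and L2-normalized vectors, and the three product names are invented for the example. It should not be expected to reproduce the 0.359 figure, which depends on the full dataset and on the exact weighting used.

```python
import math
from collections import Counter

def char_ngrams(text, n=3):
    """Overlapping character n-grams of a string."""
    return [text[i:i + n] for i in range(len(text) - n + 1)]

def tfidf_vectors(names, n=3):
    """Build L2-normalized TF-IDF vectors over character n-grams (assumed weighting)."""
    counts = [Counter(char_ngrams(name.lower(), n)) for name in names]
    vocab = sorted(set().union(*counts))
    doc_freq = Counter(g for c in counts for g in c)  # names containing each n-gram
    n_docs = len(names)
    # Smoothed inverse document frequency: rarer n-grams receive a larger weight.
    idf = {g: math.log((1 + n_docs) / (1 + doc_freq[g])) + 1 for g in vocab}
    vectors = []
    for c in counts:
        vec = [c[g] * idf[g] for g in vocab]  # absent n-grams score zero
        norm = math.sqrt(sum(v * v for v in vec)) or 1.0
        vectors.append([v / norm for v in vec])
    return vectors

def squared_distance(u, v):
    """Sum of squared differences between aligned values."""
    return sum((a - b) ** 2 for a, b in zip(u, v))

# Illustrative names only (assumed, not taken from the Abt-Buy dataset).
names = [
    "frigidaire 24 built-in dishwasher white fdb130wh",
    "frigidaire fbd130rgs 24 built-in dishwasher white",
    "sony bravia 40 lcd hdtv black kdl40",
]
vecs = tfidf_vectors(names)
d_match = squared_distance(vecs[0], vecs[1])
d_other = squared_distance(vecs[0], vecs[2])
print(round(d_match, 3), round(d_other, 3))
```

Because the vectors are normalized, the squared distance falls between 0 (identical) and 2 (nothing in common), so the likely match is the candidate with the smallest value.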

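This series of steps applied to product names is by no means exhaustive. Knowledge of specific patterns in a given domain may allow for more sophisticated data preparation techniques, but even the simplest techniques can be surprisingly effective.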

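For longer text fields such as product descriptions, word-based n-grams with TF-IDF scoring, or text embeddings that analyze blocks of text for word associations, may offer better scoring approaches. For image data, similar embedding techniques can be applied so that even more information is taken into account. As retailers such as Walmart have demonstrated, any information that helps determine product similarity can be used. It only needs to be converted into a numerical representation from which a distance or a related similarity measure can be derived.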

Dealing with data at scale

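With a basis for determining similarity established, the next challenge is to compare individual products efficiently. To understand the scale of the problem, consider comparing a relatively small dataset of 10,000 products with another of 10,000. An exhaustive comparison requires evaluating 100 million product pairs. With cloud resources available this is not an impossible task, but here we take an efficient shortcut by focusing on the pairs that are more likely to be similar.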
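The arithmetic behind that figure is a simple product of the two dataset sizes, which is why the cost grows so quickly. The helper name `exhaustive_pairs` below is purely illustrative.

```python
def exhaustive_pairs(n_left, n_right):
    """Number of comparisons when every left item is compared with every right item."""
    return n_left * n_right

pairs = exhaustive_pairs(10_000, 10_000)
print(f"{pairs:,}")  # 100,000,000

# A tenfold increase in both datasets multiplies the work by one hundred.
print(f"{exhaustive_pairs(100_000, 100_000):,}")  # 10,000,000,000
```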

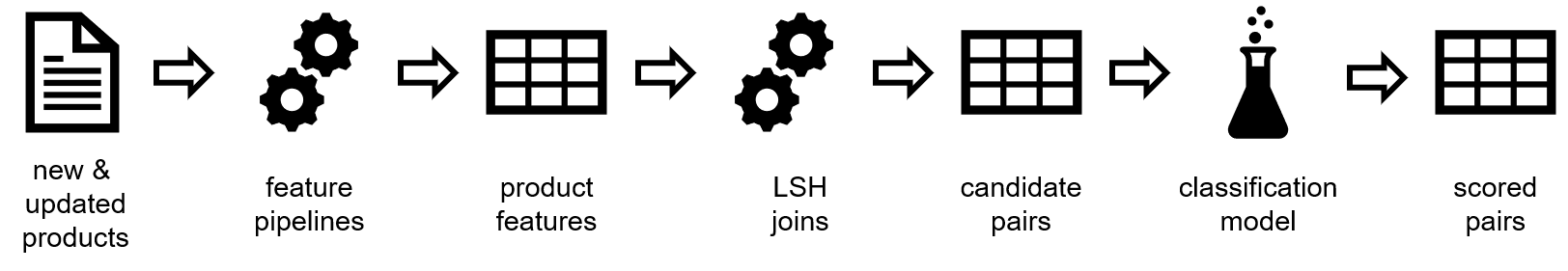

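Locality-sensitive hashing (LSH) is a technique that makes this fast and efficient. The LSH process subdivides the products at random in such a way that products with similar numerical scores tend to land in the same group. Because the subdivision is random, two similar products may end up in different groups, but repeating the process multiple times raises the probability that two similar products land in the same group at least once. That is enough to make them candidates for evaluation.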
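One way to picture this is a random-projection variant of LSH, sketched below in plain Python. It is an assumption that this resembles the method the article has in mind: each "subdivision" projects the vectors onto a random direction and cuts that line into buckets of a fixed width, and the process is repeated over several independent hash tables. The names `make_hash`, `candidate_pairs`, `num_tables` and `bucket_length`, and the toy vectors, are all hypothetical.

```python
import math
import random
from collections import defaultdict

def make_hash(dim, bucket_length, rng):
    """Create one random-projection hash: project onto a random unit direction,
    then cut that line into buckets of width bucket_length."""
    direction = [rng.gauss(0, 1) for _ in range(dim)]
    norm = math.sqrt(sum(d * d for d in direction))
    direction = [d / norm for d in direction]

    def h(vec):
        projection = sum(d * x for d, x in zip(direction, vec))
        return math.floor(projection / bucket_length)

    return h

def candidate_pairs(left, right, num_tables=5, bucket_length=0.5, seed=42):
    """Return (i, j) index pairs that share a bucket in at least one hash table."""
    rng = random.Random(seed)
    dim = len(left[0])
    pairs = set()
    for _ in range(num_tables):  # repeat the random subdivision several times
        h = make_hash(dim, bucket_length, rng)
        buckets = defaultdict(list)
        for j, vec in enumerate(right):
            buckets[h(vec)].append(j)
        for i, vec in enumerate(left):
            for j in buckets.get(h(vec), []):
                pairs.add((i, j))
    return pairs

# Toy vectors standing in for the numeric product representations.
left = [[0.1, 0.9], [0.8, 0.2]]
right = [[0.1, 0.9], [5.0, -4.0]]
pairs = candidate_pairs(left, right)
print(sorted(pairs))
```

Only the candidate pairs returned here would then be scored with the full distance calculation. More tables raise the chance of catching every true match at the cost of more candidates, and a wider bucket has a similar effect.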