Lakehouse for Financial Services Blueprints

Accelerating your path to deploying Lakehouse for Financial Services through automation

by Ricardo Portilla, Antoine Amend and Samir Patel

The Lakehouse architecture has tremendous momentum and is being realized by hundreds of our customers. As a data-driven organization in the regulated industries segment, how often have you wondered whether there is a Lakehouse blueprint tailored to your unique security and industry needs? That blueprint has now arrived.

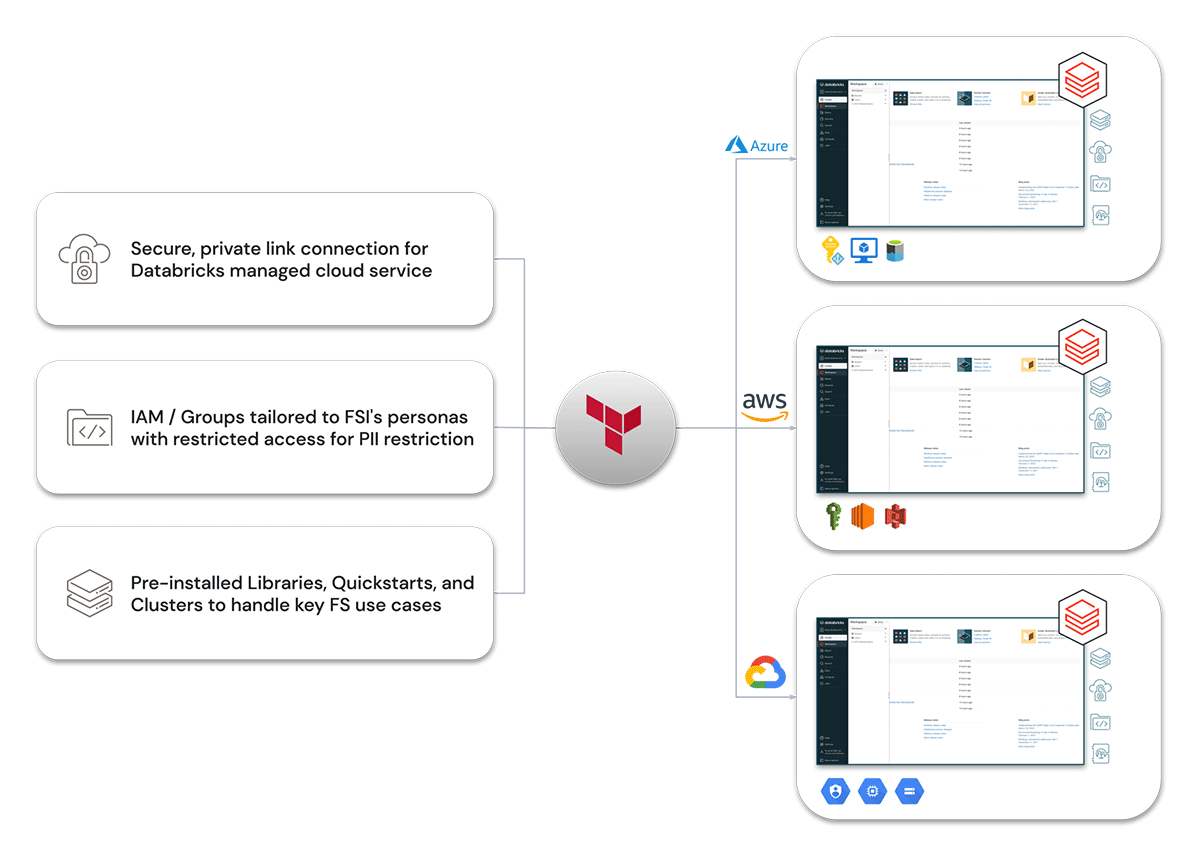

Databricks is excited to introduce a new set of automation templates for deploying a data lakehouse, designed specifically for Financial Services (FS). Lakehouse for FS Blueprints is a set of Terraform templates, specific to Financial Services, that incorporates best practices and patterns from over 600 FS customers. It is tailored for key security and compliance policies and provides FS-specific libraries as quickstarts for key use cases, including Regulatory Reporting and Post-Trade Analytics. You can now be up and running in a matter of minutes instead of weeks or months. All of this work builds upon the widely adopted Databricks Terraform provider, which is deployed at 1,000+ Databricks customers as of this writing.

The core components of the automated deployment templates include:

- Secure connectivity for AWS, Azure and GCP.

- Secure access to external cloud storage (AWS S3, Azure Blob Storage), configured to allow fine-grained access permissions based on the sensitivity of the data.

- Databricks groups tailored to personas across a financial services institution (FSI), with configurable restricted access that is useful for enforcing Personally Identifiable Information (PII) restrictions.

- Pre-installed Libraries, Quickstarts and Clusters to handle key FS use cases, including data quality enforcement, data model schema enforcement and time series ETL packages.

Let's walk through the key capabilities of the Lakehouse for FS Blueprints in more detail and see how they accelerate your journey to deploying the Lakehouse architecture.

Security

FSIs have to deal with an increasing number and sophistication of security threats, as well as a continuously evolving regulatory landscape — and all of this is happening as the sheer volume (and importance) of data grows. For FSIs, ensuring data security, privacy and compliance is absolutely critical. Databricks has created Lakehouse for FS Blueprints to better incorporate key security and compliance policies directly into the deployment configuration.

FSIs that have adopted the Databricks Lakehouse Platform have already established a set of standard security best practices in the market. Banking, insurance and capital markets firms demand features such as secure connectivity (no public IPs), secure communication over the cloud backbone (for example, AWS PrivateLink), and well-defined data isolation between groups of users. In our Terraform templates, we have codified all of these best practices for automated deployment.

Data governance

As many FSIs build out their data lakehouse, they're able to democratize their data and make it accessible throughout the organization. At the same time, FSIs must know how sensitive data is processed and be able to control and audit access to it. To govern data lakes, administrators have often relied on cloud-vendor-specific security controls, such as IAM roles or role-based access control (RBAC), and on file-oriented access control. The blueprints assume that groups are needed to restrict access to certain data classifications, and they encode these groups in the workspace setup.
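As a rough illustration of this kind of group-based restriction, the following Python sketch maps groups to the data classifications they may read. The group names, the classification labels and the `can_read` helper are all hypothetical and are not taken from the blueprints, which configure groups through Terraform rather than application code.

```python
from enum import Enum


class Classification(Enum):
    """Hypothetical sensitivity levels for data sets."""
    PUBLIC = 1
    INTERNAL = 2
    PII = 3


# Hypothetical persona groups and the classifications each may read.
# These names are assumptions for illustration, not the blueprint's groups.
GROUP_ACCESS = {
    "data_engineers": {Classification.PUBLIC, Classification.INTERNAL, Classification.PII},
    "analysts": {Classification.PUBLIC, Classification.INTERNAL},
    "contractors": {Classification.PUBLIC},
}


def can_read(groups, classification):
    """Return True if any of the user's groups may read the classification."""
    return any(classification in GROUP_ACCESS.get(group, set()) for group in groups)


# An analyst alone cannot read PII; membership in a privileged group allows it.
print(can_read(["analysts"], Classification.PII))
print(can_read(["analysts", "data_engineers"], Classification.PII))
```

Keeping the mapping in one table makes it easy to audit which personas can reach sensitive data, and unknown groups are denied by default.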

Note that Databricks has launched Unity Catalog in public preview, which brings fine-grained governance and security to lakehouse data using a familiar, open interface. Unity Catalog lets organizations manage fine-grained data permissions using standard ANSI SQL or a simple UI, enabling them to safely open their lakehouse for broad internal consumption. It works uniformly across clouds and data types. Finally, it goes beyond managing tables to govern other types of data assets, such as machine learning (ML) models and files. Thus, enterprises get a simple way to govern all their data and artificial intelligence (AI) assets. The Lakehouse for FS Blueprints will be updated to incorporate Unity Catalog when it is generally available.
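To make the SQL-based permission model more concrete, here is a small Python sketch that builds `GRANT` statements of the kind used to give a group read access to a table. The catalog, schema, table and group names are hypothetical, and the `grant_select` helper only produces strings; it does not connect to a workspace or execute anything.

```python
def grant_select(table, group):
    """Build a GRANT statement giving a group read access to a table."""
    # Backticks quote the group name so names containing hyphens stay valid.
    return f"GRANT SELECT ON TABLE {table} TO `{group}`"


# Hypothetical three-level table names (catalog.schema.table) and groups.
grants = [
    grant_select("finance.reporting.trades", "analysts"),
    grant_select("finance.reporting.customers", "pii-approved"),
]

for statement in grants:
    print(statement)
```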

Financial services quickstarts

We often hear that data teams and data leaders need to deliver value in weeks, not months or years. Data teams will often spend many weeks understanding the problem before acquiring, integrating, and transforming the data. Only then can the data team begin developing, optimizing, and deploying models into production. The lag between identifying the need and seeing results, with potential solutions to work through and an implementation to finalize along the way, takes away momentum from even the most important data science initiatives.

To help our customers overcome these challenges, Databricks created Python libraries that help accelerate use cases in Financial Services. As part of the Lakehouse for FS Blueprints, we've pre-installed these libraries on a standard cluster, starting with two quickstarts to help enterprises get up to speed with best practices:

- Waterbear: Waterbear can interpret enterprise-wide data models (such as regulatory reporting) and pre-provision tables, processes and data quality rules that accelerate the ingestion of production data and the development of production workflows. This allows FSIs to deploy their Lakehouse for Financial Services with resilient data pipelines and minimal development overhead. For more information, read this blog.

- Tempo: Tempo is a set of time-series utilities that make time-series processing simpler on Databricks. By combining versatile tick data, reliable data pipelines and Tempo, FSIs can address a variety of use cases at minimal cost and with fast execution cycles. For more information, read this blog.
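One operation commonly needed with tick data is the as-of join, which attaches to each trade the most recent quote at or before the trade's timestamp. The sketch below shows the idea in plain Python so it can run anywhere. It is not Tempo's API, and the `asof_join` helper and sample data are hypothetical.

```python
from bisect import bisect_right


def asof_join(trades, quotes):
    """Attach to each trade the latest quote at or before its timestamp.

    trades: list of (timestamp, trade_price) tuples
    quotes: list of (timestamp, quote_price) tuples, sorted by timestamp
    """
    quote_times = [ts for ts, _ in quotes]
    joined = []
    for ts, price in trades:
        # bisect_right gives the index of the first quote strictly after ts,
        # so the quote just before that index is the latest one at or before ts.
        idx = bisect_right(quote_times, ts)
        quote_price = quotes[idx - 1][1] if idx > 0 else None
        joined.append((ts, price, quote_price))
    return joined


# Hypothetical sample data for one symbol; timestamps are seconds since open.
quotes = [(1, 100.0), (5, 100.5), (9, 101.0)]
trades = [(0, 99.9), (5, 100.4), (7, 100.6)]

for row in asof_join(trades, quotes):
    print(row)
```

A trade that arrives before any quote gets `None` rather than a guessed value. A production version would also partition by symbol and run on distributed data, which this sketch leaves out.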

This is only the beginning. As we continue to build out our portfolio and create standardized libraries for our existing Solution Accelerators, we will add new libraries and preconfigured clusters to the Lakehouse for FS Blueprints to provide an ever-growing set of key capabilities for our FS customers.

Key benefits of Lakehouse for FS Blueprints

Built for Financial Services

Lakehouse for FS Blueprints is designed specifically to support the compliance and security needs of Financial Services. Key security and governance controls are implemented out of the box, based on best practices and patterns that we've seen across 600+ customers. You have a starting point that you can build upon to configure additional policies as required.

Save time and resources through automation

With the Lakehouse for FS Blueprints, you don't need to spend lots of time configuring Databricks. Instead, build upon an open source deployment framework and focus on the customizations that are unique to your company. Developers can move faster, and data migrations are less time-consuming. You can now be up and running within a matter of minutes, instead of weeks or months.

Acceleration to value

Preconfigured clusters simplify and accelerate the deployment of core FS use cases, allowing you and your business stakeholders to get to value faster. The pre-installed libraries reduce the time you spend in a variety of areas, including data engineering, schema development, and model development. To address even more use cases, check out the Databricks Solution Accelerators, which you can easily download and import into your workspace.

Multi-cloud

Multi-cloud adoption is gaining momentum, and Gartner predicts that by 2022, 75% of enterprise customers using cloud infrastructure as a service (IaaS) will adopt a deliberate multi-cloud strategy. With that in mind, Databricks has created the Lakehouse for FS Blueprints for each major public cloud — AWS, Azure and GCP. You can avoid duplication of best practices across clouds and better ensure consistent deployment of the lakehouse across your clouds.

Getting Started

The Terraform modules can be downloaded from the Databricks GitHub repository under the project for Lakehouse for FS Blueprints, and more detailed documentation for the Terraform provider is also available.

We've also included an instructional video that provides a step-by-step tutorial for deploying the Lakehouse for FS in a matter of minutes.

Lakehouse for FS Blueprints will continue to evolve as we incorporate new solution accelerators and Databricks capabilities, such as Unity Catalog for governance and Delta Sharing, as they become generally available. Stay tuned for related blog posts and for the launch of new blueprints covering additional industries.

Read more

- Lakehouse for Financial Services

- Lakehouse for Financial Services: Data-Driven Innovation in FSIs

- Modernizing Investment Data Platforms - Tempo

- Regulatory Reporting
