The Best Data Warehouse Is a Lakehouse

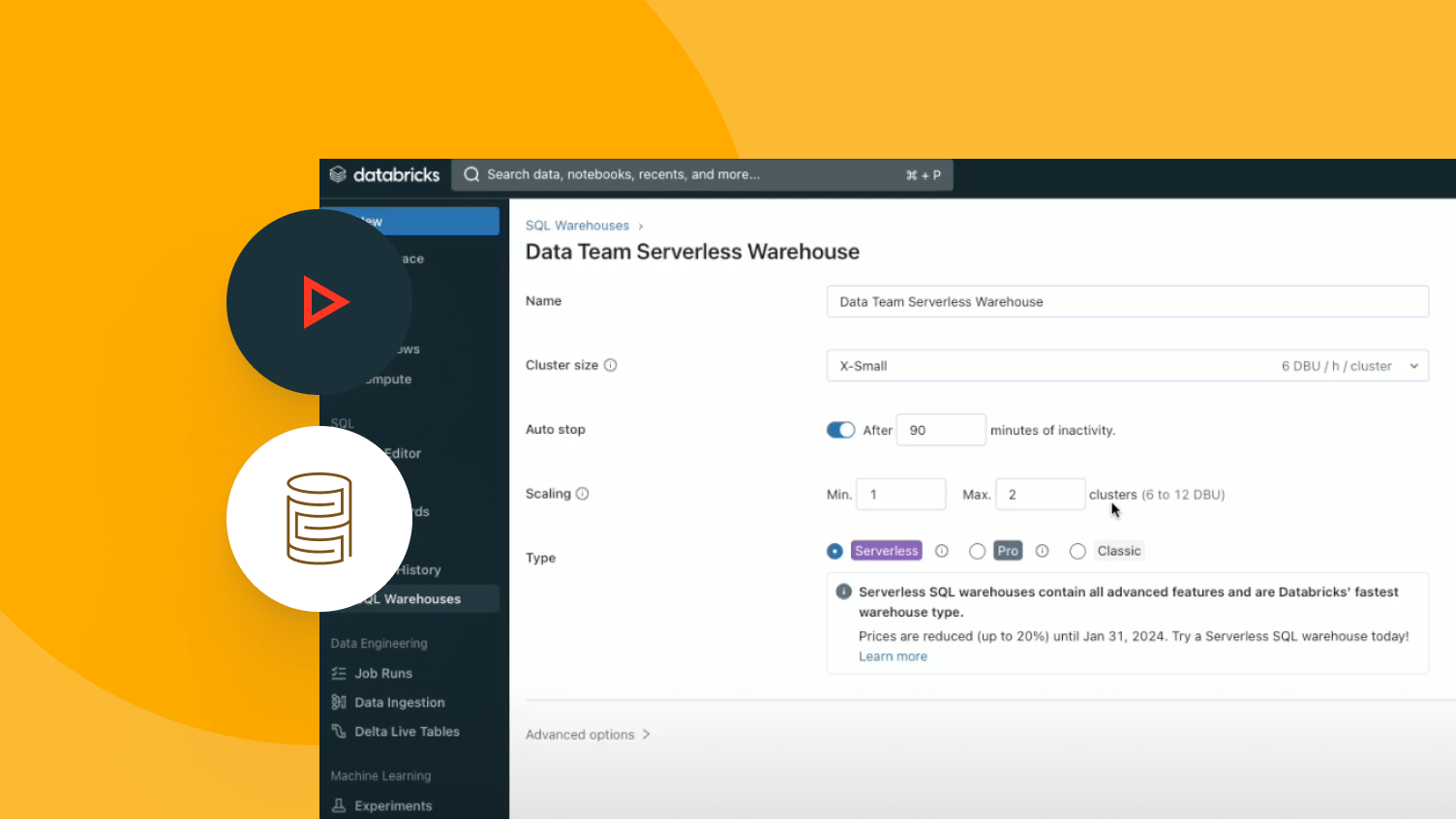

Eliminate legacy warehouse costs and lower TCO with a serverless intelligent data warehouse

TOP TEAMS SUCCEED WITH AN INTELLIGENT DATA WAREHOUSE

AI-powered performance and simplicity

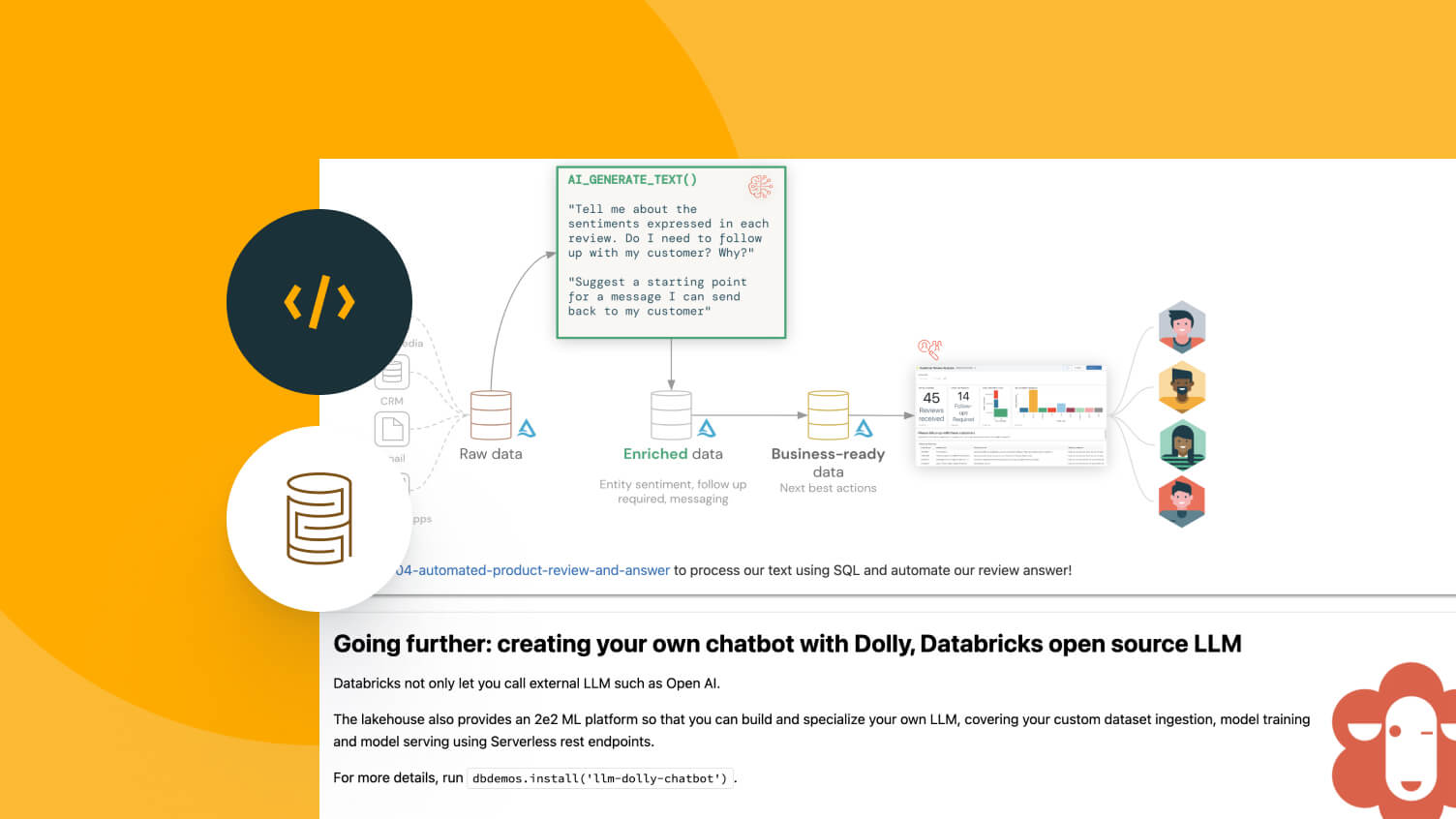

Eliminate the cost and complexity of legacy data warehouses with Databricks SQL, a serverless data warehouse built on lakehouse architecture that natively integrates AI.

AI-powered lakehouse

Less tuning your data warehouses, more answers from your data. All with market-leading price/performance.

Democratize analytics and insights across your organization. Accurately answer your data questions, because AI/BI understands your data and continuously improves.

More features

An open, unified approach to your data, BI and AI workloads

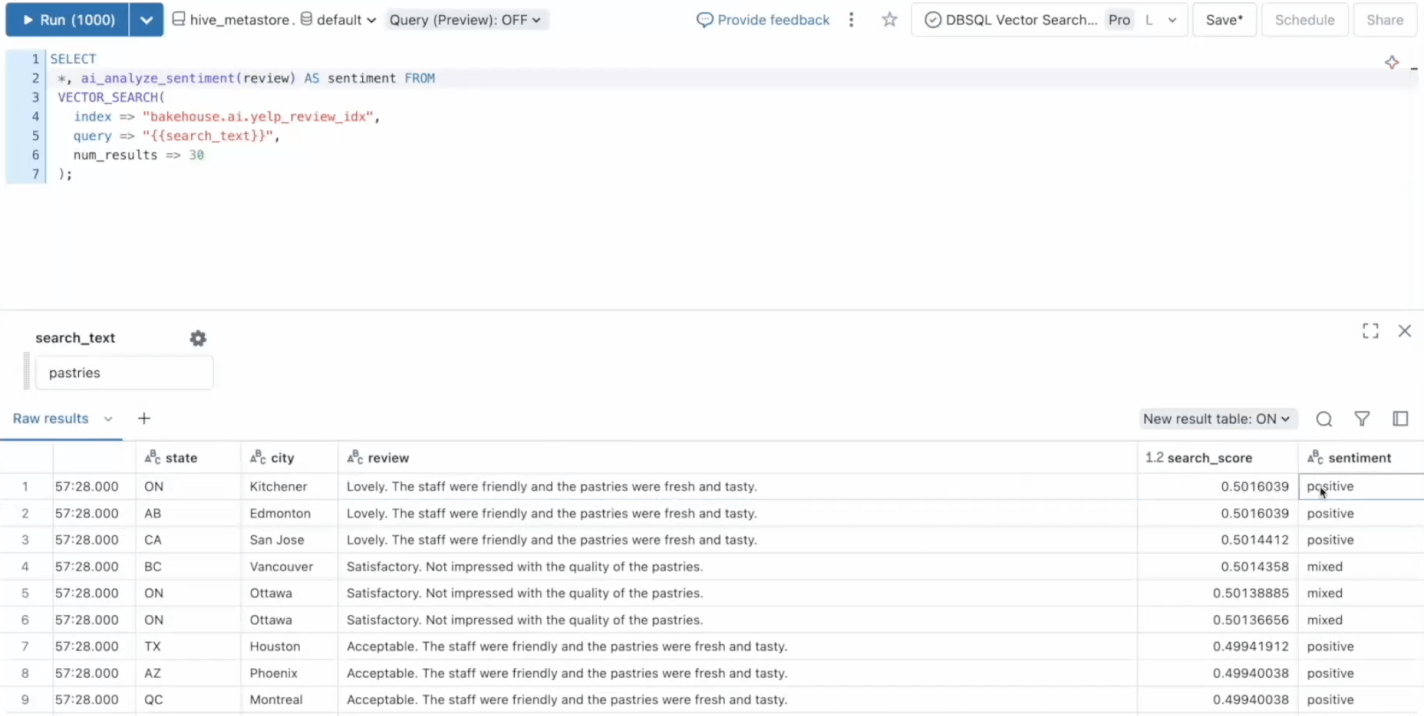

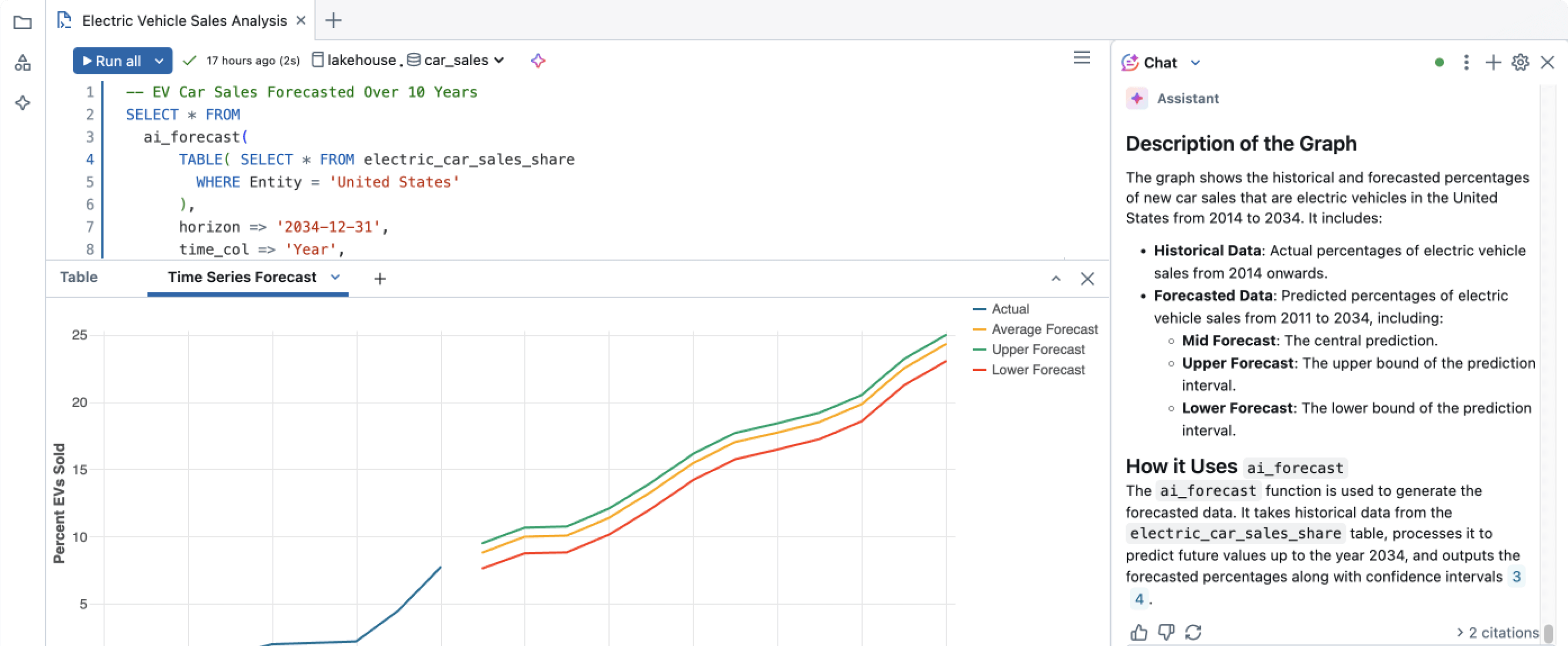

Empower anyone to extract insights with SQL queries

Empower data analysts and business users to answer questions independently while freeing up data professionals for more complex tasks

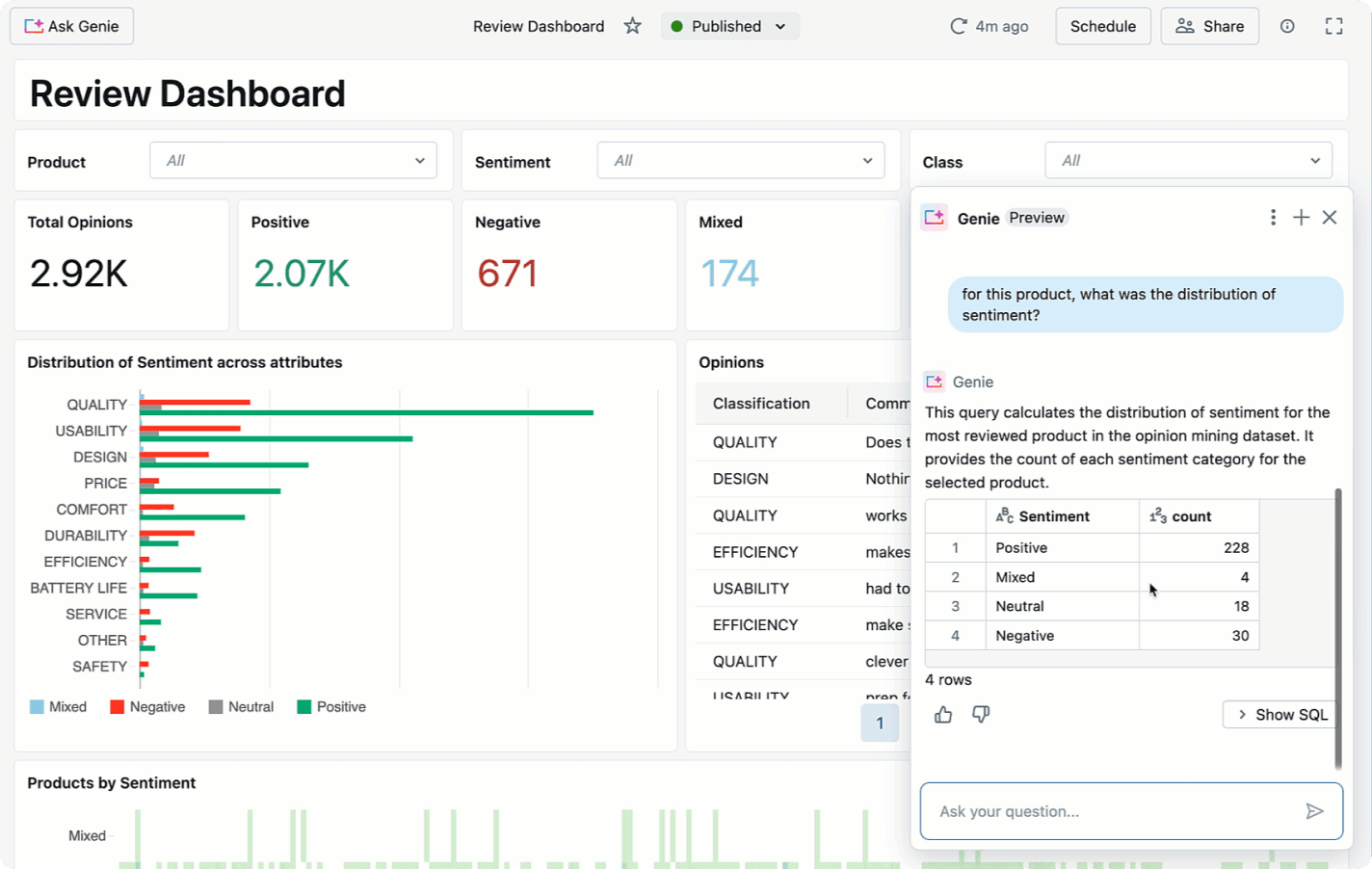

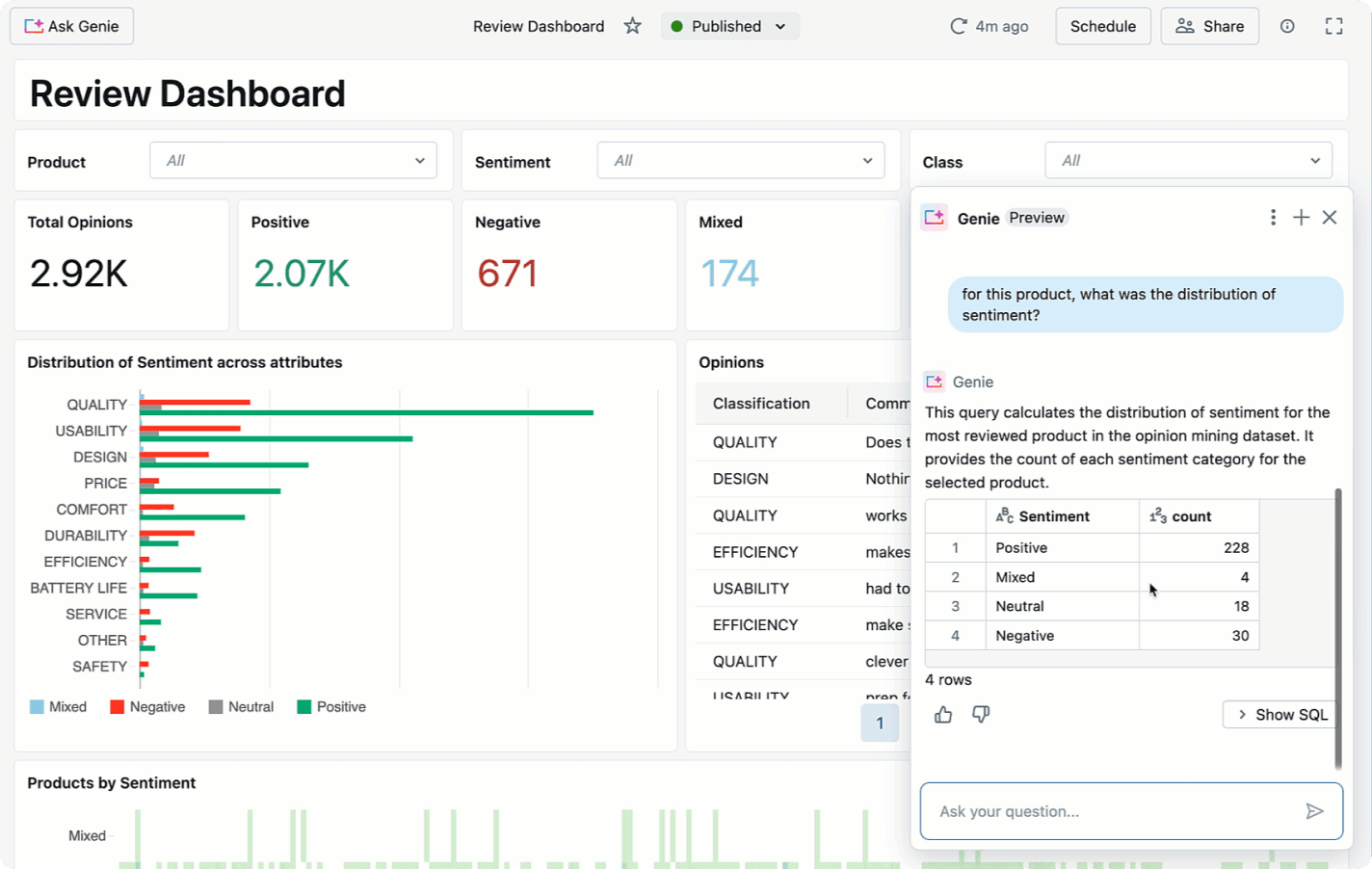

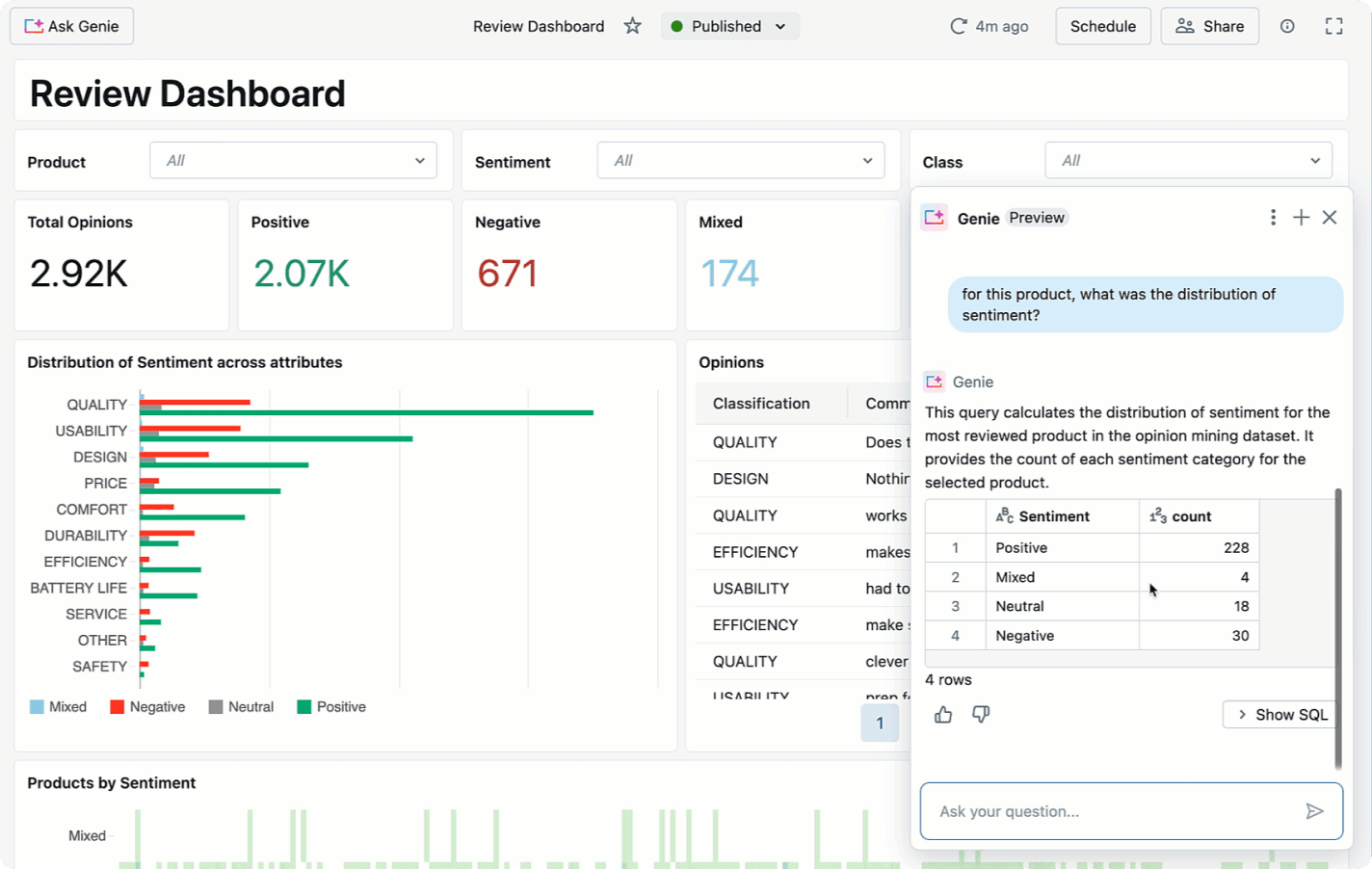

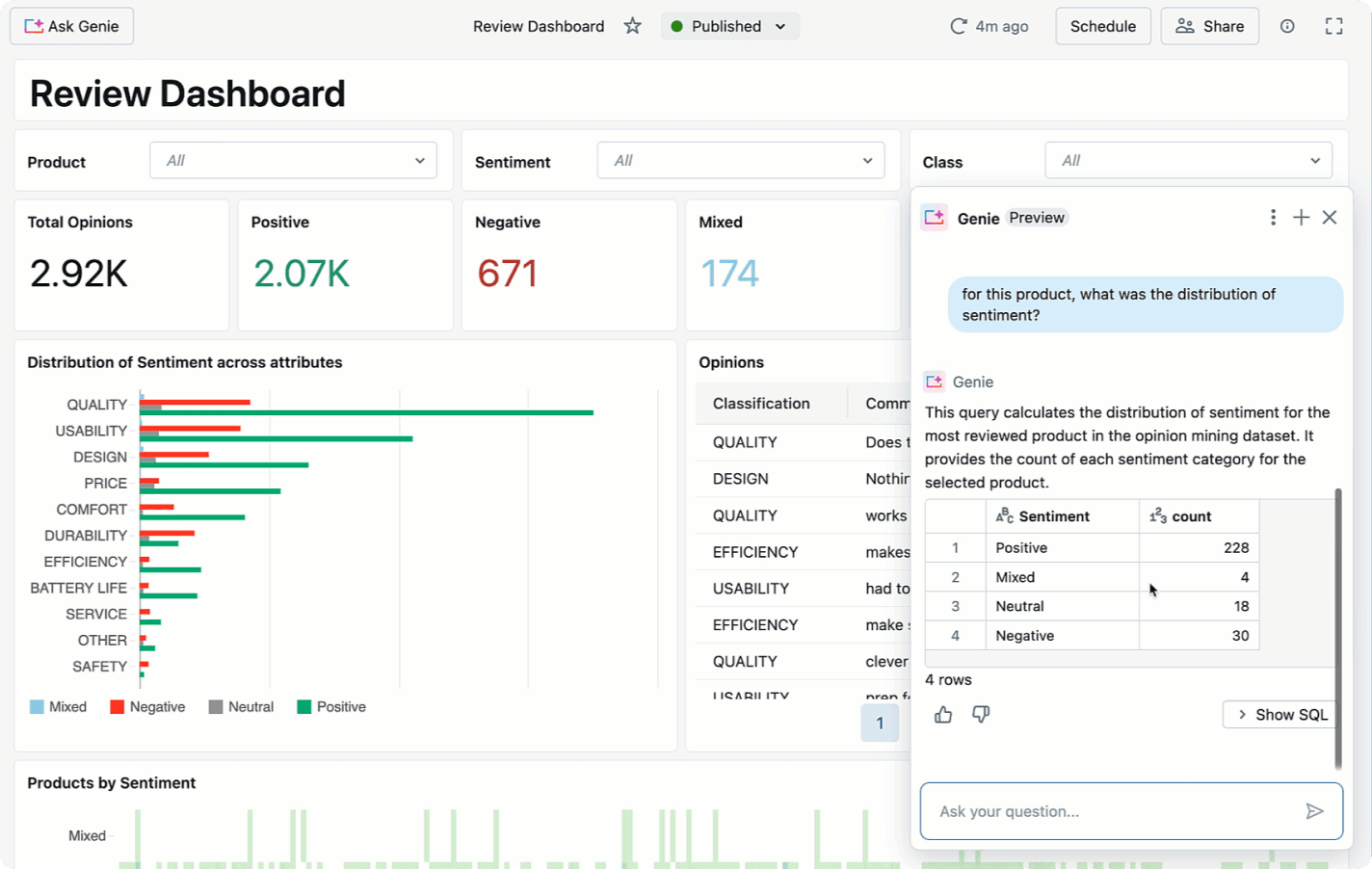

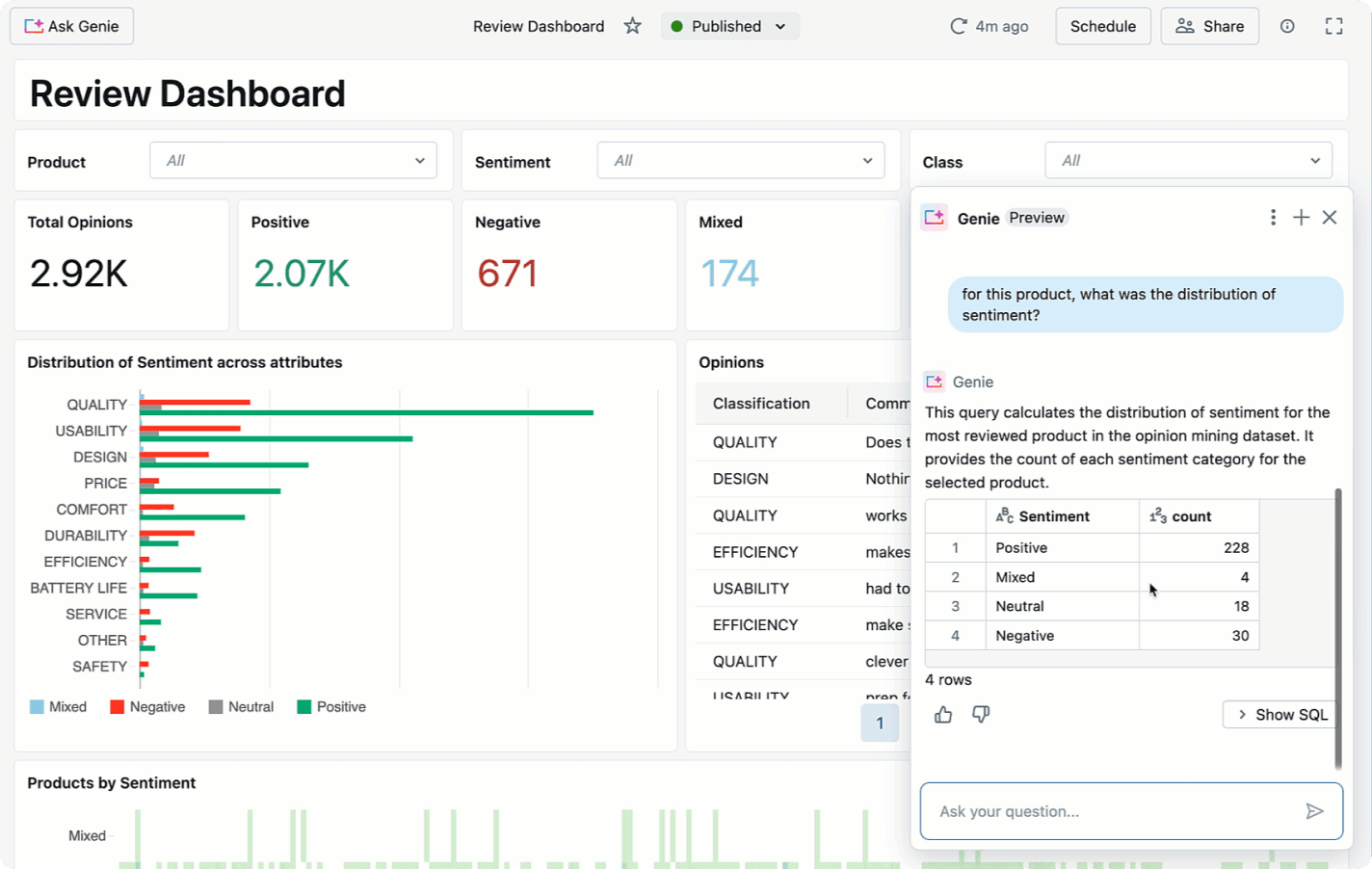

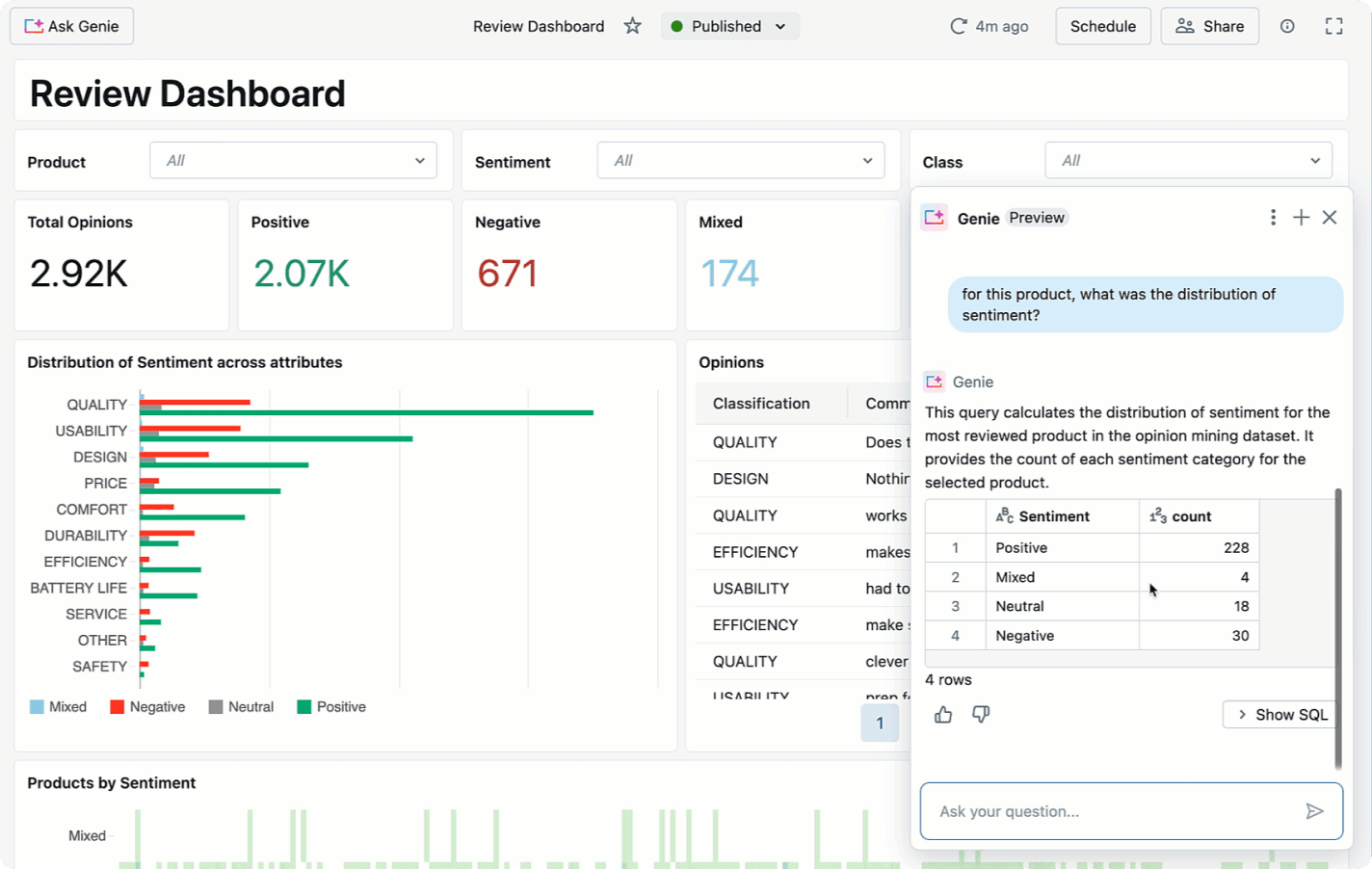

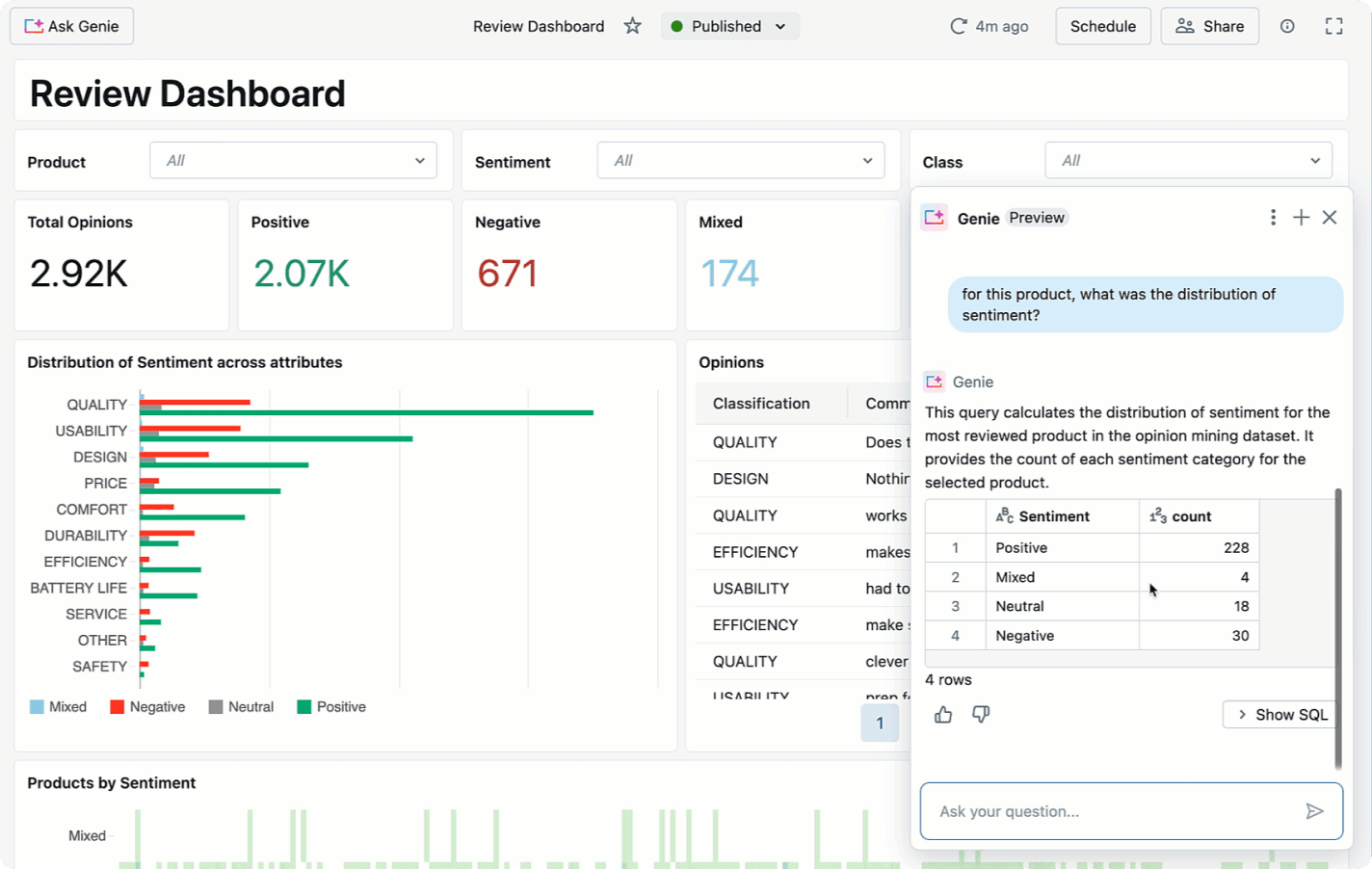

- AI-powered dashboards

- Conversational interfaces to chat with your data

- An evolving system that gets smarter every time you use it

Explore Databricks SQL Demos

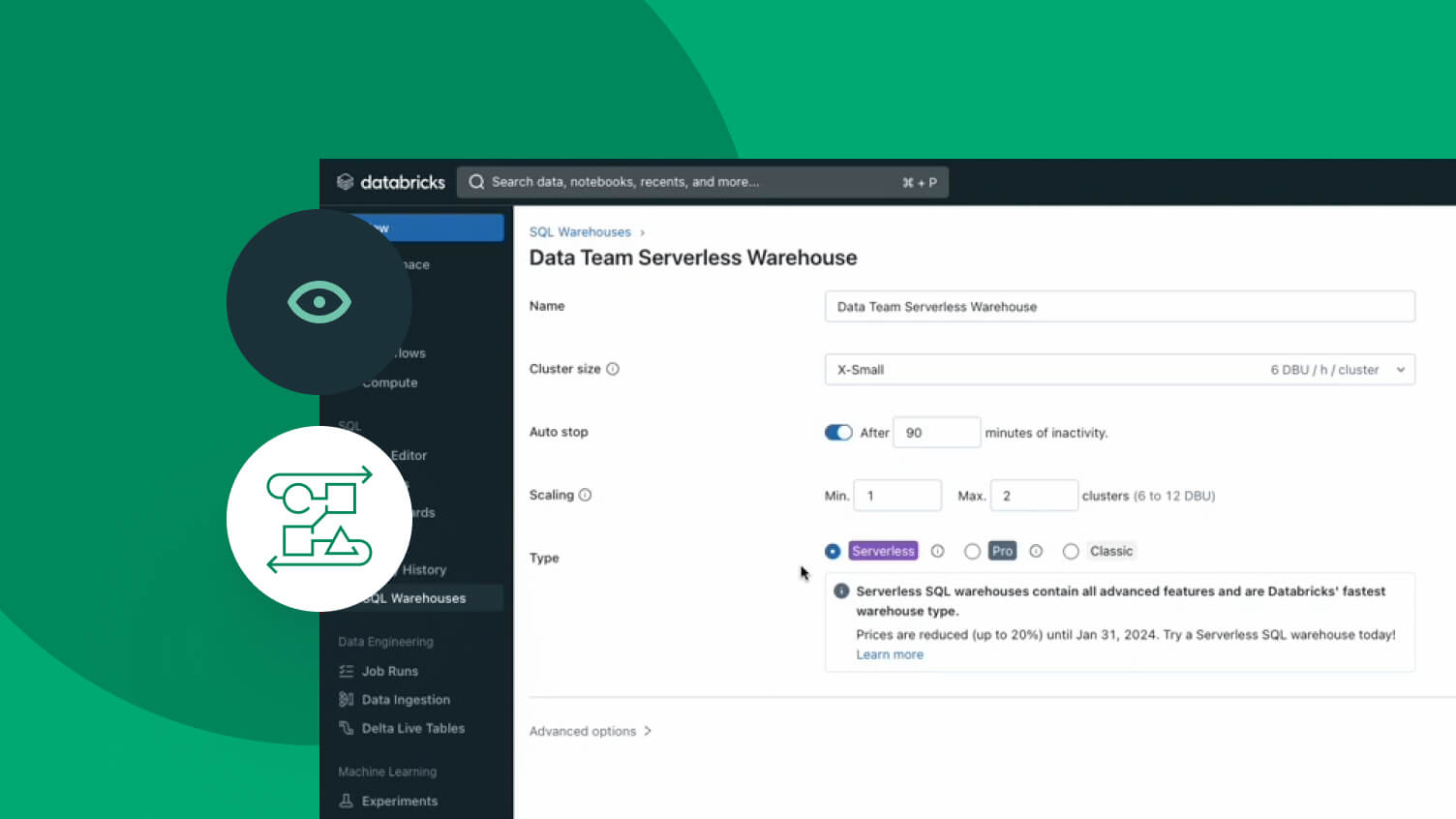

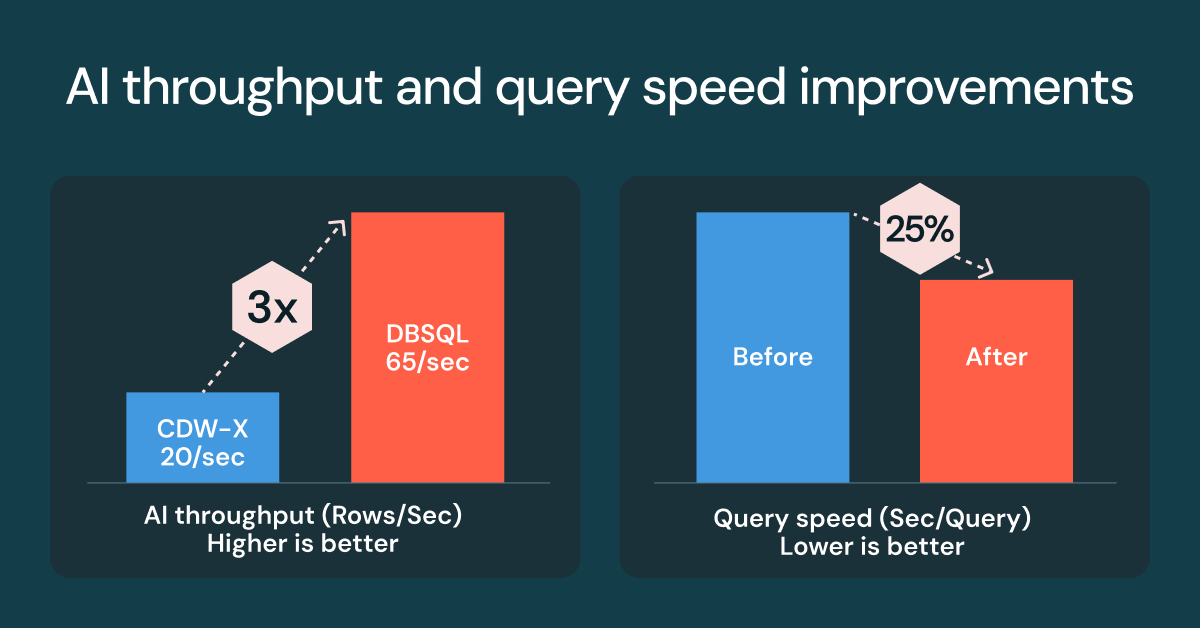

AI-optimized performance

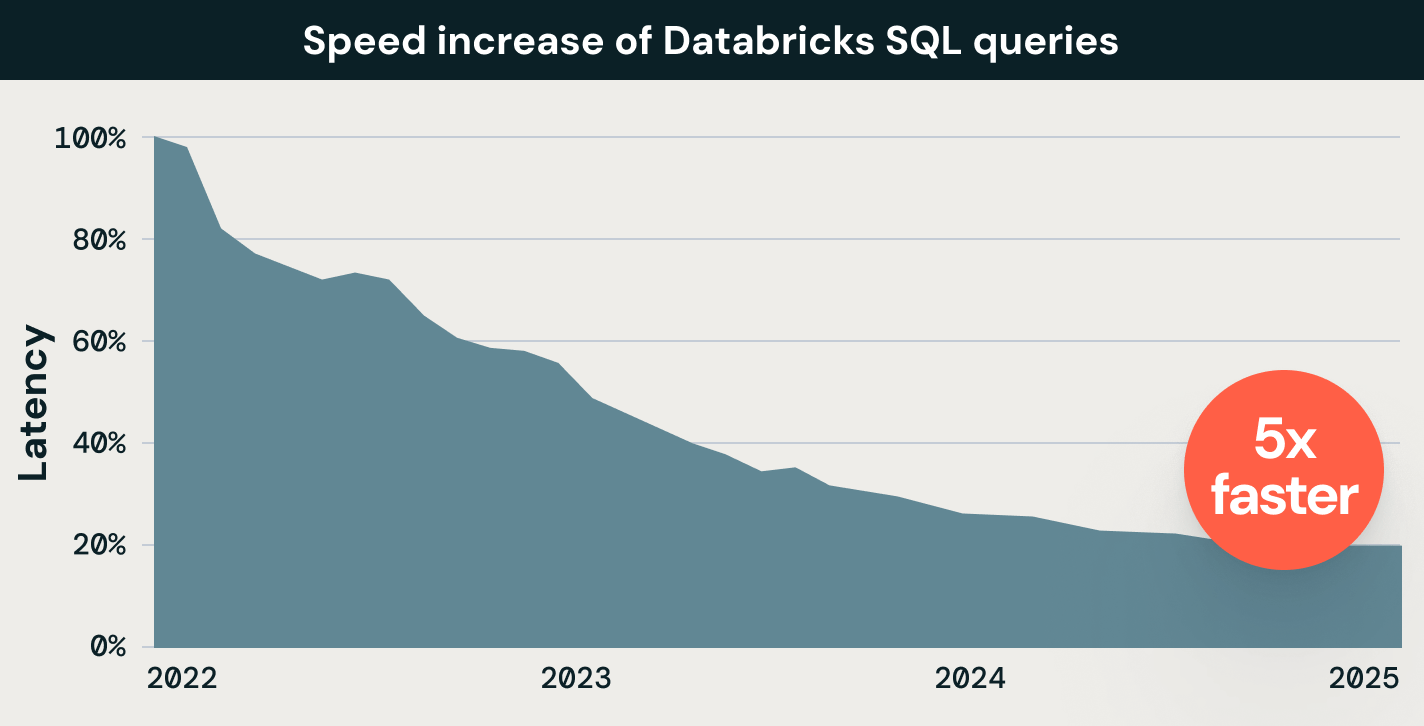

Databricks SQL uses AI systems throughout the platform that analyze your workloads and automatically improve efficiency and performance. Customer queries are already 5x faster than they were three years ago.

- 9x better ETL price/performance

- The Photon query engine for extremely fast query performance at low cost

- Process large numbers of queries quickly and cost-effectively with intelligent workload management

- Automatically rewrite data files to improve data layout

- Maintain both high performance and high BI query concurrency

- 4x improvement on throughput using ai_query()

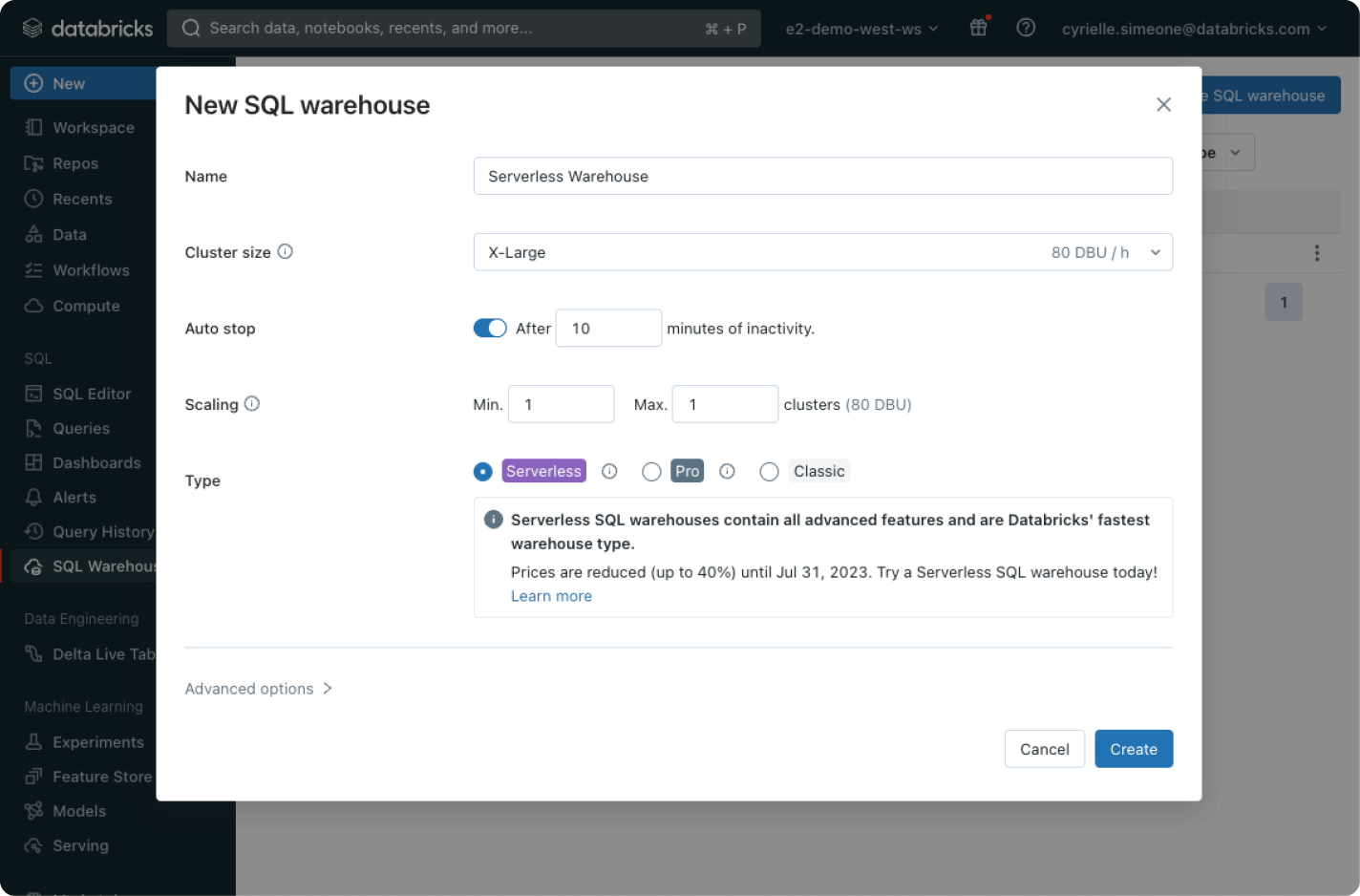

Usage-based pricing keeps spending in check

Only pay for the products you use at per-second granularity.
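To make per-second granularity concrete, here is a minimal arithmetic sketch. The hourly rate and the function names are hypothetical, chosen only for illustration; they are not Databricks prices or APIs. The sketch compares a charge prorated to the second with one rounded up to whole hours.

```python
import math


def per_second_cost(seconds_used: int, rate_per_hour: float) -> float:
    """Cost when usage is prorated to the second (hypothetical rate)."""
    if seconds_used < 0 or rate_per_hour < 0:
        raise ValueError("seconds_used and rate_per_hour must be non-negative")
    return rate_per_hour * seconds_used / 3600


def hourly_rounded_cost(seconds_used: int, rate_per_hour: float) -> float:
    """Cost when any started hour is billed in full, for comparison."""
    if seconds_used < 0 or rate_per_hour < 0:
        raise ValueError("seconds_used and rate_per_hour must be non-negative")
    return rate_per_hour * math.ceil(seconds_used / 3600)


if __name__ == "__main__":
    # Assumed example: a 90-second query at a made-up rate of 3.60 per hour.
    print(round(per_second_cost(90, 3.60), 4))
    print(round(hourly_rounded_cost(90, 3.60), 4))
```

Under these assumed numbers, a 90-second workload is charged for 90 seconds of the hourly rate instead of a full hour, which is why short, bursty queries benefit most from fine-grained billing.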

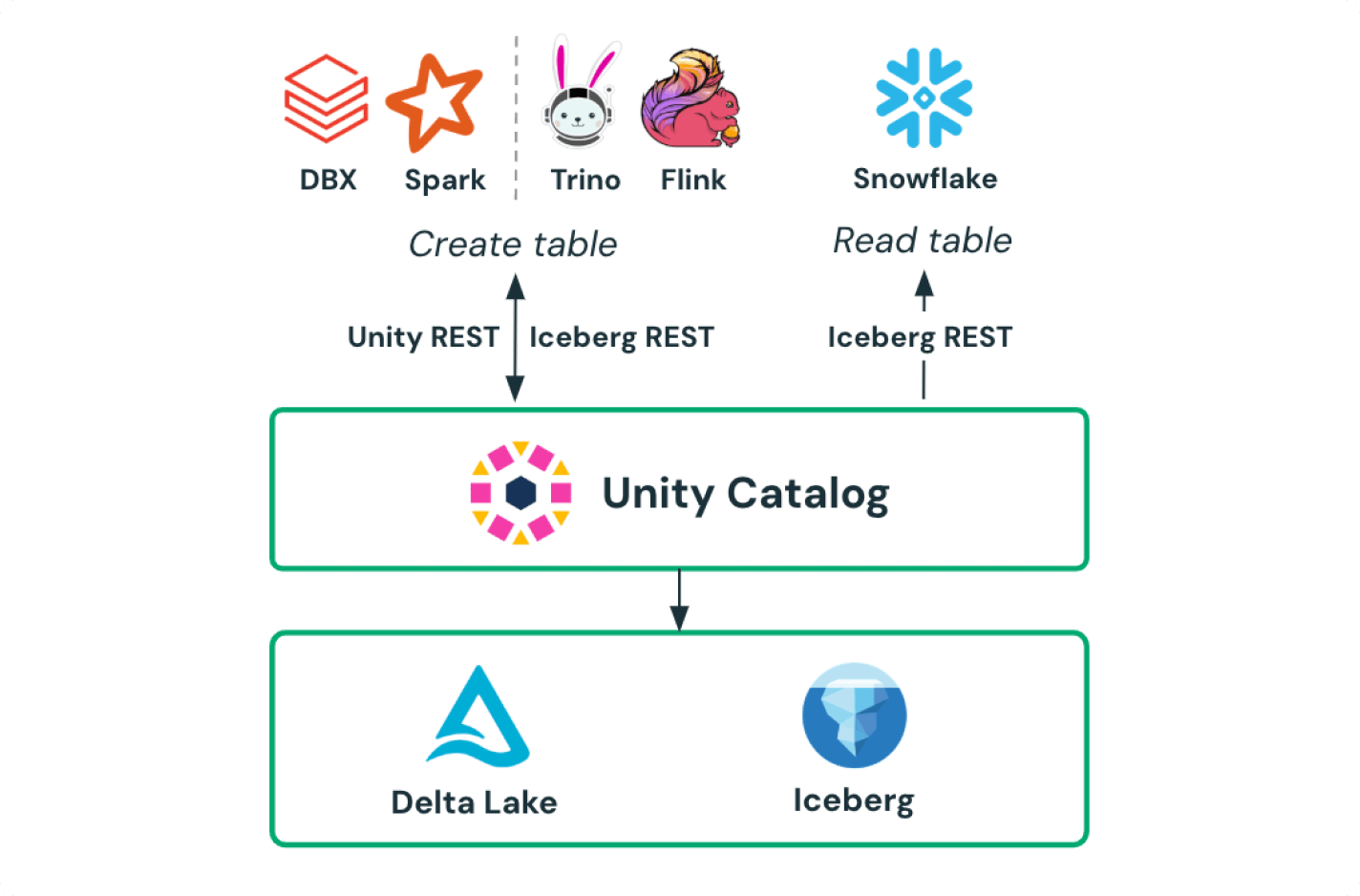

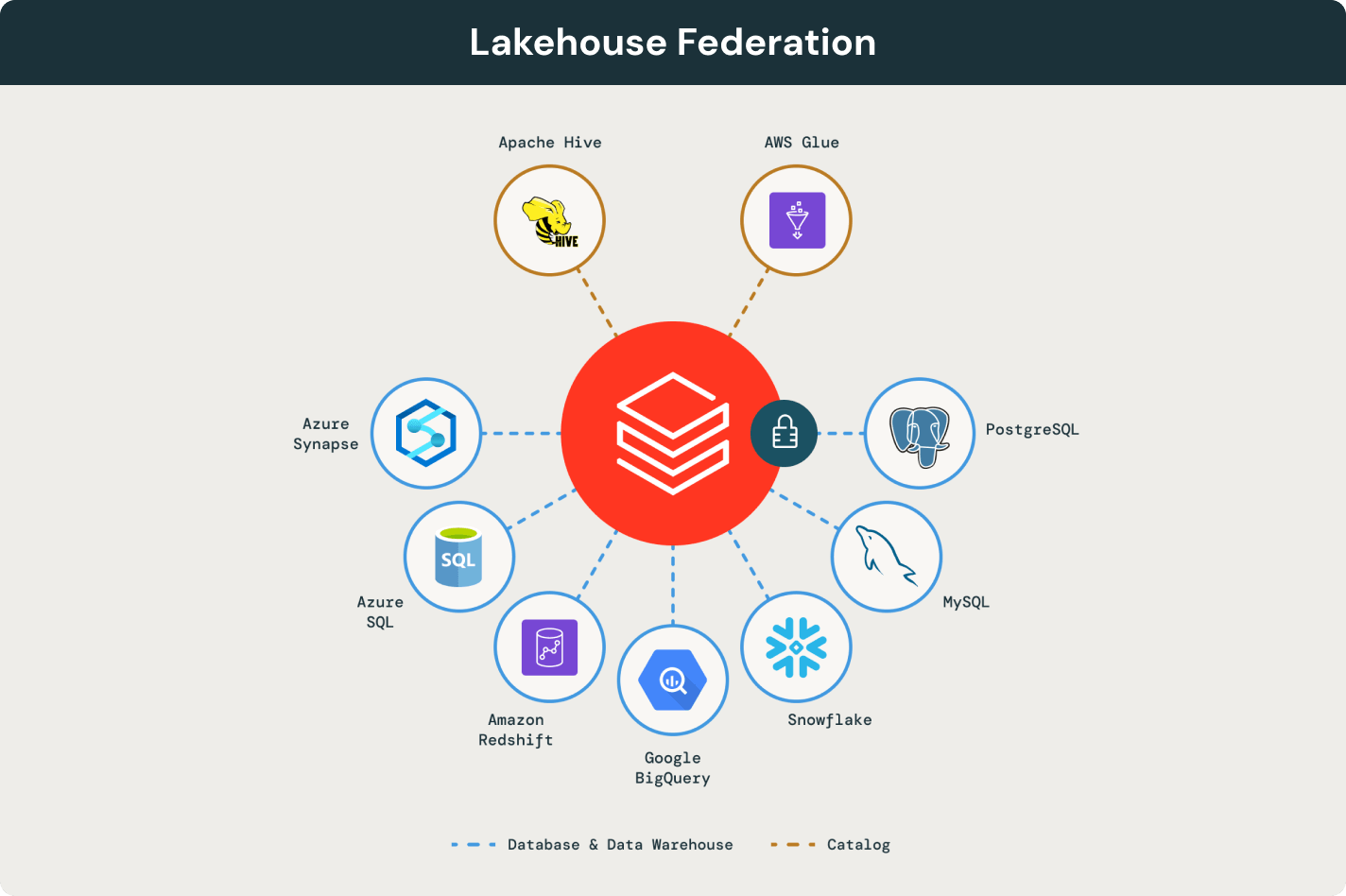

Learn more about the integrated features of Databricks SQL.

Unity Catalog

Unified governance and security to centrally manage assets and access with integrated Unity Catalog.

AI/BI

Dashboards provide a low-code experience for analysts. Genie helps business users ask questions of their data and self-serve their own analytics.

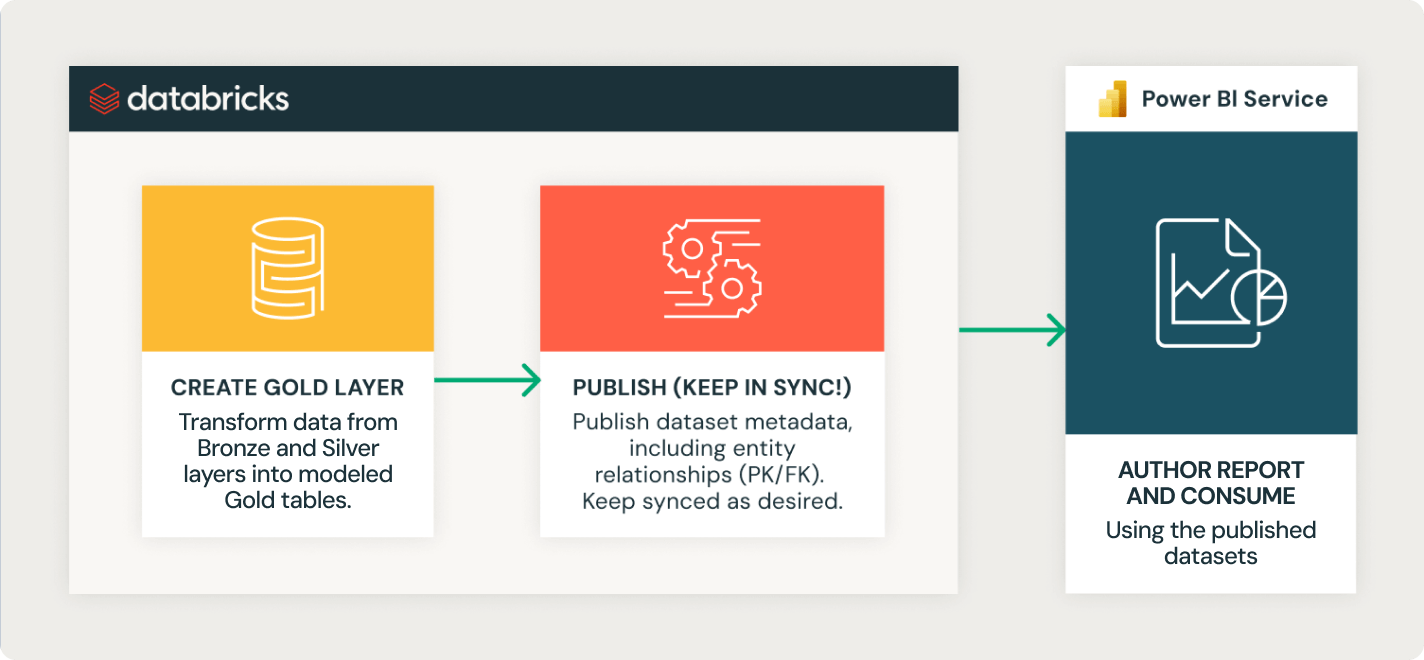

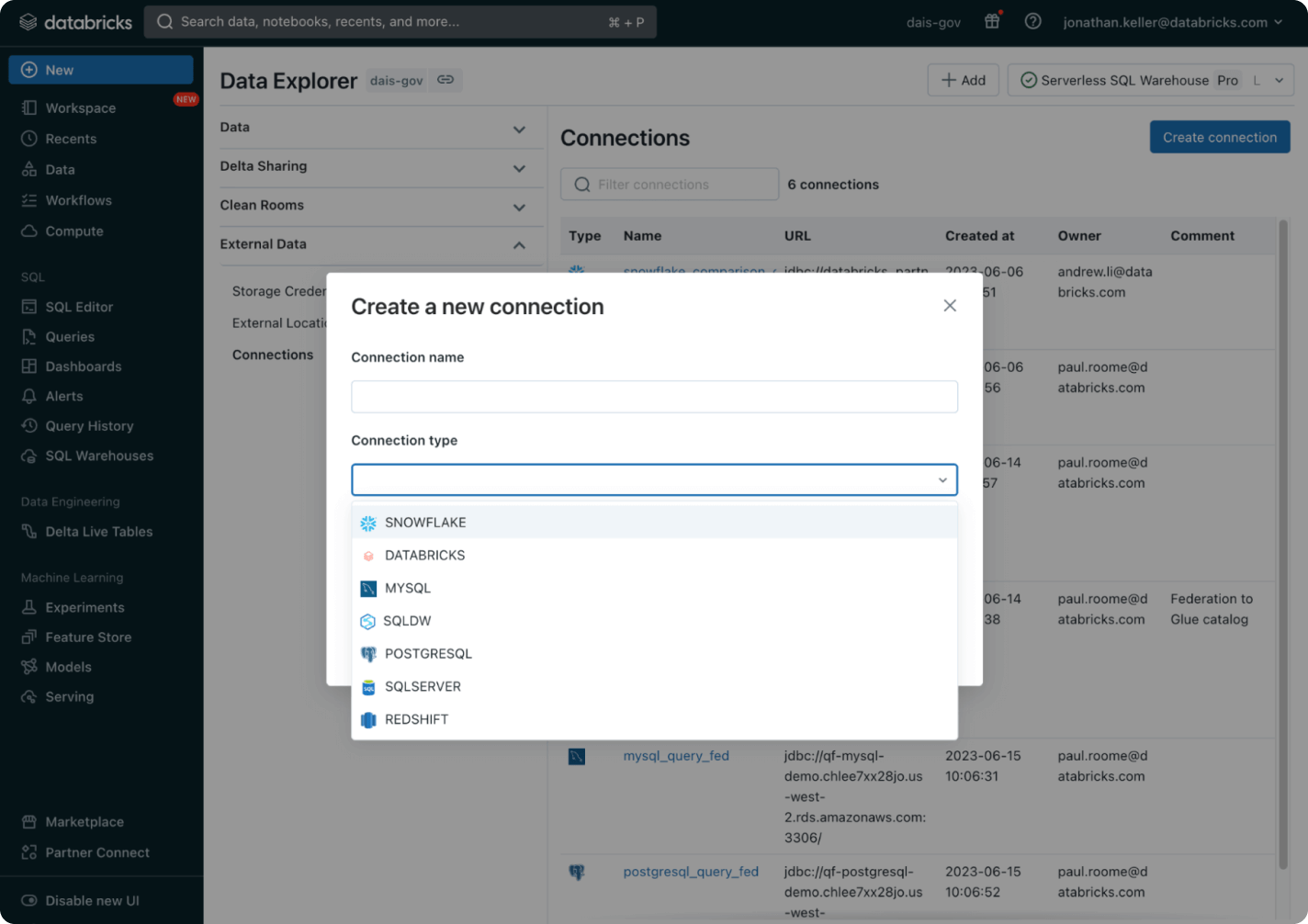

Delta Sharing

The open source approach of Delta Sharing lets you share live data across platforms, clouds and regions without sacrificing strong security and governance.

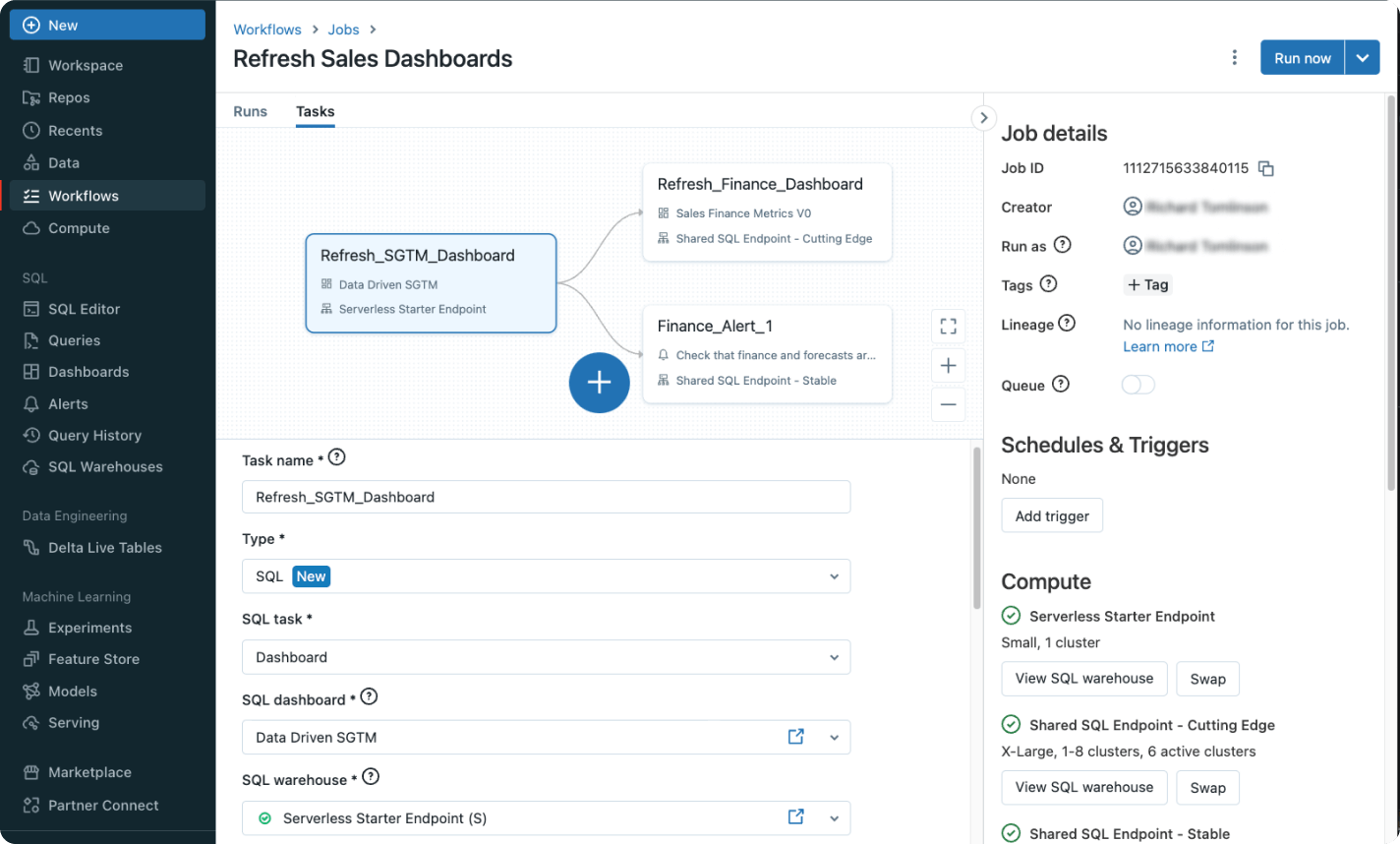

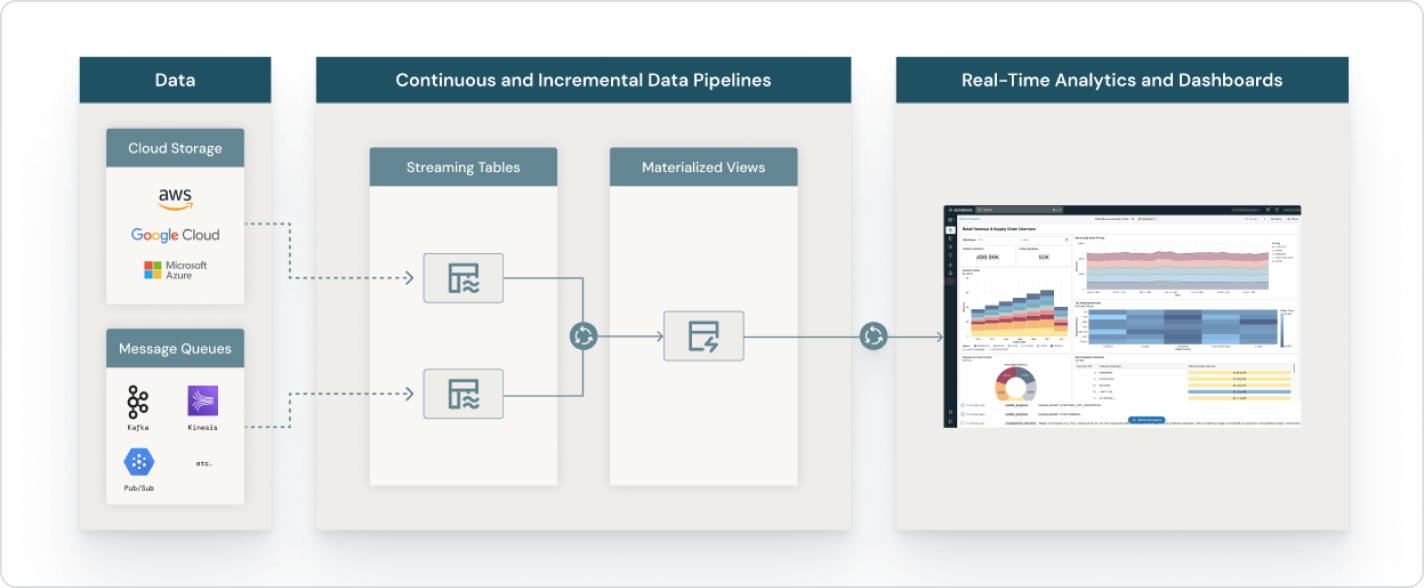

Lakeflow

Define, manage and monitor multitask workflows with Lakeflow Jobs, a managed orchestration service that’s fully integrated into Databricks SQL.

Photon

Photon is the next-generation engine built into Databricks SQL that provides extremely fast query performance at low cost.

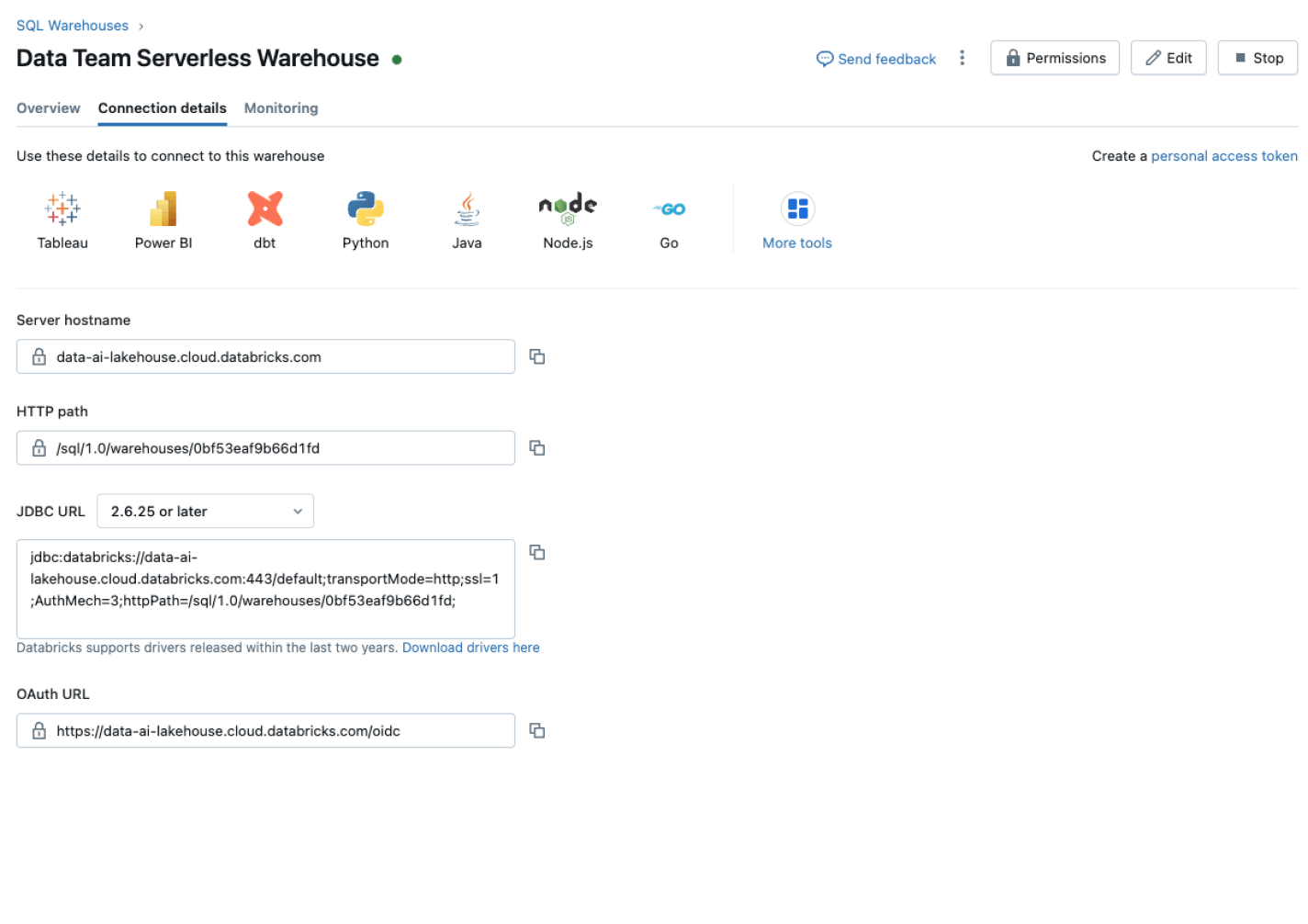

The Databricks Data Intelligence Platform

Explore the full range of tools available on the Databricks Data Intelligence Platform to seamlessly integrate data and AI across your organization.

Take the next step

Ready to become a data + AI company?

Take the first steps in your data transformation