Announcing Terraform Databricks modules

30+ reusable Terraform modules to provision your Databricks Lakehouse platform

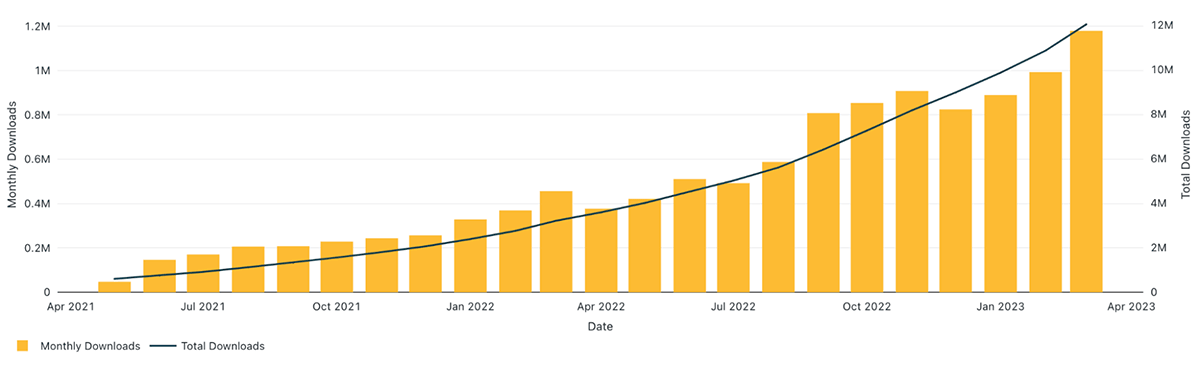

The Databricks Terraform provider has reached more than 10 million installations, a significant increase in adoption since it became generally available less than one year ago.

This milestone shows that many customers use Terraform and the Databricks provider to automate the deployment and management of their Lakehouse Platform infrastructure.

To maintain, manage, and scale their infrastructure, DevOps teams build it from modular, reusable components called Terraform modules. Terraform modules let you reuse the same components across multiple use cases and environments. They also help enforce a standardized approach to defining resources and adopting best practices across your organization. This consistency not only ensures that best practices are followed, it also helps enforce compliant deployments and avoid accidental misconfigurations, which could lead to costly errors.

Introducing Terraform Databricks modules

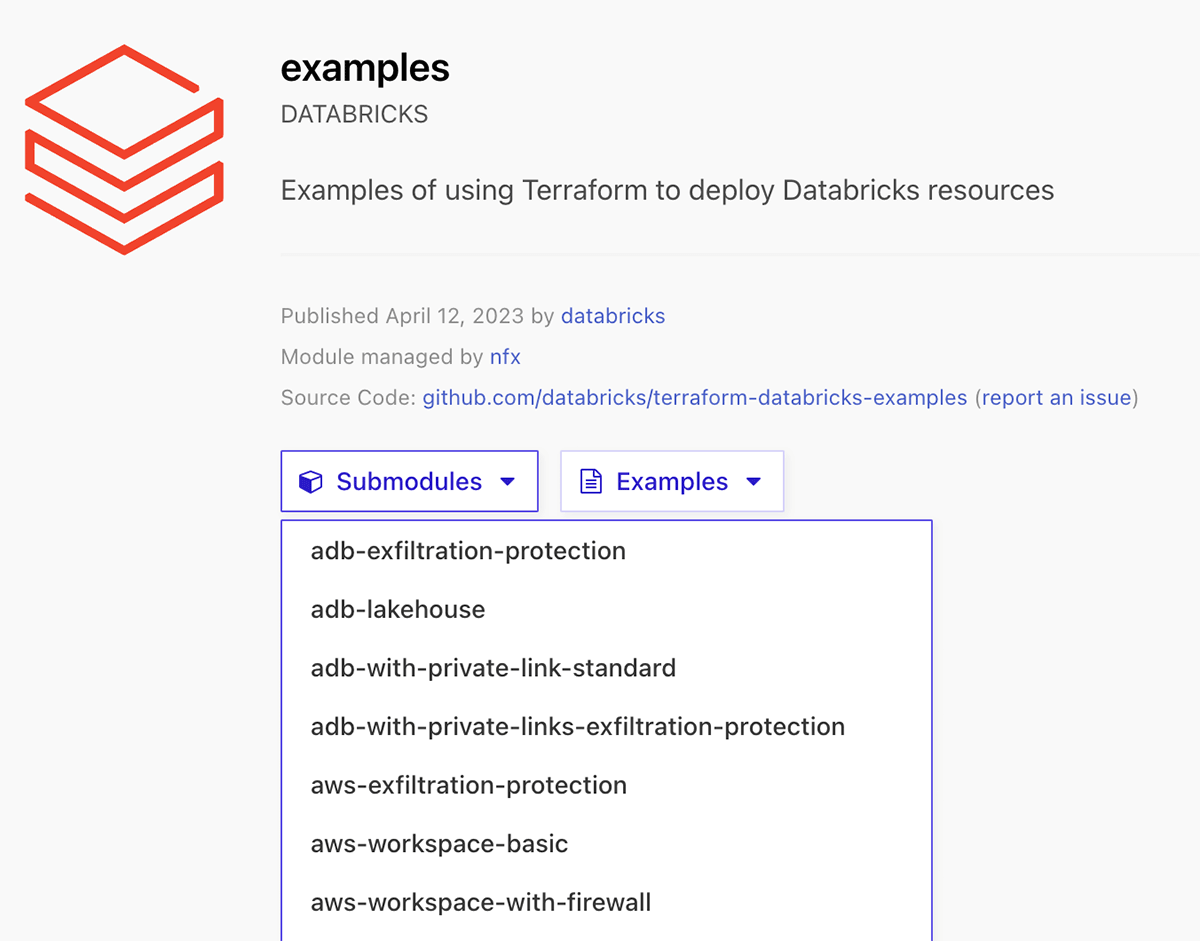

To help customers test and deploy their Lakehouse environments, we're releasing the experimental Terraform Registry modules for Databricks, a set of more than 30 reusable Terraform modules and examples to provision your Databricks Lakehouse platform on Azure, AWS, and GCP using the Databricks Terraform provider. The modules are built from the content of the terraform-databricks-examples GitHub repository.

There are two ways to use these modules:

- Use examples as a reference for your own Terraform code.

- Directly reference a submodule in your Terraform configuration.

The full set of available modules and examples can be found in Terraform Registry modules for Databricks.

Getting started with Terraform Databricks examples

To use one of the available Terraform Databricks modules, follow these steps:

- Reference the module using one of the module source types.

- Add a `variables.tf` file with the required inputs for the module.

- Add a `terraform.tfvars` file and provide a value for each defined variable.

- Add an `output.tf` file with the module outputs.

- (Strongly recommended) Configure your remote backend.

- Run `terraform init` to initialize Terraform and download the needed providers.

- Run `terraform validate` to validate the configuration files in your directory.

- Run `terraform plan` to preview the resources that Terraform plans to create.

- Run `terraform apply` to create the resources.
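As a rough illustration of the file-related steps above, a minimal configuration might look like the sketch below. The registry address and submodule path, the input names (`location`, `project_name`), the output name (`workspace_url`), and all backend values are assumptions made for illustration; check the registry page of the module you choose for its actual source address, inputs, and outputs.

```hcl
# main.tf

terraform {
  # Remote backend (strongly recommended). Azure Blob Storage is shown as one
  # option; every value here is a placeholder for your own state storage.
  backend "azurerm" {
    resource_group_name  = "tfstate-rg"
    storage_account_name = "tfstatestorage"
    container_name       = "tfstate"
    key                  = "databricks.terraform.tfstate"
  }
}

module "lakehouse" {
  # Assumed registry address and submodule path; confirm on the registry page.
  source = "databricks/examples/databricks//modules/adb-lakehouse"

  # Hypothetical inputs; the real module defines its own required variables.
  location     = var.location
  project_name = var.project_name
}

# variables.tf

variable "location" {
  type        = string
  description = "Azure region to deploy into (hypothetical input)."
}

variable "project_name" {
  type        = string
  description = "Prefix used when naming resources (hypothetical input)."
}

# output.tf

output "workspace_url" {
  # Hypothetical output name; use the outputs the module actually exposes.
  value = module.lakehouse.workspace_url
}

# terraform.tfvars (a separate file; shown commented out so this sketch stays
# valid as a single .tf listing)
# location     = "westeurope"
# project_name = "lakehouse-demo"
```

Keeping values in `terraform.tfvars` rather than in `main.tf` lets the same module call be reused across environments by swapping only the variable file.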

Example walkthrough

In this section we demonstrate how to use the examples provided on the Databricks Terraform Registry modules page. The examples are independent of one another, and each has a dedicated README.md. Here we use the Azure Databricks example adb-vnet-injection to deploy a VNet-injected Databricks workspace with an autoscaling cluster.

Step 1: Authenticate to the providers.

Navigate to providers.tf to check which providers the example uses. Here we need to configure authentication for the Azure provider and the Databricks provider. Read the following docs for detailed information on how to configure authentication to each provider:

- Azurerm provider authentication methods

- Databricks provider authentication methods and Azure documentation
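For orientation, a provider configuration that relies on an existing Azure CLI session might look roughly like the sketch below. This is not the example's actual providers.tf: the `databricks_host` variable is a hypothetical name introduced here so the fragment stands alone, and the example may wire the workspace URL differently.

```hcl
terraform {
  required_providers {
    azurerm = {
      source = "hashicorp/azurerm"
    }
    databricks = {
      source = "databricks/databricks"
    }
  }
}

# With no explicit credentials set, the azurerm provider can use the session
# created by `az login`. Newer azurerm releases also expect a subscription ID,
# supplied as an argument or through the ARM_SUBSCRIPTION_ID environment variable.
provider "azurerm" {
  features {}
}

# Hypothetical variable holding the workspace URL; introduced for illustration.
variable "databricks_host" {
  type        = string
  description = "URL of the Databricks workspace to manage."
}

# With only the host set, the Databricks provider can fall back to Azure CLI
# authentication for an Azure Databricks workspace.
provider "databricks" {
  host = var.databricks_host
}
```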

Step 2: Read through the README of the example and prepare input values.

The identity that you use with az login to deploy this template should have the Contributor role in your Azure subscription, or at least the minimum permissions required to deploy the resources in this template.

Then follow these steps:

- Run `terraform init` to initialize Terraform and download the required providers.

- Run `terraform plan` to see what resources will be deployed.

- Run `terraform apply` to deploy the resources to your Azure environment.

Since we used the `az login` method to authenticate to the providers, you will be prompted to log in to Azure via the browser. Enter `yes` when prompted to deploy resources.
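If you want to override the example's default inputs before running `terraform plan`, one option is a `terraform.tfvars` file along the lines of the sketch below. The variable names and values are assumptions for illustration only; the example's README and variables.tf are the authoritative list of inputs.

```hcl
# terraform.tfvars
# Assumed variable names; verify each against the example's variables.tf.

rglocation       = "westeurope"    # Azure region for the resource group
workspace_prefix = "adb-demo"      # prefix for the workspace name
cidr             = "10.179.0.0/20" # address space for the injected VNet
no_public_ip     = true            # deploy cluster nodes without public IPs
```

Terraform loads a file named `terraform.tfvars` from the working directory automatically, so no extra command-line flag is needed.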

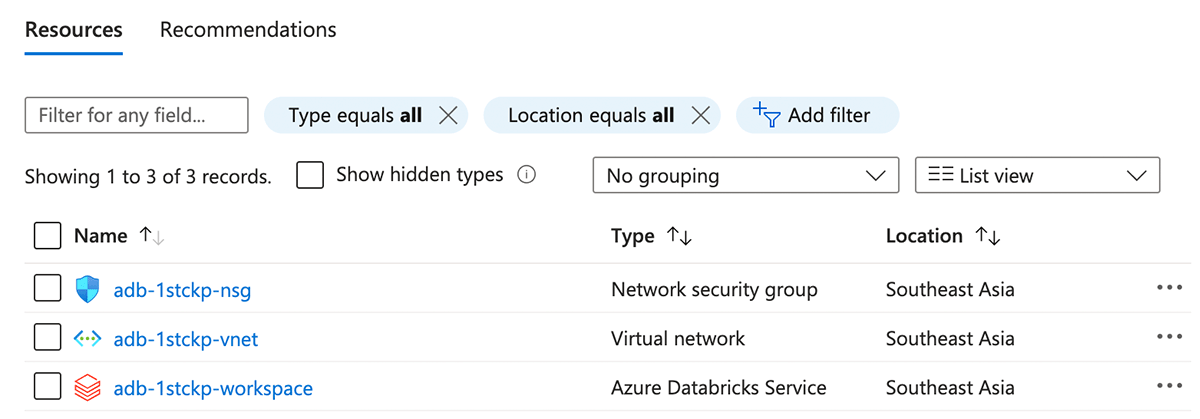

Step 3: Verify that resources were deployed successfully in your environment.

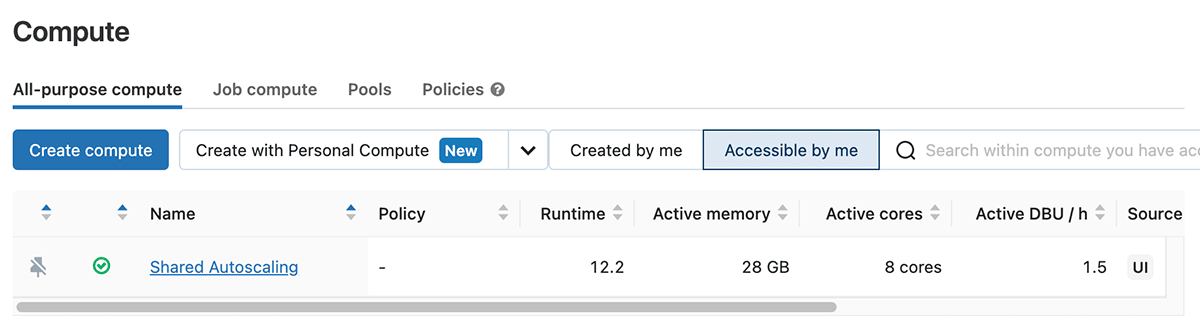

Navigate to the Azure Portal and verify that all resources were deployed successfully. You should now have a VNet-injected workspace with one cluster deployed.

You can find more examples, along with their requirements and provider authentication details, in this repository under /examples.

How to contribute

The terraform-databricks-examples content will be continuously updated with new modules covering different architectures and more features of the Databricks platform.

Note that this is a community project, developed by Databricks Field Engineering and provided as-is. Databricks does not offer official support. To add new examples or modules, you can contribute to the project by opening a pull request. To report a problem, please open an issue in the terraform-databricks-examples repository.

Take a look at the current list of available modules and examples, and try them out to deploy your Databricks infrastructure!