Deep Learning Tutorial Demonstrates How to Simplify Distributed Deep Learning Model Inference Using Delta Lake and Apache Spark™

On October 10th, our team hosted a live webinar—Simple Distributed Deep Learning Model Inference—with Xiangrui Meng, Software Engineer at Databricks.

Model inference, unlike model training, is usually embarrassingly parallel and hence simple to distribute. However, in practice, complex data scenarios and compute infrastructure often make this "simple" task hard to do from data source to sink.

In this webinar, we provided a reference end-to-end pipeline for distributed deep learning model inference using the latest features from Apache Spark and Delta Lake. While the reference pipeline applies to various deep learning scenarios, we focused on image applications, and demonstrated specific pain points and proposed solutions.

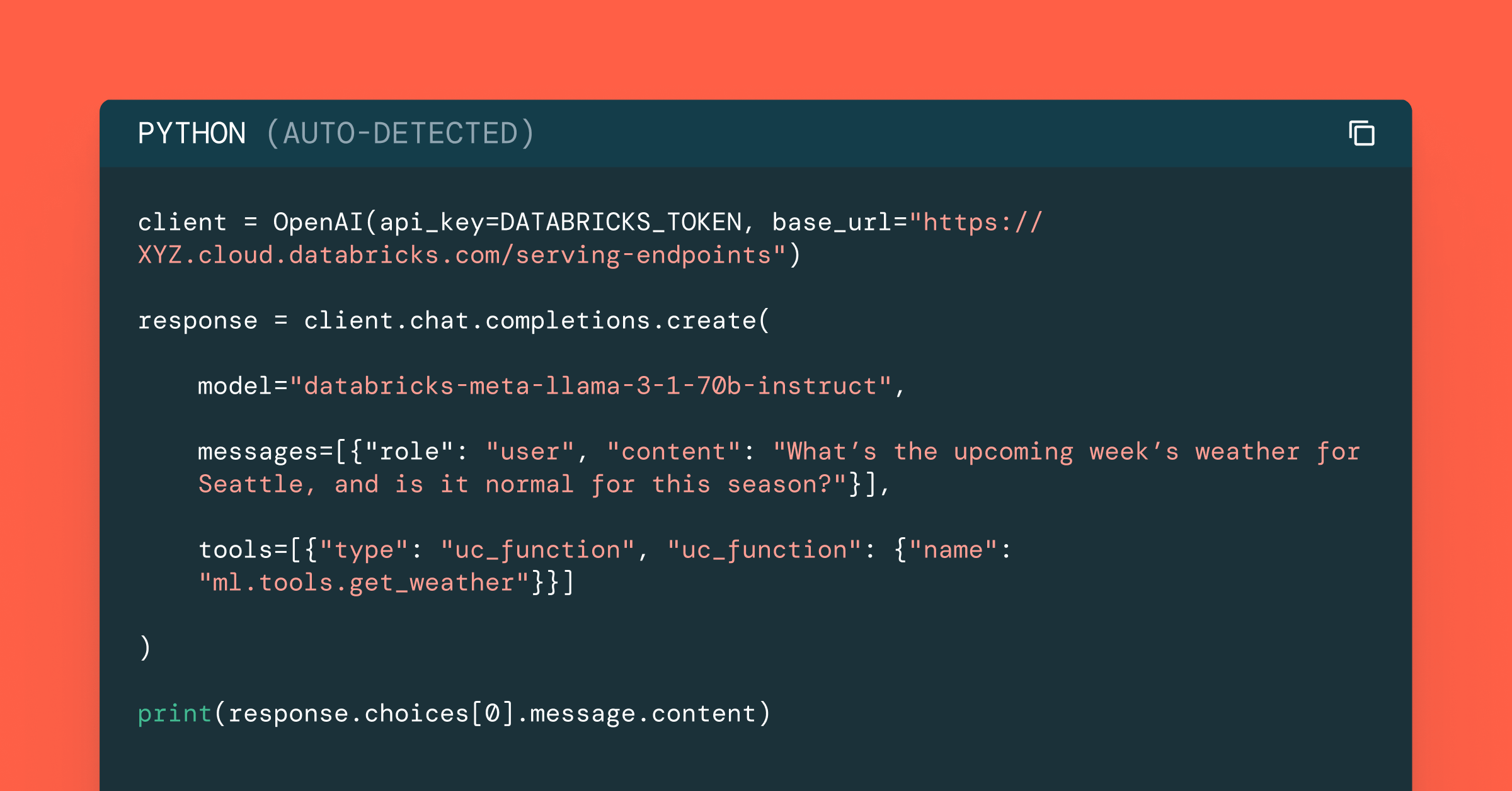

The walkthrough starts from data ingestion and ETL, using the binary file data source from Apache Spark to load raw image files and store them in a Delta Lake table. A small code change then enables Spark Structured Streaming to continuously discover and import new images, keeping the table up to date. From the Delta Lake table, a Pandas UDF wraps single-node code to perform distributed model inference in Spark.
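The pipeline described above can be sketched roughly as follows. This is a minimal illustration, not the notebook code from the webinar: the function names (`predict_batch`, `run_batch_inference`, `start_streaming_ingest`), the path arguments, and the placeholder "model" (mean byte value of each file) are all assumptions made for this example. It also assumes PySpark 3.0 or later and a Spark session that already has Delta Lake configured.

```python
import pandas as pd


def predict_batch(content: pd.Series) -> pd.Series:
    """Single-node scoring code: one score per raw image payload.

    Placeholder "model" (an assumption for illustration): the mean byte
    value of each file. A real pipeline would decode the bytes and run
    a deep learning model on the decoded batch here.
    """
    scores = content.apply(lambda b: sum(b) / len(b) if len(b) else 0.0)
    return scores.astype("float64")


def run_batch_inference(spark, image_dir: str, table_path: str):
    """Batch path: raw files -> Delta table -> distributed inference."""
    # Imported lazily so predict_batch can be tested without Spark installed.
    from pyspark.sql.functions import col, pandas_udf

    # The binary file source yields: path, modificationTime, length, content.
    images = spark.read.format("binaryFile").load(image_dir)
    images.write.format("delta").mode("append").save(table_path)

    # Wrap the single-node function so Spark applies it to batches of rows.
    predict_udf = pandas_udf(predict_batch, returnType="double")
    table = spark.read.format("delta").load(table_path)
    return table.select(col("path"), predict_udf(col("content")).alias("score"))


def start_streaming_ingest(spark, image_dir: str, table_path: str,
                           checkpoint_path: str):
    """Streaming path: keep the Delta table up to date as new files arrive."""
    # Streaming file sources need an explicit schema; reuse the batch one.
    schema = spark.read.format("binaryFile").load(image_dir).schema
    return (
        spark.readStream.format("binaryFile")
        .schema(schema)
        .load(image_dir)
        .writeStream.format("delta")
        .option("checkpointLocation", checkpoint_path)
        .start(table_path)
    )
```

Keeping `predict_batch` free of Spark imports means the scoring logic can be developed and tested on a single machine with plain pandas, then handed to `pandas_udf` unchanged.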

We demonstrated these concepts using the Simple Distributed Deep Learning Model Inference Notebooks and Tutorials.

Here are some additional deep learning tutorials and resources available from Databricks.

- Deep Learning Documentation

- Deep Learning Fundamental Series

- Simple Steps to Distributed Deep Learning

If you’d like free access to the Databricks Unified Analytics Platform to try our notebooks on it, you can access a free trial here.
