MLflow for Bayesian Experiment Tracking

This post is the third in a series on Bayesian inference ([1], [2]). Here we illustrate how to use managed MLflow on Databricks to run and track Bayesian experiments with the Python package PyMC3. The result is systematic, reproducible experimentation in ML pipelines that can be shared across data science teams, thanks to the version control and variable tracking features. The data tracked by MLflow can be accessed through the managed service on Databricks, using either the UI or the API. Data scientists who are not using the managed MLflow service can use the API to access the experiments and the associated data. On Databricks, access to the data and the different models is managed through the access control lists (ACLs) that MLflow provides. The models can then be productionized and deployed through a variety of frameworks.

Tracking Bayesian experiments

What does MLflow do?

MLflow is an open-source framework for managing your ML lifecycle. It can be used either through the managed service on Databricks or as a stand-alone deployment installed from the open-source libraries. This post primarily deals with experiment tracking, but we will also share how MLflow can help with storing the trained models in a central repository and with model deployment. In the context of tracking, MLflow allows you to store:

- Metrics -- usually related to the model performance, such as deviance or Rhat.

- Parameters -- variables that help to define your model or run. In a Bayesian setting, these can be your hyperparameters or the parameters of your prior and hyperprior distributions. Note that these are always stored as string values.

- Tags -- key-value pairs that keep track of information about your run, such as a major revision of the code that adds a feature.

- Notes -- any information regarding your run that you can enter in the MLflow UI. This can be a qualitative evaluation of the run results and can be quite a useful tool for systematic experimentation.

- Artifacts -- byproducts or outputs of your experiment, such as files and images.
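
As a rough sketch of how these five kinds of data map onto the tracking API, the snippet below logs one of each inside a run. The parameter names, values, tag and file name are made up for illustration. The note is written through the reserved tag mlflow.note.content, which is the tag behind the description box in the UI.

```python
def stringify_params(params):
    """Mimic how MLflow stores parameters: every value becomes a string."""
    return {name: str(value) for name, value in params.items()}


def log_example_run(params, rhat, summary_path):
    """Log one item of each kind. Assumes MLflow is installed and a store is configured."""
    import mlflow  # imported here so stringify_params works without MLflow

    with mlflow.start_run():
        mlflow.log_params(params)                                     # parameters
        mlflow.log_metric("rhat_max", rhat)                           # metric
        mlflow.set_tag("code_version", "v2-added-hierarchy")          # tag (hypothetical)
        mlflow.set_tag("mlflow.note.content", "Chains mixed well.")   # note
        mlflow.log_artifact(summary_path)                             # artifact (existing local file)


# Hypothetical settings for a Normal prior on a regression slope.
prior_params = {"slope_prior": "Normal", "slope_mu": 0, "slope_sigma": 10.0}
print(stringify_params(prior_params))
# log_example_run(prior_params, rhat=1.01, summary_path="summary.json")
```

Because parameters come back as strings, numeric values such as prior scales need to be converted again when they are read from a previous run.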

Setting up a store for open-source MLflow

This section only applies to the open-source deployment of MLflow, since the hosted MLflow on Databricks takes care of this automatically. MLflow has a backend store and an artifact store. As the name indicates, the artifact store holds all the artifacts (including metadata) associated with a model run, and everything else lives in the backend store. If you are running MLflow locally, you can configure the backend store, which can be either a file store or a database-backed store. You can also run a tracking server anywhere you choose by using the mlflow server command.
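
One possible way to start such a server is sketched below in Python, which only assembles the arguments for the mlflow server command. The SQLite file, artifact folder, host and port are assumptions chosen for a local setup, not values from the original post.

```python
import shlex


def tracking_server_command(backend_store_uri, artifact_root, host="127.0.0.1", port=5000):
    """Build the argument list for the `mlflow server` CLI."""
    return [
        "mlflow", "server",
        "--backend-store-uri", backend_store_uri,
        "--default-artifact-root", artifact_root,
        "--host", host,
        "--port", str(port),
    ]


# Assumed locations: a local SQLite database and a local artifact folder.
command = tracking_server_command("sqlite:///mlflow.db", "./mlflow-artifacts")
print(shlex.join(command))
# To actually start the server from Python: subprocess.Popen(command)
```

The printed line can also be pasted directly into a terminal. A database-backed URI is used here as an example; a plain folder path would give a file store instead.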

You can then point your client at that tracking server by passing its URI to mlflow.set_tracking_uri().
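
A minimal sketch of that call follows. The host and port are assumed to be those of a server running locally, and tracking_uri() and use_tracking_server() are hypothetical helper names introduced only for this example.

```python
def tracking_uri(host="127.0.0.1", port=5000):
    """Compose the HTTP address of a tracking server (assumed local by default)."""
    return f"http://{host}:{port}"


def use_tracking_server(uri):
    """Send all subsequent MLflow logging calls to the given server."""
    import mlflow  # imported here so tracking_uri works without MLflow

    mlflow.set_tracking_uri(uri)
    return mlflow.get_tracking_uri()


print(tracking_uri())
# use_tracking_server(tracking_uri())
```

MLflow also reads the MLFLOW_TRACKING_URI environment variable, which can be more convenient when the same code runs against different servers.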

The workflow for tracking a Bayesian experiment

On Databricks, all of this is managed for you, minimizing the configuration time needed to get started on your model development workflow. However, the following applies to both managed and open-source MLflow deployments. MLflow creates an experiment, identified by an experiment ID, and each experiment consists of a series of runs, each identified by a run ID. Each run has its own logged parameters and artifacts. Here are the steps to create a workflow:

- Create an experiment by passing the path to the experiment folder; this returns an experiment ID. You can also provide a path for storing your artifacts, such as files and images.

- Start a run with the experiment ID returned from the above step. The PyMC3 inference code goes inside this context manager.

- Use tags to version your code and data used.

- Log the model and run parameters, specifically the prior and hyperprior distribution parameters, the number of samples and tuning samples, and the likelihood distribution.

- Once the model has finished sampling, the results contained in the trace can be saved as an artifact using the log_artifacts() method. This will be a folder called ‘trace’ that contains all the information about the samples drawn by each of the chains. The trace can be summarized by invoking the PyMC3 summary() method on the trace object. The trace summary is a data frame that can be saved as a JSON string using the log_text() method from MLflow.
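
The steps above can be sketched roughly as follows. This is an illustration rather than the code from the attached notebook: the toy model (a Normal mean with known unit variance), the parameter names, and the tag are assumptions, and the sampling call assumes PyMC3 3.9 or later so that return_inferencedata is accepted.

```python
def run_parameters(prior_mu, prior_sigma, draws, tune, likelihood="Normal"):
    """Collect the prior, sampler and likelihood settings to log with the run."""
    return {
        "prior_mu": prior_mu,
        "prior_sigma": prior_sigma,
        "draws": draws,
        "tune": tune,
        "likelihood": likelihood,
    }


def track_experiment(experiment_path, observed, params, code_version):
    """Run one tracked PyMC3 fit. Assumes mlflow and pymc3 (3.x) are installed."""
    import mlflow
    import pymc3 as pm

    # Step 1: create the experiment (raises if the name already exists).
    experiment_id = mlflow.create_experiment(experiment_path)
    # Step 2: start a run; the inference code lives inside this context manager.
    with mlflow.start_run(experiment_id=experiment_id) as run:
        mlflow.set_tag("code_version", code_version)   # step 3: version tag
        mlflow.log_params(params)                      # step 4: priors and sampler settings
        with pm.Model():
            mu = pm.Normal("mu", mu=params["prior_mu"], sigma=params["prior_sigma"])
            pm.Normal("y", mu=mu, sigma=1.0, observed=observed)
            trace = pm.sample(draws=params["draws"], tune=params["tune"],
                              return_inferencedata=False)
            pm.save_trace(trace, directory="trace", overwrite=True)
            summary = pm.summary(trace)
        # Step 5: upload the trace folder and the summary as artifacts.
        mlflow.log_artifacts("trace", artifact_path="trace")
        mlflow.log_text(summary.to_json(), "summary.json")
    return run.info.run_id


params = run_parameters(prior_mu=0.0, prior_sigma=10.0, draws=1000, tune=500)
print(params)
# run_id = track_experiment("/Shared/bayes-demo", observed=[0.4, 0.6, 0.5],
#                           params=params, code_version="v1")
```

Returning the run ID makes it easy to look the run up again later, for example to download its artifacts.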

Inspecting an experiment

Once the experiment has completed, you can go back and inspect it in the MLflow UI or extract the run information programmatically. For example, if the current experiment ID is ‘10618537’, you can retrieve the experiment's metadata, such as its name, artifact location and lifecycle stage, by passing that ID to mlflow.get_experiment().
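
A small sketch of such a lookup is given below. describe_experiment() and fetch_experiment() are hypothetical helpers, and the stand-in object with placeholder values is there only so the snippet runs without a tracking server.

```python
from types import SimpleNamespace


def describe_experiment(experiment):
    """Pull the main fields from an MLflow Experiment entity into a dict."""
    return {
        "experiment_id": experiment.experiment_id,
        "name": experiment.name,
        "artifact_location": experiment.artifact_location,
        "lifecycle_stage": experiment.lifecycle_stage,
    }


def fetch_experiment(experiment_id):
    """Look up a real experiment. Assumes MLflow is installed and configured."""
    import mlflow

    return describe_experiment(mlflow.get_experiment(experiment_id))


# Stand-in with placeholder values; a real call would be fetch_experiment("10618537").
stand_in = SimpleNamespace(
    experiment_id="10618537",
    name="/placeholder/experiment",
    artifact_location="placeholder-artifact-location",
    lifecycle_stage="active",
)
info = describe_experiment(stand_in)
print(info)
```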

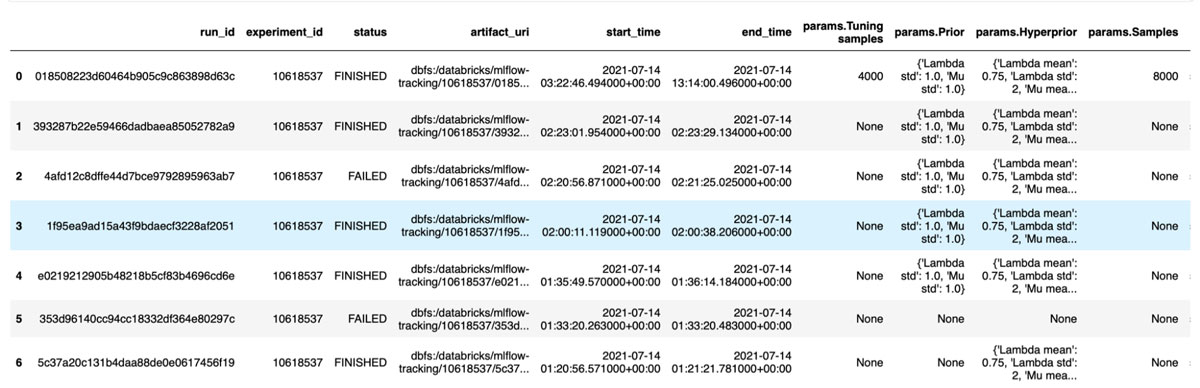

Search for an experiment run

Assuming that you know your experiment ID, you can search for all the runs within an experiment and extract the data stored for each run, for example with mlflow.search_runs().
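
As a hedged illustration, mlflow.search_runs() returns a pandas data frame whose metric, parameter and tag columns are prefixed with metrics., params. and tags. respectively. The helper split_run_columns() below is a hypothetical convenience for grouping those column names, and the example column names are made up.

```python
def split_run_columns(columns):
    """Group search_runs() column names by their metrics./params./tags. prefix."""
    groups = {"metrics": [], "params": [], "tags": [], "info": []}
    for column in columns:
        prefix, _, rest = column.partition(".")
        if rest and prefix in ("metrics", "params", "tags"):
            groups[prefix].append(rest)
        else:
            groups["info"].append(column)  # run-level fields such as run_id
    return groups


def runs_for_experiment(experiment_id):
    """Fetch all runs of an experiment. Assumes MLflow is installed and configured."""
    import mlflow

    return mlflow.search_runs(experiment_ids=[experiment_id])


# Example column names as they might appear for a run logged with one metric,
# one parameter and one tag.
example_columns = ["run_id", "status", "metrics.rhat_max", "params.draws", "tags.code_version"]
grouped = split_run_columns(example_columns)
print(grouped)
# runs = runs_for_experiment("10618537"); split_run_columns(runs.columns)
```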

Accessing the artifacts from a run

The artifacts associated with a run can be listed through the API. The file size and path are shown for each file.
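
One way to produce such a listing is sketched below using MlflowClient.list_artifacts(), which returns entries carrying a path, a directory flag and a file size. The formatting helper and the stand-in entries (with made-up sizes) are assumptions added so the snippet can run without a tracking server.

```python
from types import SimpleNamespace


def format_artifacts(file_infos):
    """Turn FileInfo-like objects (path, is_dir, file_size) into readable lines."""
    lines = []
    for info in file_infos:
        size = "dir" if info.is_dir else f"{info.file_size} bytes"
        lines.append(f"{info.path} ({size})")
    return lines


def list_run_artifacts(run_id, path=None):
    """List a run's artifacts. Assumes MLflow is installed and configured."""
    from mlflow.tracking import MlflowClient

    return format_artifacts(MlflowClient().list_artifacts(run_id, path))


# Stand-ins for the entries a run with a trace folder and a summary file might return.
stand_ins = [
    SimpleNamespace(path="trace", is_dir=True, file_size=None),
    SimpleNamespace(path="summary.json", is_dir=False, file_size=2048),
]
lines = format_artifacts(stand_ins)
print("\n".join(lines))
```

Passing a folder such as the trace directory as the path argument lists the files inside it.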

MLflow manages the artifacts for each run; you can either view and download them using the UI or access them through the API. For instance, the trace information and the trace summary from a prior run can be downloaded and loaded back into PyMC3.
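
A possible sketch of that reload is shown below. It assumes the trace was uploaded under an artifact folder named trace and the summary as summary.json, and that PyMC3 3.x is in use so that load_trace() is available. The temporary file with made-up numbers only stands in for a downloaded summary so the snippet runs offline.

```python
import json
import os
import tempfile


def read_summary(path):
    """Load a trace summary that was saved as JSON text."""
    with open(path) as handle:
        return json.load(handle)


def fetch_artifact(run_id, artifact_path, dst_path=None):
    """Download one artifact (file or folder) and return its local path."""
    from mlflow.tracking import MlflowClient

    return MlflowClient().download_artifacts(run_id, artifact_path, dst_path)


def reload_trace(run_id, model):
    """Rebuild the trace of a prior run; `model` must match the model that produced it."""
    import pymc3 as pm

    trace_dir = fetch_artifact(run_id, "trace")
    return pm.load_trace(directory=trace_dir, model=model)


# Stand-in for a downloaded summary.json (made-up numbers).
with tempfile.TemporaryDirectory() as tmp:
    summary_path = os.path.join(tmp, "summary.json")
    with open(summary_path, "w") as handle:
        json.dump({"mean": {"mu": 0.52}, "sd": {"mu": 0.11}}, handle)
    summary = read_summary(summary_path)
print(summary["mean"]["mu"])
```

With a real run, read_summary(fetch_artifact(run_id, "summary.json")) would replace the stand-in file.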

If you reload these artifacts, you will notice that the trace summary contains the same information as before. The parameter estimates loaded from the trace or the trace summary, as described by their distributions, now become the parameters of the current model. If desired, you can continue to fit new data to the model by using the currently estimated posteriors as the priors for a future training cycle.
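
One simple way to carry a posterior forward, sketched here under a strong assumption, is to approximate each posterior by a Normal distribution with the mean and standard deviation reported in the trace summary. This moment-matching shortcut is not taken from the original notebook; it discards skew and correlations between parameters, so it is only reasonable when the posterior is close to Normal. The variable name mu and the numbers are made up.

```python
import json


def normal_prior_from_summary(summary_json, variable):
    """Return (mu, sigma) for a Normal prior matched to a posterior's mean and sd."""
    summary = json.loads(summary_json)
    return summary["mean"][variable], summary["sd"][variable]


# Summary text in the layout produced by DataFrame.to_json() (made-up numbers).
summary_json = '{"mean": {"mu": 0.52}, "sd": {"mu": 0.11}}'
prior_mu, prior_sigma = normal_prior_from_summary(summary_json, "mu")
print(prior_mu, prior_sigma)
# Inside a new pm.Model(): pm.Normal("mu", mu=prior_mu, sigma=prior_sigma)
```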

Conclusion

In this post, we have seen how one can use MLflow to systematically perform Bayesian experiments using PyMC3. The logging and tracking functionality provided by MLflow can be accessed either through the managed MLflow provided by Databricks or for open-source users through the API. Models and model summaries can be saved as artifacts and can be shared or reloaded into PyMC3 at a later time.

To learn more about managed MLflow for Bayesian experiments, please check out the attached notebook. You can learn more about Bayesian inference in my Coursera courses:

- Bayesian Inference with MCMC

- Introduction to PyMC3 for Bayesian Modeling and Inference
