Azure Databricks를 이용한 데이터 유출 방지

데이터 유출을 방지하기 위해 안전한 Azure Databricks 아키텍처를 설정하는 방법에 대한 자세한 내용을 알아보세요

작성자: Ganesh Rajagopal, Bruce Nelson , 바빈 쿠카디아

마지막 업데이트: 2025년 10월 30일

필수 자료

시작하기 �전에 다음 주제에 익숙해졌는지 확인하십시오.

- Azure Databricks 서버리스 컴퓨팅 아키텍처

- 주요 Databricks 용어

- 프론트엔드 및 백엔드 Azure Databricks Private Link(PL)란 무엇인가요? ?

- Private Link 사용 워크스페이스 요구 사항

- Azure 워크스페이스용 서비스 엔드포인트란 무엇인가요?

- 인바운드 컨트롤러 IP 액세스 목록

- 보안 클러스터 연결

- Databricks 네트워킹

- Unity Catalog

Databricks Lakehouse Platform은 엔터프라이즈급 데이터 솔루션을 대규모로 구축, 배포, 공유 및 유지 관리하기 위한 통합 도구 세트를 제공합니다. Databricks는 클라우드 스토리지 및 보안과 통합되며, 사용자를 대신하여 클라우드 인프라를 관리하고 배포합니다.

이 문서의 전반적인 목표는 다음 위험을 완화하는 것입니다.

- Databricks 웹 애플리케이션을 사용하는 인터넷 또는 승인되지 않은 네트워크의 브라우저에서 데이터 액세스.

- Databricks API를 사용하는 인터넷 또는 승인되지 않은 네트워크의 클라이언트에서 데이터 액세스.

- Azure Private Link 또는 서비스 엔드포인트를 사용하는 인터넷 또는 승인되지 않은 네트워크의 클라이언트에서 데이터 액세스.

- Azure Databricks 클러스터의 손상된 워크로드가 Azure 또는 인터넷의 승인되지 않은 스토리지 리소스에 데이터를 쓰는 것.

Azure Databricks는 1등급 서비스이며 전송 중 및 저장 중인 데이터를 보호하는 데 도움이 되는 Azure의 기본 도구 및 서비스를 지원합니다. Azure Databricks는 사용자 정의 경로, 방화벽 규칙 및 네트워크 보안 그룹과 같은 네트워크 보안 제어를 지원합니다.

이 블로그의 기술적 목표 외에도 다음과 같은 사항을 고려한 개념을 제시하고자 합니다.

- 단순성: 모든 보안 설계는 잘 이해되고 유지 관리 가능해야 하며 조직의 기술 세트 내에 있어야 합니다. 구현되었지만 완전히 이해되지 않은 보안 솔루션은 의도치 않게 손상될 수 있습니다.

- 솔루션의 운영 비용은 항상 고려해야 합니다. 비용이 너무 높아 보안 설계가 포기되면 솔루션이 효과적이지 않은 것입니다. 보안은 비용을 의식하고 지속 ��가능해야 합니다.

비용 절감 또는 비용 문제 영역을 지적하고 가능한 한 이유와 작동 방식을 명확히 하도록 노력하겠습니다.

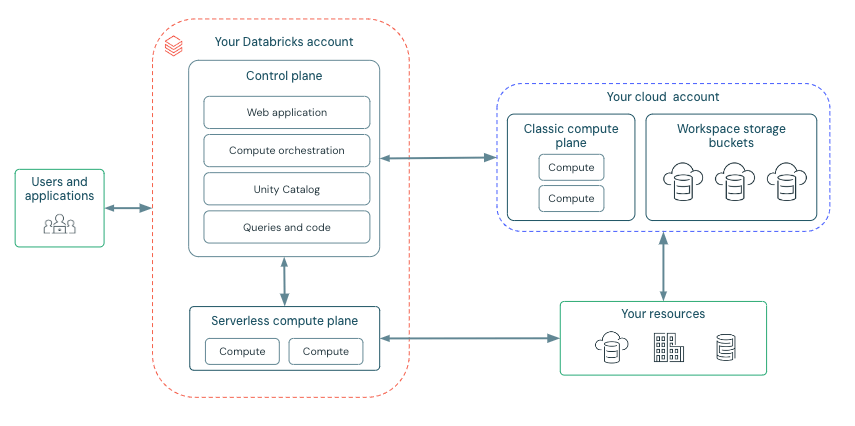

시작하기 전에 Azure Databricks 배포 아키텍처를 여기에서 간단히 살펴보겠습니다:

Azure Databricks는 팀 간의 안전한 협업을 촉진하도록 구성되어 있으며, 데이터 과학, 데이터 분석 및 데이터 엔지니어링에 집중할 수 있도록 많은 백엔드 서비스를 관리합니다.

Azure Databricks는 두 가지 주요 구성 요소인 제어 평면과 컴퓨팅 평면을 중심으로 구성됩니다.

제어 평면:

Databricks가 자체 Azure 계정 내에서 관리하는 Azure Databricks 제어 평면은 플랫폼의 핵심 인텔리전스 역할을 합니다. 사용자 인증, 클러스터 및 작업 오케스트레이션, 워크스페이스 관리를 위한 백엔드 서비스를 제공하며, 서비스 상호 작용을 위한 웹 인터페이스 및 API 엔드포인트를 제공합니다.

컴퓨팅 리소스의 수명 주기를 오케스트레이션하지만 데이터를 직접 처리하지는 않습니다. 대신 제어 평면은 고객의 Azure 구독 또는 서버리스 배포의 Databricks 테넌트 내에서 작동하는 별도의 컴퓨팅 평면으로 데이터 처리를 지시합니다. 노트북 명령 및 기타 많은 워크스페이스 구성은 제어 평면에 저장되며 저장 시 암호화됩니다 .

컴퓨팅 평면:

컴퓨팅 평면은 데이터 처리를 담당합니다. 사용되는 컴퓨팅의 특정 유형(서버리스 또는 클래식)은 선택한 컴퓨팅 리소스 및 워크스페이스 구성에 따라 달라집니다. 서버리스 및 클래식 컴퓨팅은 기본 워크스페이스 스토리지(dbfs) 및 Azure 테넌트에 연결된 관리 ID와 같은 일부 리소스를 공유합니다.

서버리스 컴퓨팅

서버리스 컴퓨팅의 경우 리소스는 Databricks에서 관리하는 Azure의 컴퓨팅 평면 내에서 작동합니다. Azure Databricks는 프로비저닝, 확장 및 유지 관리를 포함한 거의 모든 기본 인프라를 처리합니다. 이 접근 방식은 다음을 제공합니다.

- 간소화된 작업: 사용자는 클러스터 또는 가상 머신을 관리할 필요 없이 데이터 엔지니어링 및 데이터 과학 작업에 집중할 수 있습니다.

- 비용 효율성: 사용자는 워크로드 실행 중에 적극적으로 소비된 컴퓨팅 리소스에 대해서만 요금이 청구되므로 유휴 클러스터와 관련된 비용이 제거됩니다.

서버리스 리소스는 필요에 따라 사용할 수 있어 유휴 시간 비용이 절감됩니다. 또한 여러 계층의 보안 및 네트워크 제어가 있는 Azure Databricks 계정의 보안 네트워크 경계 내에서 실행됩니다.

클래식 Azure Databricks 컴퓨팅

클래식 Azure Databricks 컴퓨팅을 사용하면 리소스가 Azure 클라우드 테넌트 내에 있습니다. 이를 통해 고객 관리 컴퓨팅을 사용할 수 있으며, Databricks 클러스터는 Databricks 테넌트가 아닌 Azure 구독 내의 리소스에서 실행됩니다. 이를 통해 다음을 제공합니다.

- 자연스러운 격리: 작업은 자체 Azure 구독 및 가상 네트워크 내에서 발생합니다.

- 보안 연결: 사용자가 관리하고 제어하는 서비스 엔드포인트 또는 프라이빗 엔드포인트를 통해 다른 Azure 서비스에 대한 안전한 연결을 활성화합니다.

중요 참고: 클래식 SQL 웨어하우스를 포함한 클래식 클러스터는 Azure 구독에서 리소스를 프로비저닝해야 하므로 서버리스 옵션보다 시작 시간이 더 길 수 있습니다.

서버리스 전용 Databricks 워크스페이스 배포(신규): 서버리스 전용 워크스페이스는 서버리스 컴퓨팅만 실행할 수 있는 워크스페이스입니다. 클래식 컴퓨팅이 없으므로 모든 시스템 리소스는 Azure Databricks에서 관리하며, 이는 워크스페이스 기본 스토리지까지 모든 기본 인프라를 처리합니다.

개략적인 아키텍처

네트워크 통신 경로

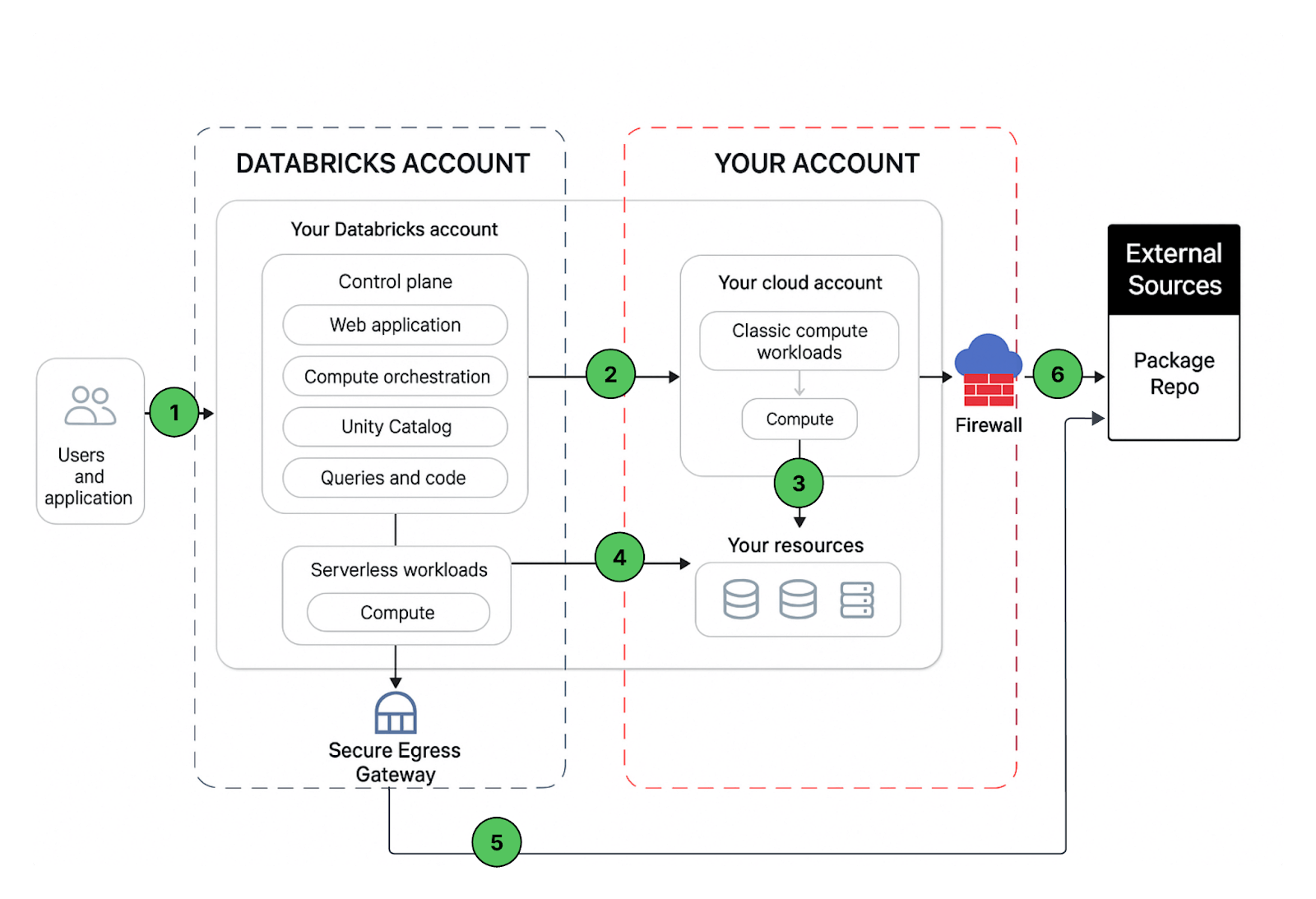

보안하려는 통신 경로를 이해해 보겠습니다. Azure Databricks는 아래와 같이 다양한 방식으로 사용자와 애플리케이션에서 사용할 수 있습니다.

Databricks 워크스페이스 배포에는 보안할 수 있는 다음 네트워크 경로가 포함됩니다.

- 사용자 또는 애플리케이션에서 Azure Databricks 웹 애플리케이션(워크스페이스 또는 Databricks REST API)으로

- Azure Databricks 클래식 컴퓨팅 평면 가상 네트워크에서 Azure Databricks 제어 평면 서비스로. 여기에는 보안 클러스터 연결 릴레이 및 REST API 엔드포인트에 대한 워크스페이스 연결이 포함됩니다.

- 클래식 컴퓨팅 평면에�서 스토리지 서비스(예: ADLS gen2, SQL 데이터베이스)로

- 서버리스 컴퓨팅 평면에서 스토리지 서비스(예: ADLS gen2, SQL 데이터베이스)로

- 네트워크 정책(아웃바운드 방화벽)을 통한 서버리스 컴퓨팅 평면의 보안 아웃바운드 트래픽을 외부 데이터 소스(예: pypi 또는 maven과 같은 패키지 리포지토리)로

- 아웃바운드 방화벽을 통한 클래식 컴퓨팅 평면의 보안 아웃바운드 트래픽을 외부 데이터 소스(예: pypi 또는 maven과 같은 패키지 리포지토리)로 (Azure에서 실행되는 모든 아웃바운드 어플라이언스, 예: Palo Alto)

최종 사용자 관점에서 항목 1은 인바운드 제어가 필요하고 항목 2~6은 아웃바운드 제어가 필요합니다.

이 문서에서는 Databricks 워크로드의 아웃바운드 트래픽 보안에 중점을 두고 제안된 배포 아키텍처에 대한 규범적 지침을 제공하며, 이를 수행하는 동안 인바운드(사용자/클라이언트에서 Databricks로) 트래픽을 보호하기 위한 모범 사례도 공유할 것입니다.

워크스페이스 배포 옵션

Azure Databricks 작업 영역을 온프레미스 또는 VPN 연결(인터넷 액세스 없음)에서 액세스할 수 있도록 안전하게 만드는 여러 가지 옵션이 있습니다. 모범 사례로, 전용 엔드포인트(Private Link)를 사용하여 작업 영역 액세스를 보호하는 것이 좋습니다. 이때 표준 또는 간소화된 배포를 사용합니다. 권장 옵션은 표준 배포입니다. 작업 영역은 Azure Portal 또는 All in one ARM 템플릿을 통해 배포하거나, 보안 모범 사례로 구성된 Databricks 작업 영역 및 클라우드 인프라 배포를 지원하는 보안 참조 아키텍처(SRA) Terraform 템플릿을 사용하여 배포할 수 있습니다.

프런트 엔드 대 백 엔드 전용 링크: 프런트 엔드 전용 링크는 사용자 대 작업 영역이라고도 합니다. 백 엔드 전용 링크는 컴퓨팅 평면 대 제어 평면이라고도 합니다.

표준 배포(권장): 보안 강화를 위해 Databricks는 별도의 전송 VNet에서 프런트 엔드(클라이언트) 연결을 위해 별도의 전용 엔드포인트를 사용하도록 권장합니다. 프런트 엔드 및 백 엔드 전용 링크 연결을 모두 구현하거나 백 엔드 연결만 구현할 수 있습니다. 별도의 VNet을 사용하여 사용자 액세스를 캡슐화하고, 클래식 데이터 평면의 컴퓨팅 리소스에 사용하는 VNet과 분리합니다. 백 엔드 및 프런트 엔드 액세스를 위해 별도의 전용 엔드포인트를 만듭니다. 표준 배포로 Azure Private Link 사용의 지침을 따릅니다.

이러한 서비스는 백 엔드 전용 엔드포인트를 통해 액세스할 수 없으므로 컴퓨팅 평면에서 시스템 스토리지, 메시징 및 메타데이터 액세스에 대한 추가 고려 사항이 필요합니다.

시스템 관리 스토리지 계정(클래식 컴퓨팅 평면만 해당): 이러한 스토리지 계정은 Databricks 클러스터를 부팅하고 모니터링하는 데 필요합니다. 이러한 스토리지 계정은 Databricks 테넌트에 있으며 서비스 엔드포인트 정책(권장)을 통해 허용해야 합니다. 대안으로는 스토리지 서비스 태그를 사용하는 것이 있지만 이는 너무 광범위하여 데이터를 유출하기 쉬우며, 또는 FQDN 또는 IP 주소의 개별 허용 목록(권장하지 않음)을 사용할 수 있습니다.

- 아티팩트: Databricks 런타임 이미지 > 11GB / 클러스터 노드 읽기 전용

- 로깅: 감사 로깅을 포함한 읽기/쓰기 집약적인 메시징.

- 시스템 테이블: 감사, UC 및 시스템 데이터 읽기 전용.

작업 영역 기본 스토리지(DBFS): 임시 공간, 서비스, 임시 SQL 결과(클라우드 페치), 드라이버에 사용되는 일반 분산 파일 시스템입니다. 클래식 컴퓨팅의 경우 전용 DBFS 기능을 사용하고 서버리스 컴퓨팅의 경우 서비스 엔드포인트 또는 전용 엔드포인트를 사용하여 전용 엔드포인트를 통해 보호할 수 있습니다.

메시징: (이벤트 허브, 클래식 컴퓨팅 평면만 해당) 계보 추적 및 기타 가벼운 메시징에 사용되는 공개적으로 액세스 가능한 리소스입니다. UDR 및/또는 방화벽에서 이벤트 허브 서비스 태그를 통해 허용할 수 있습니다.

메타데이터: (SQL, 클래식 컴퓨팅 평면만 해당): 레거시 Hive 메타스토어 트래픽에 사용되는 공개적으로 액세스 가능한 리소스입니다.

사용자 수준 스토리지 계정 액세스: 시스템 데이터가 아닌 고객 데이터에 사용되는 ALDS 및 Blob Storage 계정.

첫 번째 파티 리소스: Cosmos DB, Azure SQL, DataFactory 등…

외부 리소스: S3, BigQuery , Snowflake 등…

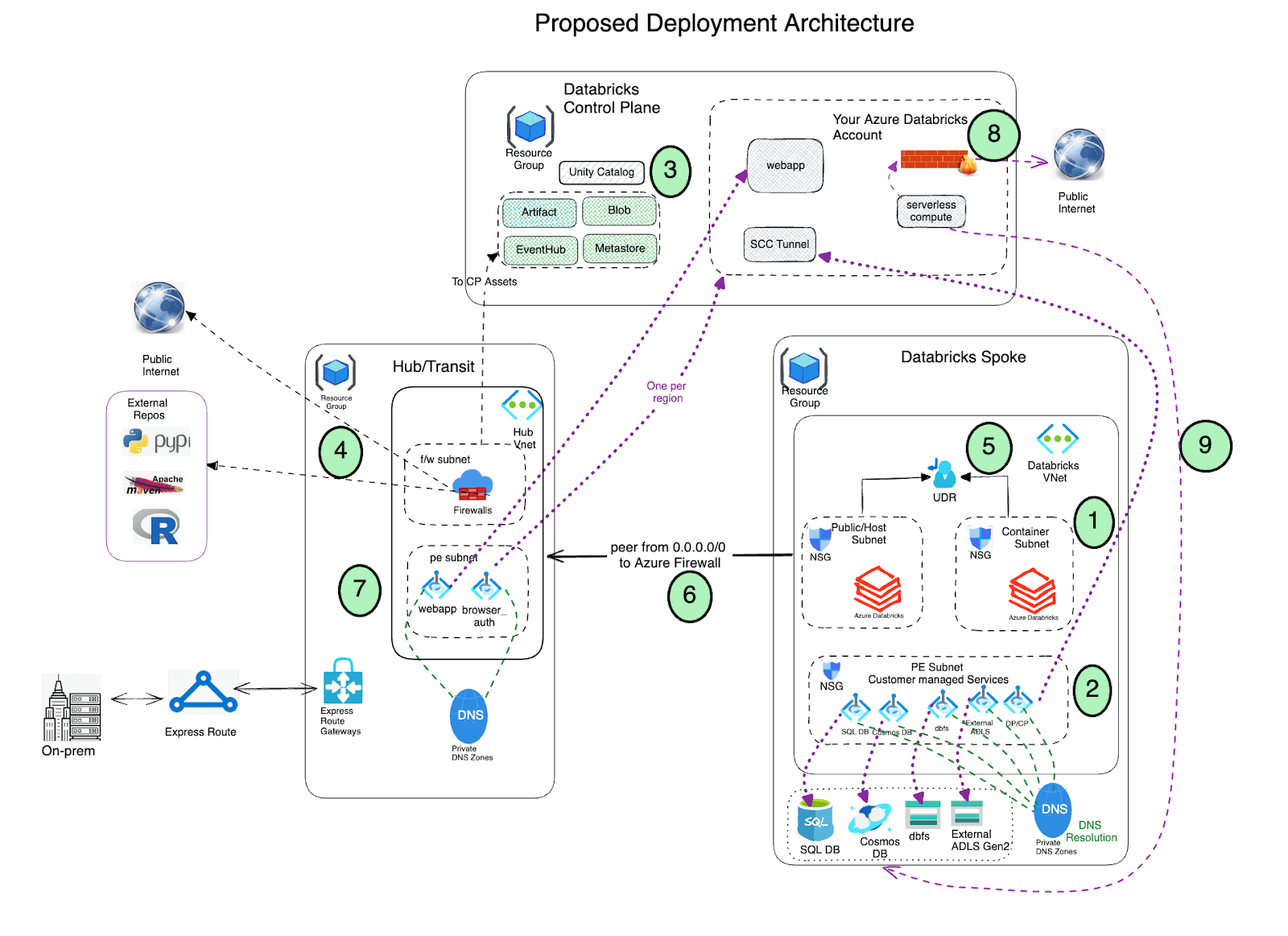

데이터 유출 보호 개요 아키텍처

허브 및 스포크 참조 아키텍처를 권장합니다. 이 모델에서는 허브 가상 네트워크가 검증된 소스 및 선택적으로 온프레미스 환경에 연결하는 데 필요한 공유 인프라를 호스트합니다. 스포크 가상 네트워크는 허브와 피어링되며 다양한 비즈니스 단위 또는 팀을 위한 격리된 Azure Databricks 작업 영역을 포함합니다.

이 허브-스포크 아키텍처를 사용하면 다양한 목적과 팀에 맞게 조정된 여러 스포크 VNet을 만들 수 있습니다. 단일 대규모 가상 네트워크 내에서 팀별로 별도의 서브넷을 만들어 격리를 달성할 수도 있습니다. 이러한 경우 자체 서브넷 쌍 내의 각기 다른 격리된 Azure Databricks 작업 영역을 만들고 동일한 가상 네트워크 내의 별도 서브넷에 Azure 방화벽을 배포할 �수 있습니다.

사전 요구 사항

| 항목 | 세부 정보 |

|---|---|

| 가상 네트워크 |

|

| 서브넷 | 세 개의 서브넷: 호스트(공용), 컨테이너(개인) 및 전용 엔드포인트 서브넷(스토리지, DBFS 및 사용할 수 있는 기타 Azure 서비스에 대한 전용 엔드포인트를 보유). |

| 라우팅 테이블 | Databricks 서브넷에서 네트워크 어플라이언스, 인터넷 또는 온프레미스 데이터 소스로 나가는 트래픽을 채널링합니다. |

| Azure 방화벽 | 나가는 모든 트래픽을 검사하고 허용/거부 정책에 따라 작업을 수행합니다. |

| 전용 DNS 영역 | 가상 네트워크 내의 도메인 이름을 관리하고 확인하는 안정적이고 안전한 DNS 서비스를 제공합니다(사용할 수 없는 경우 배포 중에 자동으로 생성될 수 있음). |

| 서비스 엔드포인트 정책 | 작업 영역 스토리지 계정(dbfs), 아티팩트 및 로깅 스토리지, 시스템 테이블에 대한 시스템 스토리지와 같은 비전용 엔드포인트 기반 스토리지 계정에 대한 액세스를 허용하는 정책입니다. |

| Azure Key Vault | DBFS, 관리 디스크 및 관리 서비스를 암호화하기 위한 CMK를 저장합니다. |

| Azure Databricks 액세스 커넥터 | Unity Catalog를 사용하도록 설정한 경우 필요합니다. Unity Catalog에 등록된 데이터에 액세스하기 위해 관리 ID를 Azure Databricks 계정에 연결합니다. |

| 방화벽에서 허용 목록에 추가할 Azure Databricks 서비스 목록 | 이 공개 문서를 따르고 Databricks 배포와 관련된 모든 IP 주소 및 도메인 이름을 나열하십시오. |

배포 아키텍처

- 스포크 가상 네트워크에 보안 클러스터 연결(SCC)을 사용하도록 설정하고 VNet 주입 및 전용 링크를 사용하여 Azure Databricks를 배포합니다.

- 가상 네트워크에는 각 Azure Databricks 작업 영역에 전용인 개인 서브넷과 공용 서브넷의 두 서브넷이 포함되어야 합니다(다른 명명 규칙을 사용해도 됩니다). 이러한 서브넷과 Azure Databricks 작업 영역 간에는 일대일 관계가 있습니다. 동일한 서브넷 쌍에서 여러 작업 영역을 공유할 수 없으며 각기 다른 작업 영역에 대해 새 서브넷 쌍을 사용해야 합니다.

- Azure Databricks는 로그 및 원격 분석을 저장하는 데 사용되는 배포 프로세스 중에 기본 Blob 스토리지(루트 스토리지라고도 함)를 생�성합니다. 이 스토리지에 대한 공개 액세스가 활성화되어 있더라도 이 스토리지에 생성된 거부 할당은 스토리지에 대한 직접적인 외부 액세스를 금지합니다. Databricks 작업 영역을 통해서만 액세스할 수 있습니다. Azure Databricks 배포는 이제 기본 작업 영역 스토리지 계정(DBFS)에 대한 전용 연결을 지원합니다.

- 중요: 모범 사례로 루트 컨테이너(DBFS) 스토리지에 애플리케이션 데이터를 저장하는 것은 권장되지 않습니다. DBFS 루트 컨테이너에 대한 액세스는 이제 비활성화할 수 있으며 대신 Unity Catalog 볼륨을 사용하는 것이 좋습니다. Unity Catalog 볼륨은 DBFS 루트 스토리지에 대한 최신 거버넌스 및 보안을 제공합니다.

- Azure Data Services (Storage accounts, Eventhub, SQL databases 등)에 대한 Private Link 엔드포인트를 Azure Databricks 스포크 가상 네트워크 내의 별도 서브넷에 설정합니다. 이렇게 하면 모든 워크로드 데이터에 기본 데이터 유출 방지 기능이 적용된 상태로 Azure 네트워크 백본을 통해 안전하게 액세스할 수 있습니다(자세한 내용은 이 블로그 참조). 또한 일반적으로 Azure Databricks 작업 영역을 호스팅하는 가상 네트워크와 피어링된 다른 가상 네트워크에 이러한 엔드포인트를 배포해도 괜찮습니다. Private Endpoints는 추가 비용이 발생하며, Azure 데이터 서비스에 액세스하기 위해 Private Endpoints 대신 (조직의 보안 정책에 따라) Service Endpoints를 활용하는 것이 좋습니다. 특히 보안 스토리지 계정 액세스를 위해 Service Endpoint Policies를 사용할 수 있습니다.

- 통합 거버넌스 솔루션을 위해 Azure Databricks Unity Catalog를 활용합니다.

Azure Firewall(또는 기타 네트워크 가상 어플라이언스)을 허브 가상 네트워크에 배포합니다. Azure Firewall을 사용하면 다음을 구성할 수 있습니다.

- 방화벽을 통해 액세스할 수 있는 정규화된 도메인 이름(FQDN)을 정의하는 애플리케이션 규칙. Azure Databricks 제어 평면 리소스(예: 제어 평면, 웹 앱 및 SCC 릴레이)에는 애플리케이션 규칙을 사용하는 것이 좋습니다.

- FQDN으로 구성할 수 없는 엔드포인트의 IP 주소, 포트 및 프로토콜을 정의하는 네트워크 규칙. 필요한 Azure Databricks 트래픽 중 일부는 네트워크 규칙을 사용하여 허용 목록에 추가해야 합니다.

Azure Firewall 대신 타사 방화벽 어플라이언스를 사용하는 경우에도 작동합니다. 그러나 각 제품에는 고유한 특성이 있으므로 관련 제품 지원 및 네트워크 보안 팀과 협력하여 문제를 해결하는 것이 좋습니다.

- 프라이빗 엔드포인트가 작업 영역에 대해 활성화된 경우 AzureDatabricks 서비스 태그는 필요하지 않습니다.

- 서비스 엔드포인트 정책을 사용할 때 방화벽에서 Databricks 서비스 스토리지 계정(아티팩트, 로깅 및 시스템 테이블)에 대한 네트워크 규칙이 필요하지 않습니다. 또한 스토리지 서비스 태그는 필요하거나 권장되지 않습니다.

- Azure Databricks는 NTP 서비스, CDN, cloudflare, GPU 드라이버 및 데모 데이터 세트를 위한 외부 스토리지에 추가 호출을 하여 적절하게 허용 목록에 추가해야 합니다.

Databricks 컴퓨팅 평면 서브넷의 비로컬 네트워크 트래픽은 사용자 정의 경로(예: 기본 경로 0.0.0.0/0)를 사용하여 Azure Firewall과 같은 나가는 어플라이언스를 통해 라우팅되어야 합니다. 이렇게 하면 모든 나가는 트래픽이 검사됩니다. 그러나 프라이빗 엔드포인트를 사용하는 제어 평면으로의 나가는 트래픽은 이러한 라우팅 테이블 및 나가는 어플라이언스를 우회합니다. 그러나 SQL, Event Hubs 및 스토리지와 같은 다른 제어 평면 구성 요소는 나가는 어플라이언스를 통해 라우팅됩니다.

- Databricks 서비스 스토리지 계정(아티팩트, 로깅 및 시스템 테이블)의 경우 잠재적인 스로틀링을 피하고 데이터 전송 비용을 줄이기 위해 나가는 어플라이언스(NVA 또는 방화벽)를 우회하는 옵션을 고려할 수 있습니다. 아티팩트 스��토리지에 대한 액세스만으로도 클러스터 노드당 최대 11GB가 다운로드될 수 있습니다. 스토리지에 대한 서비스 엔드포인트를 서비스 엔드포인트 정책과 함께 사용하는 것이 좋습니다. 이러한 정책은 작업 영역이 연결된 정책을 통해 서브넷에서 지정된 아티팩트, 로깅 및 시스템 테이블 스토리지 계정에만 액세스할 수 있도록 합니다. 서비스 엔드포인트 정책은 프라이빗 링크가 아닌 다른 스토리지 계정 액세스와도 호환됩니다. 서비스 엔드포인트 정책을 사용하면 스토리지 서비스 태그가 필요하거나 권장되지 않습니다.

- 또는 라우팅 테이블에 서비스 태그 규칙을 추가하여 제어 평면 자산으로의 나가는 트래픽을 인터넷으로 직접 라우팅하여 방화벽을 우회할 수 있습니다. 이렇게 하면 네트워크 가상 어플라이언스와 관련된 스로틀링 및 추가 데이터 전송 비용을 피하는 데 도움이 될 수 있습니다.

중요 고려 사항: 이렇게 하면 의도한 대상뿐만 아니라 해당 지역의 스토리지 계정 및 서비스로 나가는 트래픽이 허용됩니다. 이는 보안 아키텍처를 설계할 때 신중하게 고려해야 할 중요한 요소입니다.

- Azure Databricks 스포크 및 Azure Firewall 허브 가상 네트워크 간에 가상 네트워크 피어링을 구성합니다.

- 허브 VNet(프라이빗 엔드포인트 서브넷)에 프런트 엔드 및 브라우저 인증(SSO용)에 대한 프라이빗 엔드포인트를 배포합니다.

- 서버리스 컴퓨팅 네트워크 정책을 구성하여 나가는 네트워크 트래픽을 제어합니다. 서버리스 컴퓨팅은 Azure Databricks 계정에 연결되어 있습니다.

- Azure Databricks 네트워크 연결 구성(NCC)을 구성하여 Azure Private Link를 사용하여 서버리스 컴퓨팅 리소스와 Azure 스토리지 서비스(ADLS Gen2 및 SQL Database 등) 간의 안전한 연결을 설정합니다.

데이터 유출 방지 아키텍처에 대한 일반적인 질문

Azure Data Services로의 데이터 유출을 보호하기 위해 서비스 엔드포인트를 사용할 수 있나요?

예, 서비스 엔드포인트는 최적화된 경로를 통해 고객이 소유하고 관리하는 Azure 서비스(예: ADLS gen2, Azure KeyVault 또는 eventhub)에 안전하고 직접적인 연결을 제공합니다. 서비스 엔드포인트는 외부 Azure 리소스에 대한 연결을 가상 네트워크로만 제한하는 데 사용할 수 있습니다.

Databricks 관리 스토리지 서비스에 서비스 엔드포인트 정책을 사용할 수 있나요?

예, 서비스 엔드포인트 정책은 2025년 10월 1일부로 공개 미리 보기로 제공됩니다. 참조: 클래식 컴퓨팅에서 스토리지 액세스를 위한 Azure 가상 네트워크 서비스 엔드포인트 정책 구성

Azure Firewall 외에 네트워크 가상 어플라이언스(NVA)를 사용할 수 있나요?

예, 네트워크 트래픽 규칙이 이 문서에 설명된 대로 구성된 경우 타사 NVA를 사용할 수 있습니다. 이 설정은 Azure Firewall로만 테스트했지만 일부 고객은 다른 타사 어플라이언스를 사용합니다. 온프레미스보다는 클라우드에 어플라이언스를 배포하는 것이 이상적입니다.

Azure Databricks와 동일한 가상 네트워크에 방화벽 서브넷을 가질 수 있나요?

예, 가능합니다. Azure 참조 아키텍처에 따라 향후 계획을 더 잘 세우기 위해 허브-스포크 가상 네트워크 토폴로지를 사용하는 것이 좋습니다. Azure Firewall 서브넷을 Azure Databricks 작업 영역 서브넷과 동일한 가상 네트워크에 생성하기로 선택한 경우 위 6단계에서 설명한 가상 네트워크 피어를 구성할 필요가 없습니다.

Azure Databricks 제어 평면 SCC 릴레이 IP 트래픽을 Azure Firewall을 통해 필터링할 수 있나요?

예, 가능하지만 다음 사항을 염두에 두시기 바랍니다.

- Databricks 제어 평면에 대한 프라이빗 엔드포인트를 사용하는 경우 Azure Databricks �클러스터(데이터 평면)와 SCC 릴레이 서비스 간의 트래픽은 Azure 네트워크를 통해 비공개로 흐르며 공용 인터넷을 통과하지 않습니다. 이는 주로 Azure Databricks 작업 영역이 제대로 작동하는지 확인하기 위한 관리 트래픽입니다.

- Databricks 제어 평면에 대한 프라이빗 링크 액세스를 사용하지 않는 경우 SCC 릴레이 및 WebUI CIDR 범위는 AzureDatabricks 서비스 태그로 처리됩니다. 다른 방화벽/NVA 유형의 경우 Azure Databricks 서비스 및 에셋의 IP 주소 및 도메인의 최신 버전을 참조하십시오. 방화벽 규칙 구성에서 SCC 터널에 대한 애플리케이션 규칙 FDQN을 사용하는 것이 좋습니다.

- SCC Relay 서비스와 데이터 플레인 간에 안정적이고 신뢰할 수 있는 네트워크 통신이 필요합니다. 방화벽이나 가상 어플라이언스를 사이에 두면 단일 실패 지점이 발생할 수 있습니다. 예를 들어 방화벽 규칙 설정 오류나 예약된 다운타임이 발생하면 클러스터 부트스트랩에 과도한 지연(일시적인 방화벽 문제)이 발생하거나 새 클러스터를 생성하지 못하거나 작업 스케줄링 및 실행에 영향을 줄 수 있습니다.

Azure 방화벽에서 허용되거나 차단된 트래픽을 분석할 수 있나요?

예, 해당 요구 사항에는 Azure 방화벽 로그 및 메트릭 사용을 권장합니다.

기존 비 NPIP(관리형 Databricks 배포)를 NPIP 또는 PL 사용 가능 작업 영역으로 업그레이드할 수 있나요?

예, 관리형 Databricks 배포는 VNet 주입 작업 영역으로 업그레이드할 수 있습니다.

작업 영역당 두 개의 서브넷이 필요한 이유는 무엇인가요?

작업 영역에는 일반적으로 "호스트"(또는 "퍼블릭") 서브넷과 "컨테이너"(또는 "프라이빗") 서브넷이라고 하는 두 개의 서브넷이 필요합니다. 각 서브넷은 호스트(Azure VM)와 VM 내에서 실행되는 컨테이너(Databricks 런타임, 즉 dbr)에 IP 주소를 제공합니다.

퍼블릭 또는 호스트 서브넷에 퍼블릭 IP가 있나요?

보안 클러스터 연결(SCC)을 사용하여 작업 영역을 생성할 때 Databricks의 서브넷에는 퍼블릭 IP 주소가 없습니다. 호스트 서브넷의 기본 이름이 public-subnet일 뿐입니다. SCC는 외부 네트워크에서 Databricks 작업 영역 컴퓨팅 인스턴스 중 하나로 SSH와 같은 트래픽이 들어오지 않도록 합니다.

배포 후 서브넷 크기를 조정하거나 변경할 수 있나요?

예, 배포 후 서브넷 크기를 조정하거나 변경할 수 있습니다. 가상 네트워크를 변경하거나 서브넷 이름을 변경하는 것도 가능합니다. (게이트된 공개 미리 보기). Azure 지원팀에 문의하여 서브넷 크기 조정을 위한 지원 사례를 제출해 주세요.

배포 후 가상 네트�워크를 교체/변경할 수 있나요?

예, 공개 문서를 참조하세요.

(이 글은 AI의 도움을 받아 번역되었습니다. 원문이 궁금하시다면 여기를 클릭해 주세요)

최신 게시물을 이메일로 받아보세요

블로그를 구독하고 최신 게시물을 이메일로 받아보세요.