What is a Neural Network?

A computational model inspired by biological neural systems, in which interconnected nodes arranged in layers learn patterns by adjusting weights during training.

- A neural network is a computing system made up of interconnected layers of nodes that learn patterns in data by adjusting the weights between neurons.

- Different neural network architectures, such as feedforward, recurrent and convolutional networks, are used for tasks like classification, speech and image recognition and natural language processing.

- Choosing a neural network architecture involves deciding how many layers and neurons you need and how those layers connect, based on the problem and the data.
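
The idea of learning by adjusting weights can be sketched with a single artificial neuron trained by the classic perceptron learning rule. This is a minimal, hypothetical example, not a description of how any particular library trains networks: the function names, the learning rate `lr` and the AND-gate training data are assumptions chosen for illustration.

```python
# A single artificial neuron (perceptron) that learns logical AND
# by repeatedly nudging its weights toward the correct answers.

def step(z):
    """Threshold activation: output 1 if the weighted sum is positive, else 0."""
    return 1 if z > 0 else 0


def predict(weights, bias, x):
    # Weighted sum of the inputs plus a bias, passed through the activation
    return step(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_perceptron(samples, lr=0.1, epochs=20):
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            # Perceptron rule: move each weight in proportion to its input and the error
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Hypothetical training data: the truth table of AND
AND_DATA = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

weights, bias = train_perceptron(AND_DATA)
print([predict(weights, bias, x) for x, _ in AND_DATA])  # [0, 0, 0, 1]
```

After a few passes over the four examples the weights stop changing, because every prediction is already correct. Modern networks replace the step function and this update rule with differentiable activations and gradient descent, but the principle of nudging weights to reduce error is the same.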

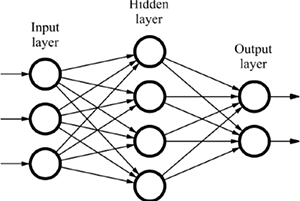

A neural network is a computing model whose layered structure resembles the networked structure of neurons in the brain. It consists of interconnected processing elements called neurons that work together to compute an output from the input. A neural network has an input layer and an output layer and, in most cases, one or more hidden layers of units that transform the input into something the output layer can use.
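
To see how a hidden layer transforms the input into something the output layer can use, consider XOR, which no single threshold neuron can compute. In the sketch below, the weights are hand-picked rather than learned and all names are hypothetical: one hidden unit behaves like OR, the other like NAND, and the output unit combines them with AND.

```python
# Hand-wired network: 2 inputs -> 2 hidden units -> 1 output, computing XOR.
# The weights are chosen by hand for illustration; nothing here is learned.

def step(z):
    return 1 if z > 0 else 0


def dense(inputs, weights, biases):
    """Fully connected layer: every unit sees a weighted sum of all inputs."""
    return [step(sum(w * x for w, x in zip(unit_w, inputs)) + b)
            for unit_w, b in zip(weights, biases)]


HIDDEN_W = [[1, 1], [-1, -1]]  # unit 0 acts like OR, unit 1 like NAND
HIDDEN_B = [-0.5, 1.5]
OUTPUT_W = [[1, 1]]            # output unit acts like AND of the hidden units
OUTPUT_B = [-1.5]


def xor_network(x):
    hidden = dense(x, HIDDEN_W, HIDDEN_B)         # input layer -> hidden layer
    return dense(hidden, OUTPUT_W, OUTPUT_B)[0]   # hidden layer -> output layer


INPUTS = [[0, 0], [0, 1], [1, 0], [1, 1]]
print([xor_network(x) for x in INPUTS])  # [0, 1, 1, 0]
```

For example, the input `[1, 0]` becomes the hidden representation `[1, 1]`, which the AND-like output unit maps to 1.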

Types of Neural Network Architectures

Neural networks, also known as artificial neural networks (ANNs), come in several architectures, each suited to different deep learning tasks. Here are some of the most common types:

Feed-Forward Neural Network

This is the most basic and most common architecture: information travels in only one direction, from the input layer through any hidden layers to the output layer, with no loops. It consists of an input layer, an output layer and, between them, one or more hidden layers. A network with more than one hidden layer is called a deep neural network.

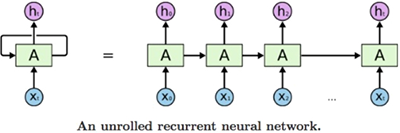
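
A forward pass through a feed-forward network can be sketched as a loop over layers, where each layer's outputs become the next layer's inputs and nothing flows backward. The layer sizes, seeded random initialization, sigmoid activation and helper names are illustrative assumptions; `is_deep` simply applies the definition of a deep network as one with more than one hidden layer.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def init_network(layer_sizes, seed=0):
    """One (weights, biases) pair per connection between consecutive layers."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    network = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        network.append((weights, biases))
    return network


def forward(network, x):
    """Information moves in one direction only: each layer feeds the next."""
    activations = x
    for weights, biases in network:
        activations = [sigmoid(sum(w * a for w, a in zip(row, activations)) + b)
                       for row, b in zip(weights, biases)]
    return activations


def is_deep(layer_sizes):
    # Every layer between the input and output layers is a hidden layer
    hidden_layers = len(layer_sizes) - 2
    return hidden_layers > 1


sizes = [3, 4, 4, 2]  # input layer, two hidden layers, output layer
net = init_network(sizes)
print(forward(net, [0.5, -1.0, 2.0]))      # two values, each between 0 and 1
print(is_deep(sizes), is_deep([3, 4, 2]))  # True False
```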

Recurrent Neural Network (RNN)

This is a more complex type of network, commonly used in speech recognition and natural language processing (NLP). An RNN performs the same computation for every element of a sequence, and each output depends on the previous computations, which the network carries forward in a hidden state.

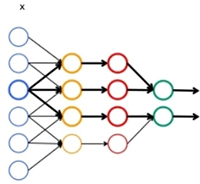
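
The recurrence can be sketched with a single-unit RNN in which a hidden state `h` carries information from earlier elements of a sequence to later ones. This is a simplified, hypothetical example: the scalar weights `w_x` and `w_h`, the bias `b` and the tanh activation are fixed assumptions rather than trained values, and practical RNNs use vectors and weight matrices.

```python
import math


def rnn(sequence, w_x=0.8, w_h=0.5, b=0.0):
    """Single-unit RNN: the same weights are reused at every time step."""
    h = 0.0  # hidden state, carried from one element of the sequence to the next
    outputs = []
    for x_t in sequence:
        # The new state combines the current input with the previous state
        h = math.tanh(w_x * x_t + w_h * h + b)
        outputs.append(h)
    return outputs


seq = [1.0, 0.0, -1.0]
print(rnn(seq))
print(rnn(list(reversed(seq))))
```

The second output is nonzero even though its input is 0.0, because the state from the first step carries over; reversing the sequence changes the final output for the same reason.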

Convolutional Neural Network (ConvNets or CNNs)

A CNN passes data through several layers of filters, extracting features that are then used to sort the input into categories. CNNs have proven very effective in areas such as image recognition, natural language processing and classification. A convolutional neural network is made of an input layer, an output layer and hidden layers that typically include convolutional layers, pooling layers, fully connected layers and normalization layers.
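
The convolution and pooling steps can be sketched on a tiny grayscale image. In this hypothetical example, a hand-picked 3×3 vertical-edge kernel stands in for a learned filter (a real CNN learns its kernels during training), followed by a ReLU activation and 2×2 max pooling; fully connected and normalization layers are omitted.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution, computed as cross-correlation like most CNN libraries."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


def relu(feature_map):
    # Keep positive responses, zero out negative ones
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool(feature_map, size=2):
    """Non-overlapping max pooling: keep the strongest response in each window."""
    return [[max(feature_map[i + a][j + b] for a in range(size) for b in range(size))
             for j in range(0, len(feature_map[0]) - size + 1, size)]
            for i in range(0, len(feature_map) - size + 1, size)]


# Hypothetical 6x6 image: dark (0) in columns 0-1, bright (1) from column 2 onward
IMAGE = [[0, 0, 1, 1, 1, 1] for _ in range(6)]
# Hand-picked vertical-edge kernel standing in for a learned filter
KERNEL = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]

features = relu(conv2d(IMAGE, KERNEL))  # 4x4 feature map
pooled = max_pool(features)             # 2x2 after pooling
print(pooled)  # [[3, 0], [3, 0]]
```

The edge response survives only in the left half of the pooled map, where the image changes from 0 to 1, while the map shrinks from 4×4 to 2×2.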

There are at least a dozen other kinds of neural networks, including symmetrically connected networks such as Boltzmann machines and Hopfield networks. Choosing the right network depends on the data you have to train it with, as well as on the specific application you have in mind.
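
As one example of a symmetrically connected network, a Hopfield network can be sketched in a few lines: weights are set with a Hebbian rule so that `w[i][j] == w[j][i]`, and recall repeatedly updates each unit to the sign of its weighted input for a fixed number of sweeps, by which point the state has typically settled on a stored pattern. The two 8-unit patterns, the sweep count and the function names are assumptions for illustration; Boltzmann machines, which use stochastic units, are not shown.

```python
def train_hopfield(patterns):
    """Hebbian rule: symmetric weights (w[i][j] == w[j][i]) and no self-connections."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w


def recall(w, state, sweeps=5):
    """Asynchronous updates: each unit in turn takes the sign of its weighted input."""
    s = list(state)
    for _ in range(sweeps):
        for i in range(len(s)):
            h = sum(w[i][j] * s[j] for j in range(len(s)))
            s[i] = 1 if h >= 0 else -1
    return s


# Two hypothetical 8-unit patterns of +1/-1 values to memorize
STORED = [[1, -1, 1, -1, 1, -1, 1, -1],
          [1, 1, 1, 1, -1, -1, -1, -1]]
w = train_hopfield(STORED)

noisy = list(STORED[0])
noisy[0] = -noisy[0]  # corrupt one unit
print(recall(w, noisy) == STORED[0])  # True
```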
