Accelerating developers by ditching the data center

Guest blog by R Tyler Croy, Director of Platform Engineering at Scribd

People don’t tend to get excited about the data platform. It is often regarded much like road infrastructure: nobody thinks much about how vital it is for them to get from points A to B, unless it’s terribly bad. Imagine my surprise when I started to hear from users: “Wow this is amazing,” or “I can’t wait for my whole team to adopt it”, or “we’re really excited about it!” The enthusiasm doesn’t make the migration project any less challenging, but it certainly makes it more enjoyable.

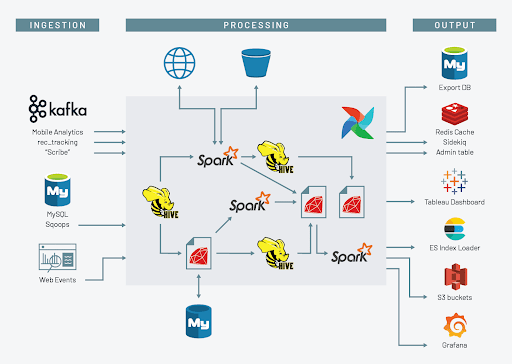

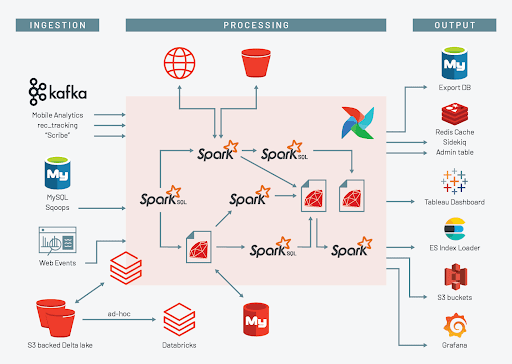

At Scribd, we’ve had a “conventional data platform” for some time: Hadoop mixed with HDFS and a smattering of Hive. Over time the needs of the business have changed and we now need more machine learning, more real-time data processing, and more support for teams collaborating to deliver new data products; we needed something better than the “conventional data platform.” Our new data platform is a combination of Airflow, Databricks, Delta Lake, and AWS Glue Catalog, a powerful suite of tools that has already improved our development velocity and collaboration significantly. The transition from “the old” to “the new” has been peppered with equal parts successes and stumbles as we re-platform and rid ourselves of complexity and technical debt.

The legacy data platform is conventional in more than just the technologies we have deployed: it also runs on fixed data center infrastructure. A static set of machines sitting in a data center rapidly churns through data during peak batch workloads, then wastes money and energy when idle. As the company and our needs have grown, the “peak” of the data platform became more and more noticeable, and much more painful for developers. Some would fire off queries or jobs prior to heading out to lunch, or at the end of the day, with hopes that they would get their results upon their return. A particularly grievous anti-pattern I noticed shortly after my arrival at the company: some machine learning engineers would prepare their data sets, dump them into a personal AWS S3 bucket, launch GPU-capable instances, train their models, and then submit reimbursement requests to their manager at the end of the month.

If the developer horror stories weren’t enough, operationally things were arguably worse! Many conventional data platform technologies are difficult to combine with automation tools such as Chef, so our legacy data platform lacked the proper management that the rest of our production infrastructure enjoyed. Every time we added more machines to the environment, the process would take a day or two depending on the request. As a result, we would only add nodes when we really needed them, or when drive and system failures required it.

Our conventional data platform was wasting both developers’ time and infrastructure engineers’ time. I shudder thinking about what we could have done if all those talented people were instead working on projects that advanced our business objectives.

Modernizing our data infrastructure: evaluating options

By mid-2019 Scribd had hired up a “Core Platform” team, chartered with building out a “Real-time Data Platform”, and a completely new team of data scientists and machine learning engineers. The unanimous agreement from all parties invested in the legacy data platform was that we had to get “to the cloud.” Coupled with a company-wide initiative to migrate into AWS, our list of potential options was relatively short. We needed a data platform that worked well on AWS, relied on S3, would run our queries and Spark jobs well, could provide some semblance of self-service for developers, and could enable new machine-learning workloads we hadn’t even conceived of yet.

The options I looked at could be placed into two categories:

- “Looks like a conventional data platform, but with S3, and in the cloud!” (not very compelling).

- Databricks

Since we knew that our storage options were going to involve AWS S3 in some form or fashion, we had already started to look at how our massive data warehouse and workloads would interoperate with S3. For data platform usage, S3 is a great tool but it’s not as simple as its name might suggest. The eventually consistent nature of S3 can cause a number of problems for Parquet files accessed through a table-like interface (e.g. Hive). Some of those issues can be worked around with S3Guard, but the additional architectural complexity made us a bit wary. Around this time we were lucky to have noticed the open sourcing of Delta Lake by Databricks. The initial evaluation of Delta Lake blew us away; we had found our storage layer.

Delta Lake actually ended up being the gateway to Databricks, which wasn’t part of our initial compute platform evaluation. As we dug into Databricks more and more we found two killer features:

- Databricks Notebooks, which proved invaluable for developers and analysts who had until then been collaborating by sharing queries to copy and paste into Hue.

- The optimized Spark runtime in Databricks, which helps execute queries and jobs even faster, helping us get developers their results as soon as possible.

Migrating Spark workloads to the cloud: calculating costs and benefits

In AWS, time very directly equals money. The sooner you can shut down a machine in AWS, the less money you will spend. With some help from our sales team at Databricks, I was able to come up with a cost model for our existing Spark workloads if we were to fork-lift them directly into AWS without significant changes. They claimed an optimization of 30-50% for most traditional Spark workloads. “I’d say 30% just to be conservative,” they mentioned. Out of curiosity, I refactored my cost model to account for the price of Databricks and the potential Spark job optimizations. After tweaking the numbers I discovered that at a 17% optimization rate, Databricks would reduce our AWS infrastructure cost so much that it would pay for the cost of the Databricks platform itself.

After our initial evaluation, I was already sold on the features and developer velocity improvements Databricks would offer. When I ran the numbers in my model, I learned that I couldn’t afford not to adopt Databricks!
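The break-even reasoning above can be sketched in a few lines. This is a minimal illustration and not the actual cost model: it assumes both the EC2 charge and the platform charge accrue per instance-hour, so a faster job reduces both. The function names and all rates below are hypothetical placeholders, not Scribd’s or Databricks’ real figures.

```python
def break_even_speedup(ec2_rate: float, platform_rate: float) -> float:
    """Fraction of runtime that must be saved so that EC2 plus the platform
    fee costs the same as running on plain EC2.

    Solves (1 - r) * (ec2_rate + platform_rate) == ec2_rate for r.
    """
    if ec2_rate <= 0 or platform_rate < 0:
        raise ValueError("rates must be positive")
    return platform_rate / (ec2_rate + platform_rate)


def monthly_cost(instance_hours: float, ec2_rate: float,
                 platform_rate: float = 0.0, speedup: float = 0.0) -> float:
    """Total cost when jobs finish `speedup` (0..1) sooner than the baseline."""
    return instance_hours * (1.0 - speedup) * (ec2_rate + platform_rate)


# Hypothetical inputs, chosen only to make the arithmetic easy to follow.
EC2_RATE = 2.00        # dollars per instance-hour (assumption)
PLATFORM_RATE = 0.50   # dollars per instance-hour for the platform (assumption)
HOURS = 10_000         # baseline instance-hours per month (assumption)

baseline = monthly_cost(HOURS, EC2_RATE)
threshold = break_even_speedup(EC2_RATE, PLATFORM_RATE)
at_30_percent = monthly_cost(HOURS, EC2_RATE, PLATFORM_RATE, speedup=0.30)

print(f"baseline cost:       ${baseline:,.0f}")
print(f"break-even speedup:  {threshold:.0%}")
print(f"cost at 30% speedup: ${at_30_percent:,.0f}")
```

Under this simplified model, any optimization rate above the break-even threshold means the platform more than pays for itself, which is the shape of the conclusion described above.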

In production

The road from our data center-based data platform to Databricks is a long one, which we’re still traveling. As of today, we have backfilled our entire data warehouse into Delta Lake, a migration which conveniently addressed the egregious small files problem we had in HDFS. We have a little bit of custom tooling which is keeping data in sync from the data center to Delta Lake while we begin to move over some of our heavier-weight batch processing tasks. Despite the long road of batch-task migration ahead, we already have new projects deploying directly on top of Databricks:

- Ad hoc internal user queries, once serviced by Hue and Hive, are being replaced en masse by powerful Delta Cache-enabled clusters. Those who previously spent much of their time in Hue are already excitedly collaborating with their peers via shared notebooks.

- New Spark Streaming/Delta Lake projects are already in production. Workloads that were previously not possible have already been developed and deployed. For some data streams we have flowing into Kafka, we have deployed Spark Streaming applications to bring that data directly into Delta Lake, where jobs and users are querying data within minutes of its creation. For some of these workloads, users previously had to wait for 24 hour batch cycles to complete, but now they’re getting fresh production data from Delta Lake within 2-3 minutes.

When people see this in action, their thinking immediately turns to “what data can I change to streams to get more real-time insight?” Suddenly there are multiple projects in team roadmaps which include either “produce data stream for X” or “process data stream from Y”.
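For readers unfamiliar with the Kafka-to-Delta Lake pattern described above, here is a minimal PySpark sketch. It assumes the Structured Streaming API and a Spark session that already has the Kafka and Delta Lake connectors available; the function names, broker address, topic, paths, and one-minute trigger are hypothetical and are not taken from Scribd’s actual jobs.

```python
def kafka_source_options(bootstrap_servers: str, topic: str) -> dict:
    """Options for Spark's Kafka source (broker and topic are placeholders)."""
    return {
        "kafka.bootstrap.servers": bootstrap_servers,
        "subscribe": topic,
        "startingOffsets": "latest",
    }


def start_kafka_to_delta(spark, bootstrap_servers: str, topic: str,
                         table_path: str, checkpoint_path: str):
    """Continuously append Kafka records to a Delta table.

    `spark` is an existing SparkSession with the Kafka and Delta Lake
    connectors on its classpath (an assumption of this sketch).
    """
    raw = (
        spark.readStream.format("kafka")
        .options(**kafka_source_options(bootstrap_servers, topic))
        .load()
    )
    # Kafka delivers keys and values as bytes; cast them to strings here.
    events = raw.selectExpr(
        "CAST(key AS STRING) AS key",
        "CAST(value AS STRING) AS value",
        "timestamp",
    )
    return (
        events.writeStream.format("delta")
        .outputMode("append")
        # The checkpoint lets the query resume where it left off after a restart.
        .option("checkpointLocation", checkpoint_path)
        # Micro-batch interval; an illustrative value, not a recommendation.
        .trigger(processingTime="1 minute")
        .start(table_path)
    )
```

A call might look like `start_kafka_to_delta(spark, "broker:9092", "events", "s3://bucket/delta/events", "s3://bucket/checkpoints/events")`, where every argument is a placeholder. Once the query is running, batch jobs and notebooks can read the same Delta table while new records continue to arrive.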

To me, success for a team delivering data infrastructure and tooling is when your users are excited to answer their questions using the infrastructure, and when they start to conceive of wholly new problems to solve with the platform. Suffice it to say, I’m already pleased with our results!

Not everything is as rosy as I would like, however: our administrative policies are still lagging behind the exuberance different teams have had with adopting Databricks. We have given developers fantastic tools to solve their problems, but we don’t yet have policies in place to prevent those users from launching oversized or overpriced clusters. The capabilities to do so exist in the platform, but when considering the cost of some EC2 waste compared to people’s time, we have erred on the side of getting clusters into people’s hands as quickly as possible for now.
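As one illustration of what such a guardrail could look like, the sketch below builds a policy document in the style of Databricks cluster policy definitions. The post does not say which mechanism would be used, so treat this as an assumption: the attribute names and rule types follow the cluster policy format as commonly documented and should be checked against current Databricks documentation, and the instance types and limits are hypothetical.

```python
import json


def build_cluster_policy(allowed_node_types, max_workers, idle_minutes):
    """Return a policy definition limiting cluster size, type, and idle time.

    The keys follow the Databricks cluster policy definition format as I
    understand it (an assumption; verify before relying on it).
    """
    return {
        # Only allow instance types from an approved list.
        "node_type_id": {"type": "allowlist", "values": list(allowed_node_types)},
        # Cap how far an autoscaling cluster may grow.
        "autoscale.max_workers": {"type": "range", "maxValue": max_workers},
        # Force idle clusters to shut down after a fixed number of minutes.
        "autotermination_minutes": {"type": "fixed", "value": idle_minutes},
    }


# Hypothetical limits, for illustration only.
policy = build_cluster_policy(["m5.xlarge", "m5.2xlarge"], max_workers=8, idle_minutes=30)
print(json.dumps(policy, indent=2))
```

The resulting JSON is what would be supplied as the policy definition; how strict to make it is exactly the trade-off between EC2 waste and people’s time discussed above.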

Doing it better next time around

Re-platforming a massive data infrastructure is not easy. Our biggest pain points to date have been self-inflicted. We had a significant investment in Hive and Hive-based queries, with an assortment of custom UDFs which all needed to be migrated over to Spark and Spark SQL. We wrote some tools to help us automatically convert Hive queries and templates to Spark SQL, which managed to automate converting ~80% of those Hive workloads. The other 20% we have to convert manually, which is frustrating work.

The migration process also uncovered more technical debt than I’m proud to admit. Numerous Spark workloads still rely on Spark 1 (!), some jobs bypass Hive’s table interfaces and speak directly to HDFS instead (!), and we are testing big batch jobs for which the original developers neglected to write any tests (!).

Personally, I’m looking forward to the day when the first new employee joins who never has to see the legacy data platform. They will be gleefully unaware that there was once a time when you had to copy and paste query snippets into chat, wait until tomorrow for fresh data, or waste hours idling while your jobs completed.

Hear R. Tyler Croy talk about Scribd’s transition into a streaming cloud-based data platform in their upcoming session at SPARK + AI Summit. Register for free here.

Scribd is also hiring talented remote engineers to help change the way the world reads. Learn more at tech.scribd.com.
